Upload folder using huggingface_hub

- .gitattributes +1 -0

- README.md +106 -5

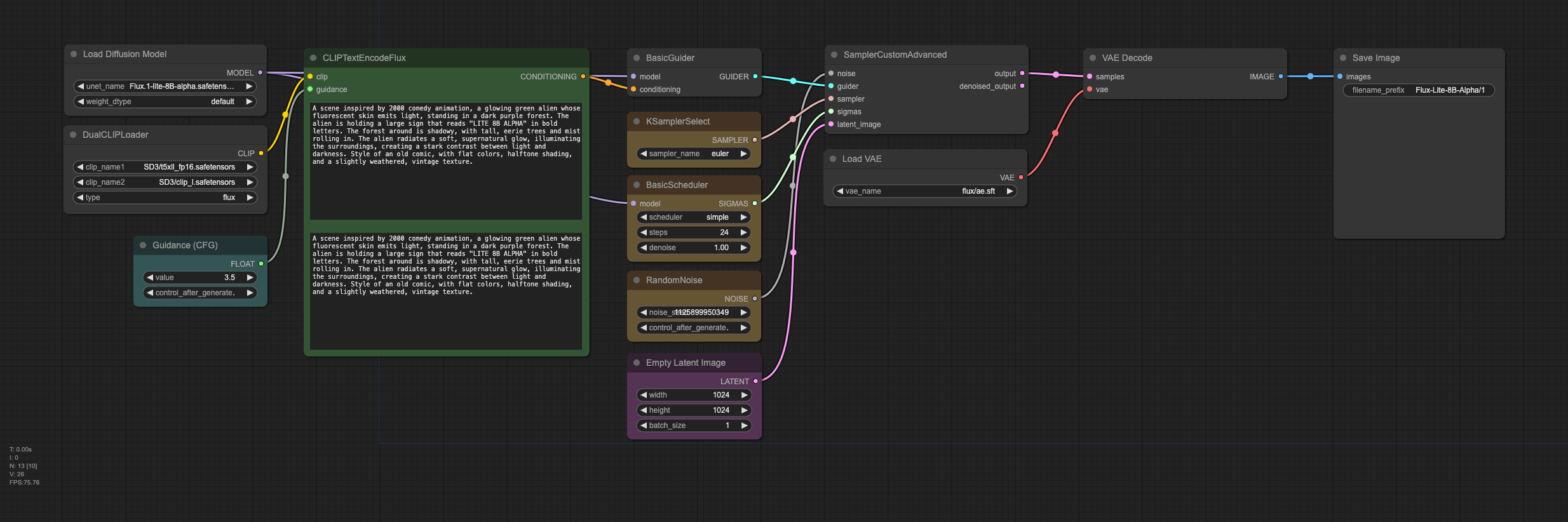

- comfy/flux.1-lite_workflow.json +652 -0

- comfy/flux.1-lite_workflow.png +0 -0

- flux.1-lite-8B.safetensors +3 -0

- model_index.json +33 -0

- sample_images/flux1-lite-8B_sample.png +3 -0

- scheduler/scheduler_config.json +16 -0

- text_encoder/config.json +25 -0

- text_encoder/model.safetensors +3 -0

- text_encoder_2/config.json +32 -0

- text_encoder_2/model-00001-of-00002.safetensors +3 -0

- text_encoder_2/model-00002-of-00002.safetensors +3 -0

- text_encoder_2/model.safetensors.index.json +226 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +30 -0

- tokenizer/tokenizer_config.json +31 -0

- tokenizer/vocab.json +0 -0

- tokenizer_2/special_tokens_map.json +125 -0

- tokenizer_2/spiece.model +3 -0

- tokenizer_2/tokenizer.json +0 -0

- tokenizer_2/tokenizer_config.json +941 -0

- transformer/config.json +1 -0

- transformer/diffusion_pytorch_model-00001-of-00002.safetensors +3 -0

- transformer/diffusion_pytorch_model-00002-of-00002.safetensors +3 -0

- transformer/diffusion_pytorch_model.safetensors.index.json +815 -0

- vae/config.json +38 -0

- vae/diffusion_pytorch_model.safetensors +3 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

sample_images/flux1-lite-8B_sample.png filter=lfs diff=lfs merge=lfs -text

|

README.md

CHANGED

|

@@ -1,5 +1,106 @@

|

|

| 1 |

-

---

|

| 2 |

-

license: other

|

| 3 |

-

license_name: flux-1-dev-non-commercial-license

|

| 4 |

-

license_link: https://huggingface.co/black-forest-labs/FLUX.1-dev/

|

| 5 |

-

|

| 1 |

+

---

|

| 2 |

+

license: other

|

| 3 |

+

license_name: flux-1-dev-non-commercial-license

|

| 4 |

+

license_link: https://huggingface.co/black-forest-labs/FLUX.1-dev/resolve/main/LICENSE.md

|

| 5 |

+

base_model:

|

| 6 |

+

- black-forest-labs/FLUX.1-dev

|

| 7 |

+

pipeline_tag: text-to-image

|

| 8 |

+

library_name: diffusers

|

| 9 |

+

tags:

|

| 10 |

+

- flux

|

| 11 |

+

- text-to-image

|

| 12 |

+

---

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

# Flux.1 Lite

|

| 17 |

+

|

| 18 |

+

We are thrilled to announce the alpha release of Flux.1 Lite, an 8B parameter transformer model distilled from the FLUX.1-dev model. This version uses 7 GB less RAM and runs 23% faster while maintaining the same precision (bfloat16) as the original model.

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

## Text-to-Image

|

| 23 |

+

|

| 24 |

+

Flux.1 Lite is ready to unleash your creativity! For the best results, we strongly **recommend using a `guidance_scale` of 3.5 and setting `n_steps` between 22 and 30**.

|

| 25 |

+

|

| 26 |

+

```python

|

| 27 |

+

import torch

|

| 28 |

+

from diffusers import FluxPipeline

|

| 29 |

+

|

| 30 |

+

base_model_id = "Freepik/flux.1-lite-8B-alpha"

|

| 31 |

+

torch_dtype = torch.bfloat16

|

| 32 |

+

device = "cuda"

|

| 33 |

+

|

| 34 |

+

# Load the pipe

|

| 35 |

+

model_id = base_model_id  # reuse the repo id defined above

|

| 36 |

+

pipe = FluxPipeline.from_pretrained(

|

| 37 |

+

model_id, torch_dtype=torch_dtype

|

| 38 |

+

).to(device)

|

| 39 |

+

|

| 40 |

+

# Inference

|

| 41 |

+

prompt = "A close-up image of a green alien with fluorescent skin in the middle of a dark purple forest"

|

| 42 |

+

|

| 43 |

+

guidance_scale = 3.5 # Keep guidance_scale at 3.5

|

| 44 |

+

n_steps = 28

|

| 45 |

+

seed = 11

|

| 46 |

+

|

| 47 |

+

with torch.inference_mode():

|

| 48 |

+

    image = pipe(

|

| 49 |

+

        prompt=prompt,

|

| 50 |

+

        generator=torch.Generator(device="cpu").manual_seed(seed),

|

| 51 |

+

        num_inference_steps=n_steps,

|

| 52 |

+

        guidance_scale=guidance_scale,

|

| 53 |

+

        height=1024,

|

| 54 |

+

        width=1024,

|

| 55 |

+

    ).images[0]

|

| 56 |

+

image.save("output.png")

|

| 57 |

+

```

|

| 58 |

+

|

| 59 |

+

## Motivation

|

| 60 |

+

|

| 61 |

+

Inspired by [Ostris](https://ostris.com/2024/09/07/skipping-flux-1-dev-blocks/)'s findings, we analyzed the mean squared error (MSE) between the input and output of each block to quantify each block's contribution to the final result, revealing significant variability.

|

| 62 |

+

|

| 63 |

+

|

| 64 |

+

|

| 65 |

+

|

| 66 |

+

|

| 67 |

+

As Ostris pointed out, not all blocks contribute equally. While skipping just one of the early MMDiT or late DiT blocks can significantly impact model performance, skipping any single block in between has no significant impact on final image quality.

|
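The per-block MSE analysis described in the Motivation text can be illustrated with a toy sketch. This is not the authors' code: the "blocks" are hypothetical residual functions (`make_residual_block` and its `scale` values are invented for illustration), and plain Python lists stand in for hidden states. It only shows the idea of measuring how much each block changes its input and ranking blocks by that change.

```python
import math

def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def make_residual_block(scale):
    """Hypothetical stand-in for a transformer block: x + scale * tanh(x)."""
    def block(x):
        return [v + scale * math.tanh(v) for v in x]
    return block

def per_block_mse(blocks, x):
    """Run x through the blocks in order, recording MSE(input, output) per block."""
    scores = []
    for block in blocks:
        y = block(x)
        scores.append(mse(x, y))
        x = y  # the output of this block feeds the next one
    return scores

# Toy stack: large updates at both ends, near-identity blocks in the middle.
scales = [0.9, 0.05, 0.02, 0.05, 0.8]
blocks = [make_residual_block(s) for s in scales]
scores = per_block_mse(blocks, [0.5, -1.0, 2.0, 0.1])

# Blocks with the smallest input/output MSE change the signal least,
# so under this heuristic they are the first candidates to skip.
ranked = sorted(range(len(scores)), key=lambda i: scores[i])
for i in ranked:
    print(f"block {i}: mse={scores[i]:.5f}")
```

In a real model the same measurement would presumably be taken on the hidden states entering and leaving each transformer block, averaged over many prompts and timesteps.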

| 68 |

+

|

| 69 |

+

|

| 70 |

+

|

| 71 |

+

|

| 72 |

+

## Future work

|

| 73 |

+

|

| 74 |

+

Stay tuned! Our goal is to distill FLUX.1-dev further until it can run smoothly on 24 GB consumer-grade GPU cards, maintaining its original precision (bfloat16), and running even faster, making high-quality AI models accessible to everyone.

|

| 75 |

+

|

| 76 |

+

## ComfyUI

|

| 77 |

+

|

| 78 |

+

We've also crafted a ComfyUI workflow to make using Flux.1 Lite even more seamless! Find it in `comfy/flux.1-lite_workflow.json`.

|

| 79 |

+

|

| 80 |

+

|

| 81 |

+

The safetensors checkpoint is available here: [flux.1-lite-8B-alpha.safetensors](flux.1-lite-8B-alpha.safetensors)

|

| 82 |

+

|

| 83 |

+

## Try it out at Freepik!

|

| 84 |

+

|

| 85 |

+

Our [AI generator](https://www.freepik.com/pikaso/ai-image-generator) is now powered by Flux.1 Lite!

|

| 86 |

+

|

| 87 |

+

## 🔥 News 🔥

|

| 88 |

+

|

| 89 |

+

* Oct 23, 2024. Alpha 8B checkpoint is publicly available on [HuggingFace Repo](https://huggingface.co/Freepik/flux.1-lite-8B-alpha).

|

| 90 |

+

|

| 91 |

+

## Citation

|

| 92 |

+

|

| 93 |

+

If you find our work helpful, please cite it!

|

| 94 |

+

|

| 95 |

+

```bibtex

|

| 96 |

+

@article{flux1-lite,

|

| 97 |

+

title={Flux.1 Lite: Distilling Flux1.dev for Efficient Text-to-Image Generation},

|

| 98 |

+

author={Daniel Verdú, Javier Martín},

|

| 99 |

+

email={[email protected], [email protected]},

|

| 100 |

+

year={2024},

|

| 101 |

+

}

|

| 102 |

+

```

|

| 103 |

+

|

| 104 |

+

|

| 105 |

+

|

| 106 |

+

|

comfy/flux.1-lite_workflow.json

ADDED

|

@@ -0,0 +1,652 @@

|

| 1 |

+

{

|

| 2 |

+

"last_node_id": 44,

|

| 3 |

+

"last_link_id": 66,

|

| 4 |

+

"nodes": [

|

| 5 |

+

{

|

| 6 |

+

"id": 11,

|

| 7 |

+

"type": "DualCLIPLoader",

|

| 8 |

+

"pos": {

|

| 9 |

+

"0": -497,

|

| 10 |

+

"1": 351

|

| 11 |

+

},

|

| 12 |

+

"size": {

|

| 13 |

+

"0": 320,

|

| 14 |

+

"1": 110

|

| 15 |

+

},

|

| 16 |

+

"flags": {},

|

| 17 |

+

"order": 0,

|

| 18 |

+

"mode": 0,

|

| 19 |

+

"inputs": [],

|

| 20 |

+

"outputs": [

|

| 21 |

+

{

|

| 22 |

+

"name": "CLIP",

|

| 23 |

+

"type": "CLIP",

|

| 24 |

+

"links": [

|

| 25 |

+

43

|

| 26 |

+

],

|

| 27 |

+

"slot_index": 0,

|

| 28 |

+

"shape": 3

|

| 29 |

+

}

|

| 30 |

+

],

|

| 31 |

+

"properties": {

|

| 32 |

+

"Node name for S&R": "DualCLIPLoader"

|

| 33 |

+

},

|

| 34 |

+

"widgets_values": [

|

| 35 |

+

"SD3/t5xll_fp16.safetensors",

|

| 36 |

+

"SD3/clip_l.safetensors",

|

| 37 |

+

"flux"

|

| 38 |

+

]

|

| 39 |

+

},

|

| 40 |

+

{

|

| 41 |

+

"id": 16,

|

| 42 |

+

"type": "KSamplerSelect",

|

| 43 |

+

"pos": {

|

| 44 |

+

"0": 390,

|

| 45 |

+

"1": 330

|

| 46 |

+

},

|

| 47 |

+

"size": {

|

| 48 |

+

"0": 210,

|

| 49 |

+

"1": 58

|

| 50 |

+

},

|

| 51 |

+

"flags": {},

|

| 52 |

+

"order": 1,

|

| 53 |

+

"mode": 0,

|

| 54 |

+

"inputs": [],

|

| 55 |

+

"outputs": [

|

| 56 |

+

{

|

| 57 |

+

"name": "SAMPLER",

|

| 58 |

+

"type": "SAMPLER",

|

| 59 |

+

"links": [

|

| 60 |

+

19

|

| 61 |

+

],

|

| 62 |

+

"shape": 3

|

| 63 |

+

}

|

| 64 |

+

],

|

| 65 |

+

"properties": {

|

| 66 |

+

"Node name for S&R": "KSamplerSelect"

|

| 67 |

+

},

|

| 68 |

+

"widgets_values": [

|

| 69 |

+

"euler"

|

| 70 |

+

],

|

| 71 |

+

"color": "#432",

|

| 72 |

+

"bgcolor": "#653"

|

| 73 |

+

},

|

| 74 |

+

{

|

| 75 |

+

"id": 17,

|

| 76 |

+

"type": "BasicScheduler",

|

| 77 |

+

"pos": {

|

| 78 |

+

"0": 390,

|

| 79 |

+

"1": 430

|

| 80 |

+

},

|

| 81 |

+

"size": {

|

| 82 |

+

"0": 210,

|

| 83 |

+

"1": 106

|

| 84 |

+

},

|

| 85 |

+

"flags": {},

|

| 86 |

+

"order": 8,

|

| 87 |

+

"mode": 0,

|

| 88 |

+

"inputs": [

|

| 89 |

+

{

|

| 90 |

+

"name": "model",

|

| 91 |

+

"type": "MODEL",

|

| 92 |

+

"link": 38,

|

| 93 |

+

"slot_index": 0

|

| 94 |

+

}

|

| 95 |

+

],

|

| 96 |

+

"outputs": [

|

| 97 |

+

{

|

| 98 |

+

"name": "SIGMAS",

|

| 99 |

+

"type": "SIGMAS",

|

| 100 |

+

"links": [

|

| 101 |

+

20

|

| 102 |

+

],

|

| 103 |

+

"shape": 3

|

| 104 |

+

}

|

| 105 |

+

],

|

| 106 |

+

"properties": {

|

| 107 |

+

"Node name for S&R": "BasicScheduler"

|

| 108 |

+

},

|

| 109 |

+

"widgets_values": [

|

| 110 |

+

"simple",

|

| 111 |

+

24,

|

| 112 |

+

1

|

| 113 |

+

],

|

| 114 |

+

"color": "#432",

|

| 115 |

+

"bgcolor": "#653"

|

| 116 |

+

},

|

| 117 |

+

{

|

| 118 |

+

"id": 25,

|

| 119 |

+

"type": "RandomNoise",

|

| 120 |

+

"pos": {

|

| 121 |

+

"0": 390,

|

| 122 |

+

"1": 580

|

| 123 |

+

},

|

| 124 |

+

"size": {

|

| 125 |

+

"0": 210.0858612060547,

|

| 126 |

+

"1": 82

|

| 127 |

+

},

|

| 128 |

+

"flags": {},

|

| 129 |

+

"order": 2,

|

| 130 |

+

"mode": 0,

|

| 131 |

+

"inputs": [],

|

| 132 |

+

"outputs": [

|

| 133 |

+

{

|

| 134 |

+

"name": "NOISE",

|

| 135 |

+

"type": "NOISE",

|

| 136 |

+

"links": [

|

| 137 |

+

56

|

| 138 |

+

],

|

| 139 |

+

"shape": 3

|

| 140 |

+

}

|

| 141 |

+

],

|

| 142 |

+

"properties": {

|

| 143 |

+

"Node name for S&R": "RandomNoise"

|

| 144 |

+

},

|

| 145 |

+

"widgets_values": [

|

| 146 |

+

1125899950349,

|

| 147 |

+

"increment"

|

| 148 |

+

],

|

| 149 |

+

"color": "#432",

|

| 150 |

+

"bgcolor": "#653"

|

| 151 |

+

},

|

| 152 |

+

{

|

| 153 |

+

"id": 22,

|

| 154 |

+

"type": "BasicGuider",

|

| 155 |

+

"pos": {

|

| 156 |

+

"0": 390,

|

| 157 |

+

"1": 230

|

| 158 |

+

},

|

| 159 |

+

"size": {

|

| 160 |

+

"0": 210.91961669921875,

|

| 161 |

+

"1": 46

|

| 162 |

+

},

|

| 163 |

+

"flags": {},

|

| 164 |

+

"order": 9,

|

| 165 |

+

"mode": 0,

|

| 166 |

+

"inputs": [

|

| 167 |

+

{

|

| 168 |

+

"name": "model",

|

| 169 |

+

"type": "MODEL",

|

| 170 |

+

"link": 39,

|

| 171 |

+

"slot_index": 0

|

| 172 |

+

},

|

| 173 |

+

{

|

| 174 |

+

"name": "conditioning",

|

| 175 |

+

"type": "CONDITIONING",

|

| 176 |

+

"link": 55,

|

| 177 |

+

"slot_index": 1

|

| 178 |

+

}

|

| 179 |

+

],

|

| 180 |

+

"outputs": [

|

| 181 |

+

{

|

| 182 |

+

"name": "GUIDER",

|

| 183 |

+

"type": "GUIDER",

|

| 184 |

+

"links": [

|

| 185 |

+

30

|

| 186 |

+

],

|

| 187 |

+

"slot_index": 0,

|

| 188 |

+

"shape": 3

|

| 189 |

+

}

|

| 190 |

+

],

|

| 191 |

+

"properties": {

|

| 192 |

+

"Node name for S&R": "BasicGuider"

|

| 193 |

+

}

|

| 194 |

+

},

|

| 195 |

+

{

|

| 196 |

+

"id": 5,

|

| 197 |

+

"type": "EmptyLatentImage",

|

| 198 |

+

"pos": {

|

| 199 |

+

"0": 390,

|

| 200 |

+

"1": 710

|

| 201 |

+

},

|

| 202 |

+

"size": {

|

| 203 |

+

"0": 211.3397979736328,

|

| 204 |

+

"1": 106

|

| 205 |

+

},

|

| 206 |

+

"flags": {},

|

| 207 |

+

"order": 3,

|

| 208 |

+

"mode": 0,

|

| 209 |

+

"inputs": [],

|

| 210 |

+

"outputs": [

|

| 211 |

+

{

|

| 212 |

+

"name": "LATENT",

|

| 213 |

+

"type": "LATENT",

|

| 214 |

+

"links": [

|

| 215 |

+

62

|

| 216 |

+

],

|

| 217 |

+

"slot_index": 0

|

| 218 |

+

}

|

| 219 |

+

],

|

| 220 |

+

"properties": {

|

| 221 |

+

"Node name for S&R": "EmptyLatentImage"

|

| 222 |

+

},

|

| 223 |

+

"widgets_values": [

|

| 224 |

+

1024,

|

| 225 |

+

1024,

|

| 226 |

+

1

|

| 227 |

+

],

|

| 228 |

+

"color": "#323",

|

| 229 |

+

"bgcolor": "#535"

|

| 230 |

+

},

|

| 231 |

+

{

|

| 232 |

+

"id": 13,

|

| 233 |

+

"type": "SamplerCustomAdvanced",

|

| 234 |

+

"pos": {

|

| 235 |

+

"0": 701,

|

| 236 |

+

"1": 225

|

| 237 |

+

},

|

| 238 |

+

"size": {

|

| 239 |

+

"0": 320,

|

| 240 |

+

"1": 110

|

| 241 |

+

},

|

| 242 |

+

"flags": {},

|

| 243 |

+

"order": 10,

|

| 244 |

+

"mode": 0,

|

| 245 |

+

"inputs": [

|

| 246 |

+

{

|

| 247 |

+

"name": "noise",

|

| 248 |

+

"type": "NOISE",

|

| 249 |

+

"link": 56,

|

| 250 |

+

"slot_index": 0

|

| 251 |

+

},

|

| 252 |

+

{

|

| 253 |

+

"name": "guider",

|

| 254 |

+

"type": "GUIDER",

|

| 255 |

+

"link": 30,

|

| 256 |

+

"slot_index": 1

|

| 257 |

+

},

|

| 258 |

+

{

|

| 259 |

+

"name": "sampler",

|

| 260 |

+

"type": "SAMPLER",

|

| 261 |

+

"link": 19,

|

| 262 |

+

"slot_index": 2

|

| 263 |

+

},

|

| 264 |

+

{

|

| 265 |

+

"name": "sigmas",

|

| 266 |

+

"type": "SIGMAS",

|

| 267 |

+

"link": 20,

|

| 268 |

+

"slot_index": 3

|

| 269 |

+

},

|

| 270 |

+

{

|

| 271 |

+

"name": "latent_image",

|

| 272 |

+

"type": "LATENT",

|

| 273 |

+

"link": 62,

|

| 274 |

+

"slot_index": 4

|

| 275 |

+

}

|

| 276 |

+

],

|

| 277 |

+

"outputs": [

|

| 278 |

+

{

|

| 279 |

+

"name": "output",

|

| 280 |

+

"type": "LATENT",

|

| 281 |

+

"links": [

|

| 282 |

+

64

|

| 283 |

+

],

|

| 284 |

+

"slot_index": 0,

|

| 285 |

+

"shape": 3

|

| 286 |

+

},

|

| 287 |

+

{

|

| 288 |

+

"name": "denoised_output",

|

| 289 |

+

"type": "LATENT",

|

| 290 |

+

"links": [],

|

| 291 |

+

"slot_index": 1,

|

| 292 |

+

"shape": 3

|

| 293 |

+

}

|

| 294 |

+

],

|

| 295 |

+

"properties": {

|

| 296 |

+

"Node name for S&R": "SamplerCustomAdvanced"

|

| 297 |

+

}

|

| 298 |

+

},

|

| 299 |

+

{

|

| 300 |

+

"id": 8,

|

| 301 |

+

"type": "VAEDecode",

|

| 302 |

+

"pos": {

|

| 303 |

+

"0": 1108,

|

| 304 |

+

"1": 230

|

| 305 |

+

},

|

| 306 |

+

"size": {

|

| 307 |

+

"0": 320,

|

| 308 |

+

"1": 50

|

| 309 |

+

},

|

| 310 |

+

"flags": {},

|

| 311 |

+

"order": 11,

|

| 312 |

+

"mode": 0,

|

| 313 |

+

"inputs": [

|

| 314 |

+

{

|

| 315 |

+

"name": "samples",

|

| 316 |

+

"type": "LATENT",

|

| 317 |

+

"link": 64

|

| 318 |

+

},

|

| 319 |

+

{

|

| 320 |

+

"name": "vae",

|

| 321 |

+

"type": "VAE",

|

| 322 |

+

"link": 12

|

| 323 |

+

}

|

| 324 |

+

],

|

| 325 |

+

"outputs": [

|

| 326 |

+

{

|

| 327 |

+

"name": "IMAGE",

|

| 328 |

+

"type": "IMAGE",

|

| 329 |

+

"links": [

|

| 330 |

+

66

|

| 331 |

+

],

|

| 332 |

+

"slot_index": 0

|

| 333 |

+

}

|

| 334 |

+

],

|

| 335 |

+

"properties": {

|

| 336 |

+

"Node name for S&R": "VAEDecode"

|

| 337 |

+

}

|

| 338 |

+

},

|

| 339 |

+

{

|

| 340 |

+

"id": 44,

|

| 341 |

+

"type": "SaveImage",

|

| 342 |

+

"pos": {

|

| 343 |

+

"0": 1502,

|

| 344 |

+

"1": 230

|

| 345 |

+

},

|

| 346 |

+

"size": {

|

| 347 |

+

"0": 268.7469787597656,

|

| 348 |

+

"1": 270

|

| 349 |

+

},

|

| 350 |

+

"flags": {},

|

| 351 |

+

"order": 12,

|

| 352 |

+

"mode": 0,

|

| 353 |

+

"inputs": [

|

| 354 |

+

{

|

| 355 |

+

"name": "images",

|

| 356 |

+

"type": "IMAGE",

|

| 357 |

+

"link": 66

|

| 358 |

+

}

|

| 359 |

+

],

|

| 360 |

+

"outputs": [],

|

| 361 |

+

"properties": {},

|

| 362 |

+

"widgets_values": [

|

| 363 |

+

"Flux-Lite-8B-Alpha/1"

|

| 364 |

+

]

|

| 365 |

+

},

|

| 366 |

+

{

|

| 367 |

+

"id": 10,

|

| 368 |

+

"type": "VAELoader",

|

| 369 |

+

"pos": {

|

| 370 |

+

"0": 699,

|

| 371 |

+

"1": 389

|

| 372 |

+

},

|

| 373 |

+

"size": {

|

| 374 |

+

"0": 320,

|

| 375 |

+

"1": 60

|

| 376 |

+

},

|

| 377 |

+

"flags": {

|

| 378 |

+

"collapsed": false

|

| 379 |

+

},

|

| 380 |

+

"order": 4,

|

| 381 |

+

"mode": 0,

|

| 382 |

+

"inputs": [],

|

| 383 |

+

"outputs": [

|

| 384 |

+

{

|

| 385 |

+

"name": "VAE",

|

| 386 |

+

"type": "VAE",

|

| 387 |

+

"links": [

|

| 388 |

+

12

|

| 389 |

+

],

|

| 390 |

+

"slot_index": 0,

|

| 391 |

+

"shape": 3

|

| 392 |

+

}

|

| 393 |

+

],

|

| 394 |

+

"properties": {

|

| 395 |

+

"Node name for S&R": "VAELoader"

|

| 396 |

+

},

|

| 397 |

+

"widgets_values": [

|

| 398 |

+

"flux/ae.sft"

|

| 399 |

+

]

|

| 400 |

+

},

|

| 401 |

+

{

|

| 402 |

+

"id": 38,

|

| 403 |

+

"type": "PrimitiveNode",

|

| 404 |

+

"pos": {

|

| 405 |

+

"0": -387,

|

| 406 |

+

"1": 525

|

| 407 |

+

},

|

| 408 |

+

"size": {

|

| 409 |

+

"0": 210,

|

| 410 |

+

"1": 82

|

| 411 |

+

},

|

| 412 |

+

"flags": {},

|

| 413 |

+

"order": 5,

|

| 414 |

+

"mode": 0,

|

| 415 |

+

"inputs": [],

|

| 416 |

+

"outputs": [

|

| 417 |

+

{

|

| 418 |

+

"name": "FLOAT",

|

| 419 |

+

"type": "FLOAT",

|

| 420 |

+

"links": [

|

| 421 |

+

54

|

| 422 |

+

],

|

| 423 |

+

"slot_index": 0,

|

| 424 |

+

"widget": {

|

| 425 |

+

"name": "guidance"

|

| 426 |

+

}

|

| 427 |

+

}

|

| 428 |

+

],

|

| 429 |

+

"title": "Guidance (CFG)",

|

| 430 |

+

"properties": {

|

| 431 |

+

"Run widget replace on values": false

|

| 432 |

+

},

|

| 433 |

+

"widgets_values": [

|

| 434 |

+

3.5,

|

| 435 |

+

"fixed"

|

| 436 |

+

],

|

| 437 |

+

"color": "#233",

|

| 438 |

+

"bgcolor": "#355"

|

| 439 |

+

},

|

| 440 |

+

{

|

| 441 |

+

"id": 12,

|

| 442 |

+

"type": "UNETLoader",

|

| 443 |

+

"pos": {

|

| 444 |

+

"0": -496,

|

| 445 |

+

"1": 224

|

| 446 |

+

},

|

| 447 |

+

"size": {

|

| 448 |

+

"0": 317.64862060546875,

|

| 449 |

+

"1": 82.45046997070312

|

| 450 |

+

},

|

| 451 |

+

"flags": {},

|

| 452 |

+

"order": 6,

|

| 453 |

+

"mode": 0,

|

| 454 |

+

"inputs": [],

|

| 455 |

+

"outputs": [

|

| 456 |

+

{

|

| 457 |

+

"name": "MODEL",

|

| 458 |

+

"type": "MODEL",

|

| 459 |

+

"links": [

|

| 460 |

+

38,

|

| 461 |

+

39

|

| 462 |

+

],

|

| 463 |

+

"slot_index": 0,

|

| 464 |

+

"shape": 3

|

| 465 |

+

}

|

| 466 |

+

],

|

| 467 |

+

"properties": {

|

| 468 |

+

"Node name for S&R": "UNETLoader"

|

| 469 |

+

},

|

| 470 |

+

"widgets_values": [

|

| 471 |

+

"Flux.1-lite-8B-alpha.safetensors",

|

| 472 |

+

"default"

|

| 473 |

+

]

|

| 474 |

+

},

|

| 475 |

+

{

|

| 476 |

+

"id": 29,

|

| 477 |

+

"type": "CLIPTextEncodeFlux",

|

| 478 |

+

"pos": {

|

| 479 |

+

"0": -119,

|

| 480 |

+

"1": 230

|

| 481 |

+

},

|

| 482 |

+

"size": {

|

| 483 |

+

"0": 448.8125,

|

| 484 |

+

"1": 455.3394775390625

|

| 485 |

+

},

|

| 486 |

+

"flags": {},

|

| 487 |

+

"order": 7,

|

| 488 |

+

"mode": 0,

|

| 489 |

+

"inputs": [

|

| 490 |

+

{

|

| 491 |

+

"name": "clip",

|

| 492 |

+

"type": "CLIP",

|

| 493 |

+

"link": 43

|

| 494 |

+

},

|

| 495 |

+

{

|

| 496 |

+

"name": "guidance",

|

| 497 |

+

"type": "FLOAT",

|

| 498 |

+

"link": 54,

|

| 499 |

+

"slot_index": 2,

|

| 500 |

+

"widget": {

|

| 501 |

+

"name": "guidance"

|

| 502 |

+

}

|

| 503 |

+

}

|

| 504 |

+

],

|

| 505 |

+

"outputs": [

|

| 506 |

+

{

|

| 507 |

+

"name": "CONDITIONING",

|

| 508 |

+

"type": "CONDITIONING",

|

| 509 |

+

"links": [

|

| 510 |

+

55

|

| 511 |

+

],

|

| 512 |

+

"slot_index": 0,

|

| 513 |

+

"shape": 3

|

| 514 |

+

}

|

| 515 |

+

],

|

| 516 |

+

"properties": {

|

| 517 |

+

"Node name for S&R": "CLIPTextEncodeFlux"

|

| 518 |

+

},

|

| 519 |

+

"widgets_values": [

|

| 520 |

+

"A scene inspired by 2000 comedy animation, a glowing green alien whose fluorescent skin emits light, standing in a dark purple forest. The alien is holding a large sign that reads \"LITE 8B ALPHA\" in bold letters. The forest around is shadowy, with tall, eerie trees and mist rolling in. The alien radiates a soft, supernatural glow, illuminating the surroundings, creating a stark contrast between light and darkness. Style of an old comic, with flat colors, halftone shading, and a slightly weathered, vintage texture.\n\n",

|

| 521 |

+

"A scene inspired by 2000 comedy animation, a glowing green alien whose fluorescent skin emits light, standing in a dark purple forest. The alien is holding a large sign that reads \"LITE 8B ALPHA\" in bold letters. The forest around is shadowy, with tall, eerie trees and mist rolling in. The alien radiates a soft, supernatural glow, illuminating the surroundings, creating a stark contrast between light and darkness. Style of an old comic, with flat colors, halftone shading, and a slightly weathered, vintage texture.\n",

|

| 522 |

+

3.5

|

| 523 |

+

],

|

| 524 |

+

"color": "#232",

|

| 525 |

+

"bgcolor": "#353"

|

| 526 |

+

}

|

| 527 |

+

],

|

| 528 |

+

"links": [

|

| 529 |

+

[

|

| 530 |

+

12,

|

| 531 |

+

10,

|

| 532 |

+

0,

|

| 533 |

+

8,

|

| 534 |

+

1,

|

| 535 |

+

"VAE"

|

| 536 |

+

],

|

| 537 |

+

[

|

| 538 |

+

19,

|

| 539 |

+

16,

|

| 540 |

+

0,

|

| 541 |

+

13,

|

| 542 |

+

2,

|

| 543 |

+

"SAMPLER"

|

| 544 |

+

],

|

| 545 |

+

[

|

| 546 |

+

20,

|

| 547 |

+

17,

|

| 548 |

+

0,

|

| 549 |

+

13,

|

| 550 |

+

3,

|

| 551 |

+

"SIGMAS"

|

| 552 |

+

],

|

| 553 |

+

[

|

| 554 |

+

30,

|

| 555 |

+

22,

|

| 556 |

+

0,

|

| 557 |

+

13,

|

| 558 |

+

1,

|

| 559 |

+

"GUIDER"

|

| 560 |

+

],

|

| 561 |

+

[

|

| 562 |

+

38,

|

| 563 |

+

12,

|

| 564 |

+

0,

|

| 565 |

+

17,

|

| 566 |

+

0,

|

| 567 |

+

"MODEL"

|

| 568 |

+

],

|

| 569 |

+

[

|

| 570 |

+

39,

|

| 571 |

+

12,

|

| 572 |

+

0,

|

| 573 |

+

22,

|

| 574 |

+

0,

|

| 575 |

+

"MODEL"

|

| 576 |

+

],

|

| 577 |

+

[

|

| 578 |

+

43,

|

| 579 |

+

11,

|

| 580 |

+

0,

|

| 581 |

+

29,

|

| 582 |

+

0,

|

| 583 |

+

"CLIP"

|

| 584 |

+

],

|

| 585 |

+

[

|

| 586 |

+

54,

|

| 587 |

+

38,

|

| 588 |

+

0,

|

| 589 |

+

29,

|

| 590 |

+

1,

|

| 591 |

+

"FLOAT"

|

| 592 |

+

],

|

| 593 |

+

[

|

| 594 |

+

55,

|

| 595 |

+

29,

|

| 596 |

+

0,

|

| 597 |

+

22,

|

| 598 |

+

1,

|

| 599 |

+

"CONDITIONING"

|

| 600 |

+

],

|

| 601 |

+

[

|

| 602 |

+

56,

|

| 603 |

+

25,

|

| 604 |

+

0,

|

| 605 |

+

13,

|

| 606 |

+

0,

|

| 607 |

+

"NOISE"

|

| 608 |

+

],

|

| 609 |

+

[

|

| 610 |

+

62,

|

| 611 |

+

5,

|

| 612 |

+

0,

|

| 613 |

+

13,

|

| 614 |

+

4,

|

| 615 |

+

"LATENT"

|

| 616 |

+

],

|

| 617 |

+

[

|

| 618 |

+

64,

|

| 619 |

+

13,

|

| 620 |

+

0,

|

| 621 |

+

8,

|

| 622 |

+

0,

|

| 623 |

+

"LATENT"

|

| 624 |

+

],

|

| 625 |

+

[

|

| 626 |

+

66,

|

| 627 |

+

8,

|

| 628 |

+

0,

|

| 629 |

+

44,

|

| 630 |

+

0,

|

| 631 |

+

"IMAGE"

|

| 632 |

+

]

|

| 633 |

+

],

|

| 634 |

+

"groups": [],

|

| 635 |

+

"config": {},

|

| 636 |

+

"extra": {

|

| 637 |

+

"ds": {

|

| 638 |

+

"scale": 0.826446280991736,

|

| 639 |

+

"offset": [

|

| 640 |

+

804.0032486112497,

|

| 641 |

+

-74.69542723422043

|

| 642 |

+

]

|

| 643 |

+

},

|

| 644 |

+

"workspace_info": {

|

| 645 |

+

"id": "kzetqdSCzE7d9gP4zHck1",

|

| 646 |

+

"saveLock": false,

|

| 647 |

+

"cloudID": null,

|

| 648 |

+

"coverMediaPath": null

|

| 649 |

+

}

|

| 650 |

+

},

|

| 651 |

+

"version": 0.4

|

| 652 |

+

}

|
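To make the wiring in the workflow above easier to read, here is a small sketch that turns `links` entries into readable edges. It assumes each link is laid out as `[link_id, source_node, source_slot, target_node, target_slot, type]`; that reading is inferred from matching the link ids against the `inputs` and `outputs` of the nodes above. The embedded JSON is a trimmed excerpt (node ids and types only, three links), not the full file.

```python
import json

# Trimmed excerpt of the workflow: only "id"/"type" per node and three links.
workflow_json = """
{
  "nodes": [
    {"id": 10, "type": "VAELoader"},
    {"id": 8, "type": "VAEDecode"},
    {"id": 13, "type": "SamplerCustomAdvanced"},
    {"id": 44, "type": "SaveImage"}
  ],
  "links": [
    [12, 10, 0, 8, 1, "VAE"],
    [64, 13, 0, 8, 0, "LATENT"],
    [66, 8, 0, 44, 0, "IMAGE"]
  ]
}
"""

def describe_links(workflow):
    """Return one readable line per link: source node -> target node (data type)."""
    types = {node["id"]: node["type"] for node in workflow["nodes"]}
    lines = []
    for link_id, src, src_slot, dst, dst_slot, kind in workflow["links"]:
        lines.append(
            f"link {link_id}: {types[src]}[{src_slot}] -> {types[dst]}[{dst_slot}] ({kind})"
        )
    return lines

edges = describe_links(json.loads(workflow_json))
for line in edges:
    print(line)
```

Running the same function on the full file (loaded with `json.load`) should list all thirteen links, from the loaders through `SamplerCustomAdvanced` to `SaveImage`.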

comfy/flux.1-lite_workflow.png

ADDED

|

flux.1-lite-8B.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6f0cd86981ab38c5e3465f78318a0abb118ee9ebb3123d755e41b3e64c06f9ea

|

| 3 |

+

size 16326584928

|
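The three lines above are a Git LFS pointer, not the weights themselves. As a rough sanity check, the `size` field can be converted into a file size and an approximate parameter count. The sketch below assumes the checkpoint stores bfloat16 weights (2 bytes per parameter, as the README's precision claim suggests) and ignores the small safetensors header; `parse_lfs_pointer` is a helper written for this example.

```python
pointer_text = """version https://git-lfs.github.com/spec/v1
oid sha256:6f0cd86981ab38c5e3465f78318a0abb118ee9ebb3123d755e41b3e64c06f9ea
size 16326584928
"""

def parse_lfs_pointer(text):
    """Split each 'key value' line of a Git LFS pointer into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields

fields = parse_lfs_pointer(pointer_text)
size_bytes = int(fields["size"])
size_gib = size_bytes / 2**30

# Assumption: weights are bfloat16 (2 bytes each); header overhead is ignored.
approx_params_billion = size_bytes / 2 / 1e9

print(f"{size_gib:.2f} GiB, ~{approx_params_billion:.2f}B parameters")
```

Under those assumptions the estimate lands a little above 8 billion parameters, which is consistent with the "8B" in the model name.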

model_index.json

ADDED

|

@@ -0,0 +1,33 @@

|

| 1 |

+

{

|

| 2 |

+

"_class_name": "FluxPipeline",

|

| 3 |

+

"_diffusers_version": "0.32.0.dev0",

|

| 4 |

+

"_name_or_path": "black-forest-labs/FLUX.1-dev",

|

| 5 |

+

"scheduler": [

|

| 6 |

+

"diffusers",

|

| 7 |

+

"FlowMatchEulerDiscreteScheduler"

|

| 8 |

+

],

|

| 9 |

+

"text_encoder": [

|

| 10 |

+

"transformers",

|

| 11 |

+

"CLIPTextModel"

|

| 12 |

+

],

|

| 13 |

+

"text_encoder_2": [

|

| 14 |

+

"transformers",

|

| 15 |

+

"T5EncoderModel"

|

| 16 |

+

],

|

| 17 |

+

"tokenizer": [

|

| 18 |

+

"transformers",

|

| 19 |

+

"CLIPTokenizer"

|

| 20 |

+

],

|

| 21 |

+

"tokenizer_2": [

|

| 22 |

+

"transformers",

|

| 23 |

+

"T5TokenizerFast"

|

| 24 |

+

],

|

| 25 |

+

"transformer": [

|

| 26 |

+

"diffusers",

|

| 27 |

+

"FluxTransformer2DModel"

|

| 28 |

+

],

|

| 29 |

+

"vae": [

|

| 30 |

+

"diffusers",

|

| 31 |

+

"AutoencoderKL"

|

| 32 |

+

]

|

| 33 |

+

}

|
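
`model_index.json` maps each pipeline component to a `[library, class]` pair, with underscore-prefixed keys holding metadata. The sketch below separates the two using only the standard library; `split_model_index` is an illustrative helper and does not reproduce how diffusers itself parses the file.

```python
import json

# Contents of model_index.json as added in this commit.
MODEL_INDEX = json.loads("""
{
  "_class_name": "FluxPipeline",
  "_diffusers_version": "0.32.0.dev0",
  "_name_or_path": "black-forest-labs/FLUX.1-dev",
  "scheduler": ["diffusers", "FlowMatchEulerDiscreteScheduler"],
  "text_encoder": ["transformers", "CLIPTextModel"],
  "text_encoder_2": ["transformers", "T5EncoderModel"],
  "tokenizer": ["transformers", "CLIPTokenizer"],
  "tokenizer_2": ["transformers", "T5TokenizerFast"],
  "transformer": ["diffusers", "FluxTransformer2DModel"],
  "vae": ["diffusers", "AutoencoderKL"]
}
""")


def split_model_index(index):
    """Split metadata keys (leading underscore) from component entries."""
    metadata = {k: v for k, v in index.items() if k.startswith("_")}
    components = {k: tuple(v) for k, v in index.items() if not k.startswith("_")}
    return metadata, components


metadata, components = split_model_index(MODEL_INDEX)
print(metadata["_class_name"])
for name, (library, cls) in components.items():
    print(f"{name:15s} {library}.{cls}")
```

Each component name matches a subfolder added in this commit (`scheduler/`, `text_encoder/`, and so on). This layout is what lets `FluxPipeline.from_pretrained` in diffusers assemble the pipeline from a local checkout or a Hub repository.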

sample_images/flux1-lite-8B_sample.png

ADDED

|

Git LFS Details

|

scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,16 @@

+{
+  "_class_name": "FlowMatchEulerDiscreteScheduler",
+  "_diffusers_version": "0.32.0.dev0",
+  "base_image_seq_len": 256,
+  "base_shift": 0.5,
+  "invert_sigmas": false,
+  "max_image_seq_len": 4096,
+  "max_shift": 1.15,
+  "num_train_timesteps": 1000,
+  "shift": 3.0,
+  "shift_terminal": null,
+  "use_beta_sigmas": false,
+  "use_dynamic_shifting": true,
+  "use_exponential_sigmas": false,
+  "use_karras_sigmas": false
+}

|
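
With `use_dynamic_shifting` enabled, the timestep shift depends on the image token count. The sketch below is my reading of how the diffusers Flux pipeline uses `base_image_seq_len`, `max_image_seq_len`, `base_shift` and `max_shift`: a linear interpolation yields a value `mu`, which is then applied as an exponential time shift. The function names are illustrative, and the formula is an assumption that should be checked against the installed diffusers version.

```python
import math


def calculate_mu(
    image_seq_len,
    base_seq_len=256,    # base_image_seq_len
    max_seq_len=4096,    # max_image_seq_len
    base_shift=0.5,
    max_shift=1.15,
):
    """Linearly interpolate the shift parameter from the token count (assumed form)."""
    slope = (max_shift - base_shift) / (max_seq_len - base_seq_len)
    intercept = base_shift - slope * base_seq_len
    return image_seq_len * slope + intercept


def time_shift(mu, sigma, t):
    """Exponential time shift applied to a sigma value t in (0, 1] (assumed form)."""
    return math.exp(mu) / (math.exp(mu) + (1.0 / t - 1.0) ** sigma)


# Assumption: a 1024x1024 image maps to 64 * 64 = 4096 packed latent tokens.
mu = calculate_mu(4096)
print(f"mu = {mu:.3f}")
print(f"sigma 0.5 shifts to {time_shift(mu, 1.0, 0.5):.3f}")
```

Under these assumptions, smaller images get a `mu` near `base_shift` and larger ones approach `max_shift`, which pushes more of the sampling schedule toward high noise levels.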

text_encoder/config.json

ADDED

|

@@ -0,0 +1,25 @@

+{
+  "_name_or_path": "/mnt/localnvme/huggingface_cache/hub/models--black-forest-labs--FLUX.1-dev/snapshots/0ef5fff789c832c5c7f4e127f94c8b54bbcced44/text_encoder",
+  "architectures": [
+    "CLIPTextModel"
+  ],
+  "attention_dropout": 0.0,
+  "bos_token_id": 0,
+  "dropout": 0.0,
+  "eos_token_id": 2,
+  "hidden_act": "quick_gelu",
+  "hidden_size": 768,
+  "initializer_factor": 1.0,
+  "initializer_range": 0.02,
+  "intermediate_size": 3072,
+  "layer_norm_eps": 1e-05,
+  "max_position_embeddings": 77,
+  "model_type": "clip_text_model",
+  "num_attention_heads": 12,
+  "num_hidden_layers": 12,
+  "pad_token_id": 1,
+  "projection_dim": 768,
+  "torch_dtype": "bfloat16",
+  "transformers_version": "4.47.1",
+  "vocab_size": 49408
+}

|

text_encoder/model.safetensors

ADDED

|

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1
+oid sha256:893d67a23f4693ed42cdab4cbad7fe3e727cf59609c40da28a46b5470f9ed082
+size 246144352

|

text_encoder_2/config.json

ADDED

|

@@ -0,0 +1,32 @@

+{
+  "_name_or_path": "/mnt/localnvme/huggingface_cache/hub/models--black-forest-labs--FLUX.1-dev/snapshots/0ef5fff789c832c5c7f4e127f94c8b54bbcced44/text_encoder_2",
+  "architectures": [
+    "T5EncoderModel"
+  ],
+  "classifier_dropout": 0.0,
+  "d_ff": 10240,
+  "d_kv": 64,
+  "d_model": 4096,
+  "decoder_start_token_id": 0,
+  "dense_act_fn": "gelu_new",
+  "dropout_rate": 0.1,
+  "eos_token_id": 1,
+  "feed_forward_proj": "gated-gelu",
+  "initializer_factor": 1.0,
+  "is_encoder_decoder": true,
+  "is_gated_act": true,
+  "layer_norm_epsilon": 1e-06,
+  "model_type": "t5",
+  "num_decoder_layers": 24,
+  "num_heads": 64,
+  "num_layers": 24,
+  "output_past": true,
+  "pad_token_id": 0,
+  "relative_attention_max_distance": 128,
+  "relative_attention_num_buckets": 32,
+  "tie_word_embeddings": false,
+  "torch_dtype": "bfloat16",
+  "transformers_version": "4.47.1",
+  "use_cache": true,
+  "vocab_size": 32128
+}

|

text_encoder_2/model-00001-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1
+oid sha256:ec87bffd1923e8b2774a6d240c922a41f6143081d52cf83b8fe39e9d838c893e
+size 4994582224

|

text_encoder_2/model-00002-of-00002.safetensors

ADDED

|

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1
+oid sha256:a5640855b301fcdbceddfa90ae8066cd9414aff020552a201a255ecf2059da00
+size 4530066360

|
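
The two LFS pointers for `text_encoder_2` give the shard sizes, so a back-of-the-envelope parameter count is possible. This sketch assumes every tensor is stored as `bfloat16` (2 bytes), as `torch_dtype` in the config suggests, and ignores the small safetensors header in each shard, so the result is only an estimate.

```python
# Shard sizes in bytes, taken from the `size` fields of the two LFS pointers.
shard_sizes = {
    "model-00001-of-00002.safetensors": 4994582224,
    "model-00002-of-00002.safetensors": 4530066360,
}

BYTES_PER_BF16 = 2  # assumption: all weights are stored as bfloat16

total_bytes = sum(shard_sizes.values())
approx_params = total_bytes // BYTES_PER_BF16

print(f"total size : {total_bytes / 2**30:.2f} GiB")
print(f"parameters : about {approx_params / 1e9:.2f} billion")
```

The estimate lands near 4.8 billion parameters for the T5 encoder, which is in line with the sizes in `text_encoder_2/config.json` (`d_model` 4096, 24 layers).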

text_encoder_2/model.safetensors.index.json

ADDED

|

@@ -0,0 +1,226 @@

|
