Upload 9 files

Browse files- README.md +68 -0

- cocom.png +0 -0

- config.json +25 -0

- generation_config.json +5 -0

- model-00001-of-00003.safetensors +3 -0

- model-00002-of-00003.safetensors +3 -0

- model-00003-of-00003.safetensors +3 -0

- model.safetensors.index.json +746 -0

- modeling_cocom.py +306 -0

README.md

ADDED

|

@@ -0,0 +1,68 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

library_name: transformers

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

base_model:

|

| 6 |

+

- mistralai/Mistral-7B-Instruct-v0.2

|

| 7 |

+

---

|

| 8 |

+

|

| 9 |

+

# Summary

|

| 10 |

+

|

| 11 |

+

<!-- Provide a quick summary of what the model is/does. -->

|

| 12 |

+

|

| 13 |

+

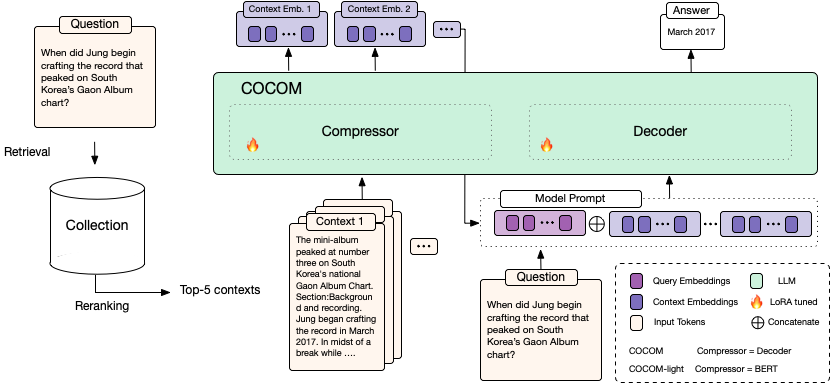

COCOM is an effective context compression method, reducing long contexts to only a handful of Context Embeddings speeding up the generation time for Question Answering

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

## Context Embeddings for Efficient Answer Generation in RAG

|

| 18 |

+

|

| 19 |

+

|

| 20 |

+

*Retrieval-Augmented Generation* (RAG) allows overcoming the limited knowledge of LLMs by extending the input with external context. A major drawback in RAG is the considerable increase in decoding time with longer inputs. We address this challenge by presenting **COCOM**, an effective context compression method, reducing long contexts to only a handful of *Context Embeddings* speeding up the generation time. Our method allows for different compression rates trading off decoding time for answer quality. Compared to earlier methods, COCOM allows for handling multiple contexts more effectively, significantly reducing decoding time for long inputs. Our method demonstrates a speed-up of up to 5.69 times while achieving higher performance compared to existing efficient context compression methods.

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

## Model Inference

|

| 24 |

+

|

| 25 |

+

For batch processing, the model takes as input

|

| 26 |

+

|

| 27 |

+

- `questions` (`list`): A list containing questions

|

| 28 |

+

- `contexts` (`list of lists`). For each question a list of contexts, where the number of contexts is fixed throughout questions. The models have been fine-tuned (and should be inferenced) with `5` contexts.

|

| 29 |

+

|

| 30 |

+

The model compresses the questions into context embeddings and answers the question based on the provided context embeddings.

|

| 31 |

+

|

| 32 |

+

```python

|

| 33 |

+

from transformers import AutoModel

|

| 34 |

+

|

| 35 |

+

model = AutoModel.from_pretrained('naver/cocom-v1-4-mistral-7b', trust_remote_code=True)

|

| 36 |

+

model = model.to('cuda')

|

| 37 |

+

contexts = [[

|

| 38 |

+

'Rosalind Bailey. Rosalind Bailey Rosalind Bailey (born 1946) is a British actress, known for her portrayal of Sarah Headley ("née" Lytton) in the 1970s and 1980s BBC television drama “When the Boat Comes In". Bailey has appeared in numerous British television drama series, including "Byker Grove", “Distant Shores" and "Burn Up". Her stage work includes playing Miss Mary Shepherd in Alan Bennett’s play "The Lady in the Van”.',

|

| 39 |

+

'Malcolm Terris. Malcolm Terris Malcolm Terris (born 11 January 1941 in Sunderland, County Durham) is a British actor. He had a lengthy career in a large number of television programmes. Possibly his best-known role was in "When the Boat Comes In", a popular 1970s series, where he played the part of Matt Headley. His film career includes appearances in "The First Great Train Robbery" (1978), "McVicar" (1980), "The Plague Dogs" (1982, voice only), "Slayground" (1983), “The Bounty" (1984) as Thomas Huggan, ship’s surgeon, "Mata Hari" (1985), "Revolution" (1985), “Scandal" (1989), and “Chaplin” (1992). His TV appearances include: One episode of',

|

| 40 |

+

'When the Boat Comes In. When the Boat Comes In When the Boat Comes In is a British television period drama produced by the BBC between 1976 and 1981. The series stars James Bolam as Jack Ford, a First World War veteran who returns to his poverty-stricken (fictional) town of Gallowshield in the North East of England. The series dramatises the political struggles of the 1920s and 1930s and explores the impact of national and international politics upon Ford and the people around him. Section:Production. The majority of episodes were written by creator James Mitchell, but in Series 1 north-eastern',

|

| 41 |

+

'Susie Youssef. Youssef began her comedy career as a writer for "The Ronnie Johns Half Hour" in 2006, and made her acting debut in the short film "Clicked" in the role of Lina in 2011. In 2014, she played Jane in the short film "Kevin Needs to Make New Friends: Because Everyone Hates Him for Some Reason" and then turned to television where she appeared in "The Chaser’s Media Circus". In 2014, Youssef played the lead role of Sarah in the Hayloft Project’s stage play "The Boat People" which won the Best On Stage award at the FBi SMAC Awards',

|

| 42 |

+

'Madelaine Newton. Madelaine Newton Madelaine Newton is a British actress best known for her portrayal of Dolly in 1970s BBC television drama "When the Boat Comes In". She is married to actor Kevin Whately, known for his role as Robert "Robbie" Lewis in both "Inspector Morse” and its spin-off "Lewis". They have two children. She starred alongside her husband in the “Inspector Morse" episode "Masonic Mysteries" as Beryl Newsome - the love-interest of Morse - whom Morse was wrongly suspected of murdering. She played Whately’s on-screen wife in the 1988 Look and Read children’s serial, Geordie Racer. She also made'

|

| 43 |

+

]]

|

| 44 |

+

questions = ['who played sarah hedley in when the boat comes in?']

|

| 45 |

+

|

| 46 |

+

answers = model.generate_from_text(contexts=contexts, questions=questions, max_new_tokens=128)

|

| 47 |

+

|

| 48 |

+

print(answers)

|

| 49 |

+

```

|

| 50 |

+

|

| 51 |

+

|

| 52 |

+

|

| 53 |

+

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

|

| 54 |

+

|

| 55 |

+

**References:**

|

| 56 |

+

|

| 57 |

+

**Paper**: https://arxiv.org/pdf/2407.09252

|

| 58 |

+

```

|

| 59 |

+

@misc{rau2024contextembeddingsefficientanswer,

|

| 60 |

+

title={Context Embeddings for Efficient Answer Generation in RAG},

|

| 61 |

+

author={David Rau and Shuai Wang and Hervé Déjean and Stéphane Clinchant},

|

| 62 |

+

year={2024},

|

| 63 |

+

eprint={2407.09252},

|

| 64 |

+

archivePrefix={arXiv},

|

| 65 |

+

primaryClass={cs.CL},

|

| 66 |

+

url={https://arxiv.org/abs/2407.09252},

|

| 67 |

+

}

|

| 68 |

+

```

|

cocom.png

ADDED

|

config.json

ADDED

|

@@ -0,0 +1,25 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "/scratch/1/user/sclincha/cocom_release/cocom-v1-4-mistral-7b/",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"COCOM"

|

| 5 |

+

],

|

| 6 |

+

"auto_map": {

|

| 7 |

+

"AutoConfig": "modeling_cocom.COCOMConfig",

|

| 8 |

+

"AutoModel": "modeling_cocom.COCOM",

|

| 9 |

+

"AutoModelForCausalLM": "modeling_cocom.COCOM"

|

| 10 |

+

},

|

| 11 |

+

"compr_linear_type": "concat",

|

| 12 |

+

"compr_model_name": null,

|

| 13 |

+

"compr_rate": 4,

|

| 14 |

+

"decoder_model_name": "mistralai/Mistral-7B-Instruct-v0.2",

|

| 15 |

+

"generation_top_k": 5,

|

| 16 |

+

"lora": true,

|

| 17 |

+

"lora_r": 16,

|

| 18 |

+

"max_new_tokens": 128,

|

| 19 |

+

"model_type": "COCOM",

|

| 20 |

+

"quantization": "no",

|

| 21 |

+

"sep": false,

|

| 22 |

+

"torch_dtype": "bfloat16",

|

| 23 |

+

"training_form": "both",

|

| 24 |

+

"transformers_version": "4.45.2"

|

| 25 |

+

}

|

generation_config.json

ADDED

|

@@ -0,0 +1,5 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"max_new_tokens": 128,

|

| 4 |

+

"transformers_version": "4.45.2"

|

| 5 |

+

}

|

model-00001-of-00003.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0fe5aba54200930a590d773567a042402a03f6e313f6bdfa19b7c7aeb7014b06

|

| 3 |

+

size 4971467720

|

model-00002-of-00003.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:502881d0b08de90d4a8e29665b253a4dd691a71c769a19e77b2de1cd9686a987

|

| 3 |

+

size 4996308792

|

model-00003-of-00003.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4dd7504c0fe0be1055012a2a8eb5f728e23c9240bbf6c8381c20fcf31585d440

|

| 3 |

+

size 4599751896

|

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,746 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 14567415808

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"decoder.base_model.model.lm_head.weight": "model-00003-of-00003.safetensors",

|

| 7 |

+

"decoder.base_model.model.model.embed_tokens.weight": "model-00001-of-00003.safetensors",

|

| 8 |

+

"decoder.base_model.model.model.layers.0.input_layernorm.weight": "model-00001-of-00003.safetensors",

|

| 9 |

+

"decoder.base_model.model.model.layers.0.mlp.down_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 10 |

+

"decoder.base_model.model.model.layers.0.mlp.down_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 11 |

+

"decoder.base_model.model.model.layers.0.mlp.down_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 12 |

+

"decoder.base_model.model.model.layers.0.mlp.gate_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 13 |

+

"decoder.base_model.model.model.layers.0.mlp.gate_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 14 |

+

"decoder.base_model.model.model.layers.0.mlp.gate_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 15 |

+

"decoder.base_model.model.model.layers.0.mlp.up_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 16 |

+

"decoder.base_model.model.model.layers.0.mlp.up_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 17 |

+

"decoder.base_model.model.model.layers.0.mlp.up_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 18 |

+

"decoder.base_model.model.model.layers.0.post_attention_layernorm.weight": "model-00001-of-00003.safetensors",

|

| 19 |

+

"decoder.base_model.model.model.layers.0.self_attn.k_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 20 |

+

"decoder.base_model.model.model.layers.0.self_attn.k_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 21 |

+

"decoder.base_model.model.model.layers.0.self_attn.k_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 22 |

+

"decoder.base_model.model.model.layers.0.self_attn.o_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 23 |

+

"decoder.base_model.model.model.layers.0.self_attn.o_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 24 |

+

"decoder.base_model.model.model.layers.0.self_attn.o_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 25 |

+

"decoder.base_model.model.model.layers.0.self_attn.q_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 26 |

+

"decoder.base_model.model.model.layers.0.self_attn.q_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 27 |

+

"decoder.base_model.model.model.layers.0.self_attn.q_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 28 |

+

"decoder.base_model.model.model.layers.0.self_attn.v_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 29 |

+

"decoder.base_model.model.model.layers.0.self_attn.v_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 30 |

+

"decoder.base_model.model.model.layers.0.self_attn.v_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 31 |

+

"decoder.base_model.model.model.layers.1.input_layernorm.weight": "model-00001-of-00003.safetensors",

|

| 32 |

+

"decoder.base_model.model.model.layers.1.mlp.down_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 33 |

+

"decoder.base_model.model.model.layers.1.mlp.down_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 34 |

+

"decoder.base_model.model.model.layers.1.mlp.down_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 35 |

+

"decoder.base_model.model.model.layers.1.mlp.gate_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 36 |

+

"decoder.base_model.model.model.layers.1.mlp.gate_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 37 |

+

"decoder.base_model.model.model.layers.1.mlp.gate_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 38 |

+

"decoder.base_model.model.model.layers.1.mlp.up_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 39 |

+

"decoder.base_model.model.model.layers.1.mlp.up_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 40 |

+

"decoder.base_model.model.model.layers.1.mlp.up_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 41 |

+

"decoder.base_model.model.model.layers.1.post_attention_layernorm.weight": "model-00001-of-00003.safetensors",

|

| 42 |

+

"decoder.base_model.model.model.layers.1.self_attn.k_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 43 |

+

"decoder.base_model.model.model.layers.1.self_attn.k_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 44 |

+

"decoder.base_model.model.model.layers.1.self_attn.k_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 45 |

+

"decoder.base_model.model.model.layers.1.self_attn.o_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 46 |

+

"decoder.base_model.model.model.layers.1.self_attn.o_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 47 |

+

"decoder.base_model.model.model.layers.1.self_attn.o_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 48 |

+

"decoder.base_model.model.model.layers.1.self_attn.q_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 49 |

+

"decoder.base_model.model.model.layers.1.self_attn.q_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 50 |

+

"decoder.base_model.model.model.layers.1.self_attn.q_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 51 |

+

"decoder.base_model.model.model.layers.1.self_attn.v_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 52 |

+

"decoder.base_model.model.model.layers.1.self_attn.v_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 53 |

+

"decoder.base_model.model.model.layers.1.self_attn.v_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 54 |

+

"decoder.base_model.model.model.layers.10.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 55 |

+

"decoder.base_model.model.model.layers.10.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 56 |

+

"decoder.base_model.model.model.layers.10.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 57 |

+

"decoder.base_model.model.model.layers.10.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 58 |

+

"decoder.base_model.model.model.layers.10.mlp.gate_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 59 |

+

"decoder.base_model.model.model.layers.10.mlp.gate_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 60 |

+

"decoder.base_model.model.model.layers.10.mlp.gate_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 61 |

+

"decoder.base_model.model.model.layers.10.mlp.up_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 62 |

+

"decoder.base_model.model.model.layers.10.mlp.up_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 63 |

+

"decoder.base_model.model.model.layers.10.mlp.up_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 64 |

+

"decoder.base_model.model.model.layers.10.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 65 |

+

"decoder.base_model.model.model.layers.10.self_attn.k_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 66 |

+

"decoder.base_model.model.model.layers.10.self_attn.k_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 67 |

+

"decoder.base_model.model.model.layers.10.self_attn.k_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 68 |

+

"decoder.base_model.model.model.layers.10.self_attn.o_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 69 |

+

"decoder.base_model.model.model.layers.10.self_attn.o_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 70 |

+

"decoder.base_model.model.model.layers.10.self_attn.o_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 71 |

+

"decoder.base_model.model.model.layers.10.self_attn.q_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 72 |

+

"decoder.base_model.model.model.layers.10.self_attn.q_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 73 |

+

"decoder.base_model.model.model.layers.10.self_attn.q_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 74 |

+

"decoder.base_model.model.model.layers.10.self_attn.v_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 75 |

+

"decoder.base_model.model.model.layers.10.self_attn.v_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 76 |

+

"decoder.base_model.model.model.layers.10.self_attn.v_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 77 |

+

"decoder.base_model.model.model.layers.11.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 78 |

+

"decoder.base_model.model.model.layers.11.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 79 |

+

"decoder.base_model.model.model.layers.11.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 80 |

+

"decoder.base_model.model.model.layers.11.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 81 |

+

"decoder.base_model.model.model.layers.11.mlp.gate_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 82 |

+

"decoder.base_model.model.model.layers.11.mlp.gate_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 83 |

+

"decoder.base_model.model.model.layers.11.mlp.gate_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 84 |

+

"decoder.base_model.model.model.layers.11.mlp.up_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 85 |

+

"decoder.base_model.model.model.layers.11.mlp.up_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 86 |

+

"decoder.base_model.model.model.layers.11.mlp.up_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 87 |

+

"decoder.base_model.model.model.layers.11.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 88 |

+

"decoder.base_model.model.model.layers.11.self_attn.k_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 89 |

+

"decoder.base_model.model.model.layers.11.self_attn.k_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 90 |

+

"decoder.base_model.model.model.layers.11.self_attn.k_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 91 |

+

"decoder.base_model.model.model.layers.11.self_attn.o_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 92 |

+

"decoder.base_model.model.model.layers.11.self_attn.o_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 93 |

+

"decoder.base_model.model.model.layers.11.self_attn.o_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 94 |

+

"decoder.base_model.model.model.layers.11.self_attn.q_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 95 |

+

"decoder.base_model.model.model.layers.11.self_attn.q_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 96 |

+

"decoder.base_model.model.model.layers.11.self_attn.q_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 97 |

+

"decoder.base_model.model.model.layers.11.self_attn.v_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 98 |

+

"decoder.base_model.model.model.layers.11.self_attn.v_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 99 |

+

"decoder.base_model.model.model.layers.11.self_attn.v_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 100 |

+

"decoder.base_model.model.model.layers.12.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 101 |

+

"decoder.base_model.model.model.layers.12.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 102 |

+

"decoder.base_model.model.model.layers.12.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 103 |

+

"decoder.base_model.model.model.layers.12.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 104 |

+

"decoder.base_model.model.model.layers.12.mlp.gate_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 105 |

+

"decoder.base_model.model.model.layers.12.mlp.gate_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 106 |

+

"decoder.base_model.model.model.layers.12.mlp.gate_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 107 |

+

"decoder.base_model.model.model.layers.12.mlp.up_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 108 |

+

"decoder.base_model.model.model.layers.12.mlp.up_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 109 |

+

"decoder.base_model.model.model.layers.12.mlp.up_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 110 |

+

"decoder.base_model.model.model.layers.12.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 111 |

+

"decoder.base_model.model.model.layers.12.self_attn.k_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 112 |

+

"decoder.base_model.model.model.layers.12.self_attn.k_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 113 |

+

"decoder.base_model.model.model.layers.12.self_attn.k_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 114 |

+

"decoder.base_model.model.model.layers.12.self_attn.o_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 115 |

+

"decoder.base_model.model.model.layers.12.self_attn.o_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 116 |

+

"decoder.base_model.model.model.layers.12.self_attn.o_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 117 |

+

"decoder.base_model.model.model.layers.12.self_attn.q_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 118 |

+

"decoder.base_model.model.model.layers.12.self_attn.q_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 119 |

+

"decoder.base_model.model.model.layers.12.self_attn.q_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 120 |

+

"decoder.base_model.model.model.layers.12.self_attn.v_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 121 |

+

"decoder.base_model.model.model.layers.12.self_attn.v_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 122 |

+

"decoder.base_model.model.model.layers.12.self_attn.v_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 123 |

+

"decoder.base_model.model.model.layers.13.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 124 |

+

"decoder.base_model.model.model.layers.13.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 125 |

+

"decoder.base_model.model.model.layers.13.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 126 |

+

"decoder.base_model.model.model.layers.13.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 127 |

+

"decoder.base_model.model.model.layers.13.mlp.gate_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 128 |

+

"decoder.base_model.model.model.layers.13.mlp.gate_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 129 |

+

"decoder.base_model.model.model.layers.13.mlp.gate_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 130 |

+

"decoder.base_model.model.model.layers.13.mlp.up_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 131 |

+

"decoder.base_model.model.model.layers.13.mlp.up_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 132 |

+

"decoder.base_model.model.model.layers.13.mlp.up_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 133 |

+

"decoder.base_model.model.model.layers.13.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 134 |

+

"decoder.base_model.model.model.layers.13.self_attn.k_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 135 |

+

"decoder.base_model.model.model.layers.13.self_attn.k_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 136 |

+

"decoder.base_model.model.model.layers.13.self_attn.k_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 137 |

+

"decoder.base_model.model.model.layers.13.self_attn.o_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 138 |

+

"decoder.base_model.model.model.layers.13.self_attn.o_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 139 |

+

"decoder.base_model.model.model.layers.13.self_attn.o_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 140 |

+

"decoder.base_model.model.model.layers.13.self_attn.q_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 141 |

+

"decoder.base_model.model.model.layers.13.self_attn.q_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 142 |

+

"decoder.base_model.model.model.layers.13.self_attn.q_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 143 |

+

"decoder.base_model.model.model.layers.13.self_attn.v_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 144 |

+

"decoder.base_model.model.model.layers.13.self_attn.v_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 145 |

+

"decoder.base_model.model.model.layers.13.self_attn.v_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 146 |

+

"decoder.base_model.model.model.layers.14.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 147 |

+

"decoder.base_model.model.model.layers.14.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 148 |

+

"decoder.base_model.model.model.layers.14.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 149 |

+

"decoder.base_model.model.model.layers.14.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 150 |

+

"decoder.base_model.model.model.layers.14.mlp.gate_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 151 |

+

"decoder.base_model.model.model.layers.14.mlp.gate_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 152 |

+

"decoder.base_model.model.model.layers.14.mlp.gate_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 153 |

+

"decoder.base_model.model.model.layers.14.mlp.up_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 154 |

+

"decoder.base_model.model.model.layers.14.mlp.up_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 155 |

+

"decoder.base_model.model.model.layers.14.mlp.up_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 156 |

+

"decoder.base_model.model.model.layers.14.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 157 |

+

"decoder.base_model.model.model.layers.14.self_attn.k_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 158 |

+

"decoder.base_model.model.model.layers.14.self_attn.k_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 159 |

+

"decoder.base_model.model.model.layers.14.self_attn.k_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 160 |

+

"decoder.base_model.model.model.layers.14.self_attn.o_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 161 |

+

"decoder.base_model.model.model.layers.14.self_attn.o_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 162 |

+

"decoder.base_model.model.model.layers.14.self_attn.o_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 163 |

+

"decoder.base_model.model.model.layers.14.self_attn.q_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 164 |

+

"decoder.base_model.model.model.layers.14.self_attn.q_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 165 |

+

"decoder.base_model.model.model.layers.14.self_attn.q_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 166 |

+

"decoder.base_model.model.model.layers.14.self_attn.v_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 167 |

+

"decoder.base_model.model.model.layers.14.self_attn.v_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 168 |

+

"decoder.base_model.model.model.layers.14.self_attn.v_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 169 |

+

"decoder.base_model.model.model.layers.15.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 170 |

+

"decoder.base_model.model.model.layers.15.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 171 |

+

"decoder.base_model.model.model.layers.15.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 172 |

+

"decoder.base_model.model.model.layers.15.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 173 |

+

"decoder.base_model.model.model.layers.15.mlp.gate_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 174 |

+

"decoder.base_model.model.model.layers.15.mlp.gate_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 175 |

+

"decoder.base_model.model.model.layers.15.mlp.gate_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 176 |

+

"decoder.base_model.model.model.layers.15.mlp.up_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 177 |

+

"decoder.base_model.model.model.layers.15.mlp.up_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 178 |

+

"decoder.base_model.model.model.layers.15.mlp.up_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 179 |

+

"decoder.base_model.model.model.layers.15.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 180 |

+

"decoder.base_model.model.model.layers.15.self_attn.k_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 181 |

+

"decoder.base_model.model.model.layers.15.self_attn.k_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 182 |

+

"decoder.base_model.model.model.layers.15.self_attn.k_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 183 |

+

"decoder.base_model.model.model.layers.15.self_attn.o_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 184 |

+

"decoder.base_model.model.model.layers.15.self_attn.o_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 185 |

+

"decoder.base_model.model.model.layers.15.self_attn.o_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 186 |

+

"decoder.base_model.model.model.layers.15.self_attn.q_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 187 |

+

"decoder.base_model.model.model.layers.15.self_attn.q_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 188 |

+

"decoder.base_model.model.model.layers.15.self_attn.q_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 189 |

+

"decoder.base_model.model.model.layers.15.self_attn.v_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 190 |

+

"decoder.base_model.model.model.layers.15.self_attn.v_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 191 |

+

"decoder.base_model.model.model.layers.15.self_attn.v_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 192 |

+

"decoder.base_model.model.model.layers.16.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 193 |

+

"decoder.base_model.model.model.layers.16.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 194 |

+

"decoder.base_model.model.model.layers.16.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 195 |

+

"decoder.base_model.model.model.layers.16.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 196 |

+

"decoder.base_model.model.model.layers.16.mlp.gate_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 197 |

+

"decoder.base_model.model.model.layers.16.mlp.gate_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 198 |

+

"decoder.base_model.model.model.layers.16.mlp.gate_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 199 |

+

"decoder.base_model.model.model.layers.16.mlp.up_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 200 |

+

"decoder.base_model.model.model.layers.16.mlp.up_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 201 |

+

"decoder.base_model.model.model.layers.16.mlp.up_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 202 |

+

"decoder.base_model.model.model.layers.16.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 203 |

+

"decoder.base_model.model.model.layers.16.self_attn.k_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 204 |

+

"decoder.base_model.model.model.layers.16.self_attn.k_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 205 |

+

"decoder.base_model.model.model.layers.16.self_attn.k_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 206 |

+

"decoder.base_model.model.model.layers.16.self_attn.o_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 207 |

+

"decoder.base_model.model.model.layers.16.self_attn.o_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 208 |

+

"decoder.base_model.model.model.layers.16.self_attn.o_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 209 |

+

"decoder.base_model.model.model.layers.16.self_attn.q_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 210 |

+

"decoder.base_model.model.model.layers.16.self_attn.q_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 211 |

+

"decoder.base_model.model.model.layers.16.self_attn.q_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 212 |

+

"decoder.base_model.model.model.layers.16.self_attn.v_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 213 |

+

"decoder.base_model.model.model.layers.16.self_attn.v_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 214 |

+

"decoder.base_model.model.model.layers.16.self_attn.v_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 215 |

+

"decoder.base_model.model.model.layers.17.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 216 |

+

"decoder.base_model.model.model.layers.17.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 217 |

+

"decoder.base_model.model.model.layers.17.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 218 |

+

"decoder.base_model.model.model.layers.17.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 219 |

+

"decoder.base_model.model.model.layers.17.mlp.gate_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 220 |

+

"decoder.base_model.model.model.layers.17.mlp.gate_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 221 |

+

"decoder.base_model.model.model.layers.17.mlp.gate_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 222 |

+

"decoder.base_model.model.model.layers.17.mlp.up_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 223 |

+

"decoder.base_model.model.model.layers.17.mlp.up_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 224 |

+

"decoder.base_model.model.model.layers.17.mlp.up_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 225 |

+

"decoder.base_model.model.model.layers.17.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 226 |

+

"decoder.base_model.model.model.layers.17.self_attn.k_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 227 |

+

"decoder.base_model.model.model.layers.17.self_attn.k_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 228 |

+

"decoder.base_model.model.model.layers.17.self_attn.k_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 229 |

+

"decoder.base_model.model.model.layers.17.self_attn.o_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 230 |

+

"decoder.base_model.model.model.layers.17.self_attn.o_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 231 |

+

"decoder.base_model.model.model.layers.17.self_attn.o_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 232 |

+

"decoder.base_model.model.model.layers.17.self_attn.q_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 233 |

+

"decoder.base_model.model.model.layers.17.self_attn.q_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 234 |

+

"decoder.base_model.model.model.layers.17.self_attn.q_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 235 |

+

"decoder.base_model.model.model.layers.17.self_attn.v_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 236 |

+

"decoder.base_model.model.model.layers.17.self_attn.v_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 237 |

+

"decoder.base_model.model.model.layers.17.self_attn.v_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 238 |

+

"decoder.base_model.model.model.layers.18.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 239 |

+

"decoder.base_model.model.model.layers.18.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 240 |

+

"decoder.base_model.model.model.layers.18.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 241 |

+

"decoder.base_model.model.model.layers.18.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 242 |

+

"decoder.base_model.model.model.layers.18.mlp.gate_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 243 |

+

"decoder.base_model.model.model.layers.18.mlp.gate_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 244 |

+

"decoder.base_model.model.model.layers.18.mlp.gate_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 245 |

+

"decoder.base_model.model.model.layers.18.mlp.up_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 246 |

+

"decoder.base_model.model.model.layers.18.mlp.up_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 247 |

+

"decoder.base_model.model.model.layers.18.mlp.up_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 248 |

+

"decoder.base_model.model.model.layers.18.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 249 |

+

"decoder.base_model.model.model.layers.18.self_attn.k_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 250 |

+

"decoder.base_model.model.model.layers.18.self_attn.k_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 251 |

+

"decoder.base_model.model.model.layers.18.self_attn.k_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 252 |

+

"decoder.base_model.model.model.layers.18.self_attn.o_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 253 |

+

"decoder.base_model.model.model.layers.18.self_attn.o_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 254 |

+

"decoder.base_model.model.model.layers.18.self_attn.o_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 255 |

+

"decoder.base_model.model.model.layers.18.self_attn.q_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 256 |

+

"decoder.base_model.model.model.layers.18.self_attn.q_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 257 |

+

"decoder.base_model.model.model.layers.18.self_attn.q_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 258 |

+

"decoder.base_model.model.model.layers.18.self_attn.v_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 259 |

+

"decoder.base_model.model.model.layers.18.self_attn.v_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 260 |

+

"decoder.base_model.model.model.layers.18.self_attn.v_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 261 |

+

"decoder.base_model.model.model.layers.19.input_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 262 |

+

"decoder.base_model.model.model.layers.19.mlp.down_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 263 |

+

"decoder.base_model.model.model.layers.19.mlp.down_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 264 |

+

"decoder.base_model.model.model.layers.19.mlp.down_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 265 |

+

"decoder.base_model.model.model.layers.19.mlp.gate_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 266 |

+

"decoder.base_model.model.model.layers.19.mlp.gate_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 267 |

+

"decoder.base_model.model.model.layers.19.mlp.gate_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 268 |

+

"decoder.base_model.model.model.layers.19.mlp.up_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 269 |

+

"decoder.base_model.model.model.layers.19.mlp.up_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 270 |

+

"decoder.base_model.model.model.layers.19.mlp.up_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 271 |

+

"decoder.base_model.model.model.layers.19.post_attention_layernorm.weight": "model-00002-of-00003.safetensors",

|

| 272 |

+

"decoder.base_model.model.model.layers.19.self_attn.k_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 273 |

+

"decoder.base_model.model.model.layers.19.self_attn.k_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 274 |

+

"decoder.base_model.model.model.layers.19.self_attn.k_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 275 |

+

"decoder.base_model.model.model.layers.19.self_attn.o_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 276 |

+

"decoder.base_model.model.model.layers.19.self_attn.o_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 277 |

+

"decoder.base_model.model.model.layers.19.self_attn.o_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 278 |

+

"decoder.base_model.model.model.layers.19.self_attn.q_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 279 |

+

"decoder.base_model.model.model.layers.19.self_attn.q_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 280 |

+

"decoder.base_model.model.model.layers.19.self_attn.q_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 281 |

+

"decoder.base_model.model.model.layers.19.self_attn.v_proj.base_layer.weight": "model-00002-of-00003.safetensors",

|

| 282 |

+

"decoder.base_model.model.model.layers.19.self_attn.v_proj.lora_A.default.weight": "model-00002-of-00003.safetensors",

|

| 283 |

+

"decoder.base_model.model.model.layers.19.self_attn.v_proj.lora_B.default.weight": "model-00002-of-00003.safetensors",

|

| 284 |

+

"decoder.base_model.model.model.layers.2.input_layernorm.weight": "model-00001-of-00003.safetensors",

|

| 285 |

+

"decoder.base_model.model.model.layers.2.mlp.down_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 286 |

+

"decoder.base_model.model.model.layers.2.mlp.down_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 287 |

+

"decoder.base_model.model.model.layers.2.mlp.down_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 288 |

+

"decoder.base_model.model.model.layers.2.mlp.gate_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 289 |

+

"decoder.base_model.model.model.layers.2.mlp.gate_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 290 |

+

"decoder.base_model.model.model.layers.2.mlp.gate_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 291 |

+

"decoder.base_model.model.model.layers.2.mlp.up_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 292 |

+

"decoder.base_model.model.model.layers.2.mlp.up_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 293 |

+

"decoder.base_model.model.model.layers.2.mlp.up_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 294 |

+

"decoder.base_model.model.model.layers.2.post_attention_layernorm.weight": "model-00001-of-00003.safetensors",

|

| 295 |

+

"decoder.base_model.model.model.layers.2.self_attn.k_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 296 |

+

"decoder.base_model.model.model.layers.2.self_attn.k_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 297 |

+

"decoder.base_model.model.model.layers.2.self_attn.k_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 298 |

+

"decoder.base_model.model.model.layers.2.self_attn.o_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 299 |

+

"decoder.base_model.model.model.layers.2.self_attn.o_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 300 |

+

"decoder.base_model.model.model.layers.2.self_attn.o_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 301 |

+

"decoder.base_model.model.model.layers.2.self_attn.q_proj.base_layer.weight": "model-00001-of-00003.safetensors",

|

| 302 |

+

"decoder.base_model.model.model.layers.2.self_attn.q_proj.lora_A.default.weight": "model-00001-of-00003.safetensors",

|

| 303 |

+

"decoder.base_model.model.model.layers.2.self_attn.q_proj.lora_B.default.weight": "model-00001-of-00003.safetensors",

|

| 304 |

+