---

license: apache-2.0
datasets:
- totally-not-an-llm/EverythingLM-data-V3
language:
- en
tags:
- openllama
- 3b

---

Trained for 3 epochs on the EverythingLM data.

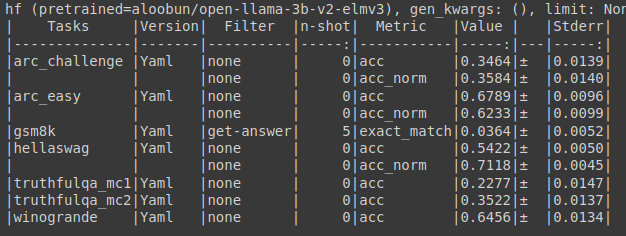

Eval results:

I usually tweak models smaller than 3B and mix LoRAs, but now I'm trying my hand at fine-tuning a 3B model. Let's see how it goes.