Upload folder using huggingface_hub

- .gitattributes +25 -0

- LICENSE +217 -0

- Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-0.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-0.5.rkllm +3 -0

- Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-1.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8-opt-1-hybrid-ratio-0.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8-opt-1-hybrid-ratio-0.5.rkllm +3 -0

- Marco-o1-rk3588-w8a8-opt-1-hybrid-ratio-1.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g128-opt-0-hybrid-ratio-0.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g128-opt-0-hybrid-ratio-0.5.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g128-opt-0-hybrid-ratio-1.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g128-opt-1-hybrid-ratio-0.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g128-opt-1-hybrid-ratio-0.5.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g128-opt-1-hybrid-ratio-1.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g256-opt-0-hybrid-ratio-0.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g256-opt-0-hybrid-ratio-0.5.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g256-opt-0-hybrid-ratio-1.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g256-opt-1-hybrid-ratio-0.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g256-opt-1-hybrid-ratio-0.5.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g256-opt-1-hybrid-ratio-1.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g512-opt-0-hybrid-ratio-0.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g512-opt-0-hybrid-ratio-0.5.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g512-opt-0-hybrid-ratio-1.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g512-opt-1-hybrid-ratio-0.0.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g512-opt-1-hybrid-ratio-0.5.rkllm +3 -0

- Marco-o1-rk3588-w8a8_g512-opt-1-hybrid-ratio-1.0.rkllm +3 -0

- NOTICE +13 -0

- README.md +148 -0

- assets/img.png +0 -0

- assets/intro_2.jpg +3 -0

- assets/logo.png +0 -0

- assets/results.jpg +0 -0

- assets/translation.jpg +0 -0

- config.json +28 -0

- generation_config.json +14 -0

- merges.txt +0 -0

- model.safetensors.index.json +346 -0

- tokenizer.json +0 -0

- tokenizer_config.json +40 -0

- vocab.json +0 -0

.gitattributes

CHANGED

@@ -33,3 +33,28 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+assets/intro_2.jpg filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-1.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8-opt-1-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8-opt-1-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8-opt-1-hybrid-ratio-1.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g128-opt-0-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g128-opt-0-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g128-opt-0-hybrid-ratio-1.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g128-opt-1-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g128-opt-1-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g128-opt-1-hybrid-ratio-1.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g256-opt-0-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g256-opt-0-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g256-opt-0-hybrid-ratio-1.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g256-opt-1-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g256-opt-1-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g256-opt-1-hybrid-ratio-1.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g512-opt-0-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g512-opt-0-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g512-opt-0-hybrid-ratio-1.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g512-opt-1-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g512-opt-1-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text
+Marco-o1-rk3588-w8a8_g512-opt-1-hybrid-ratio-1.0.rkllm filter=lfs diff=lfs merge=lfs -text
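The 24 `.rkllm` tracking rules added to `.gitattributes` follow a regular naming pattern: 4 quantization types × 2 optimization levels × 3 hybrid ratios. The minimal sketch below regenerates those rules. It assumes that pattern is the whole naming scheme. The helper names `rkllm_filenames` and `lfs_rule` are hypothetical and do not come from any toolkit.

```python
from itertools import product

# Values read off the filenames in this commit; helper names are illustrative only.
QUANT_TYPES = ["w8a8", "w8a8_g128", "w8a8_g256", "w8a8_g512"]
OPT_LEVELS = [0, 1]
HYBRID_RATIOS = ["0.0", "0.5", "1.0"]


def rkllm_filenames(prefix: str = "Marco-o1-rk3588") -> list[str]:
    """Enumerate quant type x opt level x hybrid ratio, in the same order as the diff."""
    return [
        f"{prefix}-{q}-opt-{o}-hybrid-ratio-{r}.rkllm"
        for q, o, r in product(QUANT_TYPES, OPT_LEVELS, HYBRID_RATIOS)
    ]


def lfs_rule(path: str) -> str:
    """Build one .gitattributes line that routes `path` through Git LFS."""
    return f"{path} filter=lfs diff=lfs merge=lfs -text"


if __name__ == "__main__":
    for name in rkllm_filenames():
        print(lfs_rule(name))
```

Because `itertools.product` varies the last factor fastest, the output lists the hybrid ratios innermost. That is the same order as the added lines in the hunk.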

LICENSE

ADDED


@@ -0,0 +1,217 @@


Copyright (C) 2024 AIDC-AI

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.

Copyright 2018- The Hugging Face team. All rights reserved.

Apache License
Version 2.0, January 2004
http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,
and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by
the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all
other entities that control, are controlled by, or are under common
control with that entity. For the purposes of this definition,
"control" means (i) the power, direct or indirect, to cause the
direction or management of such entity, whether by contract or
otherwise, or (ii) ownership of fifty percent (50%) or more of the
outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity
exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,
including but not limited to software source code, documentation
source, and configuration files.

"Object" form shall mean any form resulting from mechanical
transformation or translation of a Source form, including but
not limited to compiled object code, generated documentation,
and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or
Object form, made available under the License, as indicated by a
copyright notice that is included in or attached to the work
(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object
form, that is based on (or derived from) the Work and for which the
editorial revisions, annotations, elaborations, or other modifications
represent, as a whole, an original work of authorship. For the purposes
of this License, Derivative Works shall not include works that remain
separable from, or merely link (or bind by name) to the interfaces of,
the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including
the original version of the Work and any modifications or additions
to that Work or Derivative Works thereof, that is intentionally
submitted to Licensor for inclusion in the Work by the copyright owner
or by an individual or Legal Entity authorized to submit on behalf of
the copyright owner. For the purposes of this definition, "submitted"
means any form of electronic, verbal, or written communication sent
to the Licensor or its representatives, including but not limited to
communication on electronic mailing lists, source code control systems,
and issue tracking systems that are managed by, or on behalf of, the
Licensor for the purpose of discussing and improving the Work, but
excluding communication that is conspicuously marked or otherwise
designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity
on behalf of whom a Contribution has been received by Licensor and
subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of
this License, each Contributor hereby grants to You a perpetual,
worldwide, non-exclusive, no-charge, royalty-free, irrevocable
copyright license to reproduce, prepare Derivative Works of,
publicly display, publicly perform, sublicense, and distribute the
Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of
this License, each Contributor hereby grants to You a perpetual,
worldwide, non-exclusive, no-charge, royalty-free, irrevocable
(except as stated in this section) patent license to make, have made,
use, offer to sell, sell, import, and otherwise transfer the Work,
where such license applies only to those patent claims licensable
by such Contributor that are necessarily infringed by their
Contribution(s) alone or by combination of their Contribution(s)
with the Work to which such Contribution(s) was submitted. If You
institute patent litigation against any entity (including a
cross-claim or counterclaim in a lawsuit) alleging that the Work
or a Contribution incorporated within the Work constitutes direct
or contributory patent infringement, then any patent licenses
granted to You under this License for that Work shall terminate
as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the
Work or Derivative Works thereof in any medium, with or without
modifications, and in Source or Object form, provided that You
meet the following conditions:

(a) You must give any other recipients of the Work or
Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices
stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works
that You distribute, all copyright, patent, trademark, and
attribution notices from the Source form of the Work,
excluding those notices that do not pertain to any part of
the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its
distribution, then any Derivative Works that You distribute must
include a readable copy of the attribution notices contained
within such NOTICE file, excluding those notices that do not
pertain to any part of the Derivative Works, in at least one
of the following places: within a NOTICE text file distributed
as part of the Derivative Works; within the Source form or
documentation, if provided along with the Derivative Works; or,
within a display generated by the Derivative Works, if and
wherever such third-party notices normally appear. The contents
of the NOTICE file are for informational purposes only and
do not modify the License. You may add Your own attribution
notices within Derivative Works that You distribute, alongside
or as an addendum to the NOTICE text from the Work, provided
that such additional attribution notices cannot be construed
as modifying the License.

You may add Your own copyright statement to Your modifications and
may provide additional or different license terms and conditions
for use, reproduction, or distribution of Your modifications, or
for any such Derivative Works as a whole, provided Your use,
reproduction, and distribution of the Work otherwise complies with
the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,
any Contribution intentionally submitted for inclusion in the Work
by You to the Licensor shall be under the terms and conditions of
this License, without any additional terms or conditions.
Notwithstanding the above, nothing herein shall supersede or modify
the terms of any separate license agreement you may have executed
with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade
names, trademarks, service marks, or product names of the Licensor,
except as required for reasonable and customary use in describing the
origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or
agreed to in writing, Licensor provides the Work (and each
Contributor provides its Contributions) on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
implied, including, without limitation, any warranties or conditions
of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
PARTICULAR PURPOSE. You are solely responsible for determining the
appropriateness of using or redistributing the Work and assume any
risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,
whether in tort (including negligence), contract, or otherwise,
unless required by applicable law (such as deliberate and grossly
negligent acts) or agreed to in writing, shall any Contributor be
liable to You for damages, including any direct, indirect, special,
incidental, or consequential damages of any character arising as a
result of this License or out of the use or inability to use the
Work (including but not limited to damages for loss of goodwill,
work stoppage, computer failure or malfunction, or any and all
other commercial damages or losses), even if such Contributor
has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing
the Work or Derivative Works thereof, You may choose to offer,
and charge a fee for, acceptance of support, warranty, indemnity,
or other liability obligations and/or rights consistent with this
License. However, in accepting such obligations, You may act only
on Your own behalf and on Your sole responsibility, not on behalf
of any other Contributor, and only if You agree to indemnify,
defend, and hold each Contributor harmless for any liability
incurred by, or claims asserted against, such Contributor by reason
of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following
boilerplate notice, with the fields enclosed by brackets "[]"
replaced with your own identifying information. (Don't include
the brackets!) The text should be enclosed in the appropriate
comment syntax for the file format. We also recommend that a
file or class name and description of purpose be included on the
same "printed page" as the copyright notice for easier
identification within third-party archives.

Copyright [yyyy] [name of copyright owner]

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.

Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-0.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:5d6f91d72900182310435ce9ca34ec68580fc360463f1390629f4b1d618d2688
size 8193644932
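Each `.rkllm` entry in this commit is a three-line Git LFS pointer with `version`, `oid` and `size` fields. It is not the model itself; the multi-gigabyte blob is stored separately. The minimal Python sketch below parses such a pointer and checks a download against it. It assumes the blob has already been saved to a local path. `parse_lfs_pointer` and `verify_blob` are hypothetical helper names and are not part of `git-lfs` or `huggingface_hub`.

```python
import hashlib
from pathlib import Path


def parse_lfs_pointer(text: str) -> dict[str, str]:
    """Split each 'key value' line of a Git LFS pointer into a dict entry."""
    fields: dict[str, str] = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


def verify_blob(pointer_text: str, blob_path: Path, chunk_size: int = 1 << 20) -> bool:
    """Return True if the file at blob_path matches the pointer's size and sha256 oid."""
    fields = parse_lfs_pointer(pointer_text)
    algo, _, expected_hex = fields["oid"].partition(":")
    if algo != "sha256":
        raise ValueError(f"unsupported oid algorithm: {algo}")
    # Cheap size check first, so a truncated download is rejected without hashing it.
    if blob_path.stat().st_size != int(fields["size"]):
        return False
    digest = hashlib.sha256()
    with blob_path.open("rb") as f:
        # Hash in fixed-size chunks so an ~8 GB model never has to fit in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest() == expected_hex
```

The pointer above could be passed as `pointer_text` together with the path of the downloaded `Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-0.0.rkllm`. The size comparison is only a fast early exit; the sha256 match is the real integrity check.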

Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-0.5.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:5d6f91d72900182310435ce9ca34ec68580fc360463f1390629f4b1d618d2688
size 8193644932

Marco-o1-rk3588-w8a8-opt-0-hybrid-ratio-1.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:5d6f91d72900182310435ce9ca34ec68580fc360463f1390629f4b1d618d2688
size 8193644932

Marco-o1-rk3588-w8a8-opt-1-hybrid-ratio-0.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:dd98e86bb5a0320c83def583f275b12644516d0e1a7214a3c926cccb218f0025
size 8193644932

Marco-o1-rk3588-w8a8-opt-1-hybrid-ratio-0.5.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:dd98e86bb5a0320c83def583f275b12644516d0e1a7214a3c926cccb218f0025
size 8193644932

Marco-o1-rk3588-w8a8-opt-1-hybrid-ratio-1.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:dd98e86bb5a0320c83def583f275b12644516d0e1a7214a3c926cccb218f0025
size 8193644932

Marco-o1-rk3588-w8a8_g128-opt-0-hybrid-ratio-0.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:d7ddc7946f1bea20aec4aa476f9010bf50021ab6dcdce8a8f4489df8d30b28bc
size 8685609764

Marco-o1-rk3588-w8a8_g128-opt-0-hybrid-ratio-0.5.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:d7ddc7946f1bea20aec4aa476f9010bf50021ab6dcdce8a8f4489df8d30b28bc
size 8685609764

Marco-o1-rk3588-w8a8_g128-opt-0-hybrid-ratio-1.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:d7ddc7946f1bea20aec4aa476f9010bf50021ab6dcdce8a8f4489df8d30b28bc
size 8685609764

Marco-o1-rk3588-w8a8_g128-opt-1-hybrid-ratio-0.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:e8c8be2c1e47a93e0b5d6e83fde48feee6ccd23ebad5721eeff5fd7cec40e481
size 8685609764

Marco-o1-rk3588-w8a8_g128-opt-1-hybrid-ratio-0.5.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:e8c8be2c1e47a93e0b5d6e83fde48feee6ccd23ebad5721eeff5fd7cec40e481
size 8685609764

Marco-o1-rk3588-w8a8_g128-opt-1-hybrid-ratio-1.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:e8c8be2c1e47a93e0b5d6e83fde48feee6ccd23ebad5721eeff5fd7cec40e481
size 8685609764

Marco-o1-rk3588-w8a8_g256-opt-0-hybrid-ratio-0.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:a2217bb52c4cdf4426d1d734ce4043b3c9ea57b5d57f699fdb3a95e136005c2b
size 8433069220

Marco-o1-rk3588-w8a8_g256-opt-0-hybrid-ratio-0.5.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:a2217bb52c4cdf4426d1d734ce4043b3c9ea57b5d57f699fdb3a95e136005c2b
size 8433069220

Marco-o1-rk3588-w8a8_g256-opt-0-hybrid-ratio-1.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:a2217bb52c4cdf4426d1d734ce4043b3c9ea57b5d57f699fdb3a95e136005c2b
size 8433069220

Marco-o1-rk3588-w8a8_g256-opt-1-hybrid-ratio-0.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:40a2dfec72f8b269d81fdbdce3e49f9792f1941f1296830499749da340e0d219
size 8433069220

Marco-o1-rk3588-w8a8_g256-opt-1-hybrid-ratio-0.5.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:40a2dfec72f8b269d81fdbdce3e49f9792f1941f1296830499749da340e0d219
size 8433069220

Marco-o1-rk3588-w8a8_g256-opt-1-hybrid-ratio-1.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:40a2dfec72f8b269d81fdbdce3e49f9792f1941f1296830499749da340e0d219
size 8433069220

Marco-o1-rk3588-w8a8_g512-opt-0-hybrid-ratio-0.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:e2ae09226a7143c341d33a6ab187ff877f0b4f256d4c43276fbacbb57122a61c
size 8306798924

Marco-o1-rk3588-w8a8_g512-opt-0-hybrid-ratio-0.5.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:e2ae09226a7143c341d33a6ab187ff877f0b4f256d4c43276fbacbb57122a61c
size 8306798924

Marco-o1-rk3588-w8a8_g512-opt-0-hybrid-ratio-1.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:e2ae09226a7143c341d33a6ab187ff877f0b4f256d4c43276fbacbb57122a61c
size 8306798924

Marco-o1-rk3588-w8a8_g512-opt-1-hybrid-ratio-0.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:20a5c965739aff7c5808ff1c925bc09a6ca5cb941a4eeb1df527fc19bee43c18
size 8306798924

Marco-o1-rk3588-w8a8_g512-opt-1-hybrid-ratio-0.5.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:20a5c965739aff7c5808ff1c925bc09a6ca5cb941a4eeb1df527fc19bee43c18
size 8306798924

Marco-o1-rk3588-w8a8_g512-opt-1-hybrid-ratio-1.0.rkllm

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:20a5c965739aff7c5808ff1c925bc09a6ca5cb941a4eeb1df527fc19bee43c18
size 8306798924

NOTICE

ADDED


@@ -0,0 +1,13 @@


Copyright (C) 2024 AIDC-AI
Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at
http://www.apache.org/licenses/LICENSE-2.0
Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.

This model was trained based on the following model:
Qwen2.5-7B-Instruct (https://huggingface.co/Qwen/Qwen2.5-7B-Instruct), license:(https://huggingface.co/Qwen/Qwen2.5-7B-Instruct/blob/main/LICENSE, SPDX-License-identifier:Apache-2.0)

README.md

ADDED


@@ -0,0 +1,148 @@


---
library_name: transformers
license: apache-2.0
inference: false
---
# Marco-o1-RK3588-1.1.2

This version of Marco-o1 has been converted to run on the RK3588 NPU using `w8a8`, `w8a8_g128`, `w8a8_g256`, and `w8a8_g512` quantization.
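This sketch reads the `_g128`, `_g256` and `_g512` suffixes as group sizes for weight quantization, with plain `w8a8` using one scale per output channel. That reading is an assumption based on common usage of these suffixes; this card does not state it. Under that assumption, a minimal pure-Python sketch of symmetric int8 group-wise quantization shows the trade-off. Smaller groups track outliers more closely, but each group stores its own scale. The sketch illustrates the general technique only and is not RKLLM's kernel or on-disk format. `quantize_groups`, `dequantize_groups` and `total_abs_error` are hypothetical names.

```python
def quantize_groups(weights: list[float], group_size: int) -> tuple[list[int], list[float]]:
    """Symmetric int8 quantization with one scale per contiguous group of weights."""
    if group_size <= 0 or len(weights) % group_size != 0:
        raise ValueError("len(weights) must be a positive multiple of group_size")
    codes: list[int] = []
    scales: list[float] = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        max_abs = max(abs(w) for w in group)
        # Map [-max_abs, max_abs] onto [-127, 127]; an all-zero group gets scale 1.
        scale = max_abs / 127 if max_abs > 0 else 1.0
        scales.append(scale)
        codes.extend(max(-127, min(127, round(w / scale))) for w in group)
    return codes, scales


def dequantize_groups(codes: list[int], scales: list[float], group_size: int) -> list[float]:
    """Approximate the original weights as code * scale of the code's group."""
    return [c * scales[i // group_size] for i, c in enumerate(codes)]


def total_abs_error(weights: list[float], group_size: int) -> float:
    """Sum of absolute reconstruction errors after a quantize/dequantize round trip."""
    codes, scales = quantize_groups(weights, group_size)
    restored = dequantize_groups(codes, scales, group_size)
    return sum(abs(a - b) for a, b in zip(weights, restored))


if __name__ == "__main__":
    # A 256-wide row of small weights with one large outlier at the end.
    row = [0.01] * 255 + [10.0]
    for g in (256, 128):
        print(f"group size {g}: total abs error {total_abs_error(row, g):.4f}")
```

With a single scale for the whole row, the outlier inflates the scale until every small weight rounds to zero. Splitting the row into two groups confines that loss to the group containing the outlier.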

|

| 9 |

+

This model has been optimized with the following LoRA:

Compatible with RKLLM version: 1.1.2

## Useful links:

[Official RKLLM GitHub](https://github.com/airockchip/rknn-llm)

[RockchipNPU Reddit](https://reddit.com/r/RockchipNPU)

[EZRKNN-LLM](https://github.com/Pelochus/ezrknn-llm/)

Pretty much anything by these folks: [marty1885](https://github.com/marty1885) and [happyme531](https://huggingface.co/happyme531)

Converted using https://github.com/c0zaut/ez-er-rkllm-toolkit

# Original Model Card for base model, Marco-o1, below:

|

| 25 |

+

|

| 26 |

+

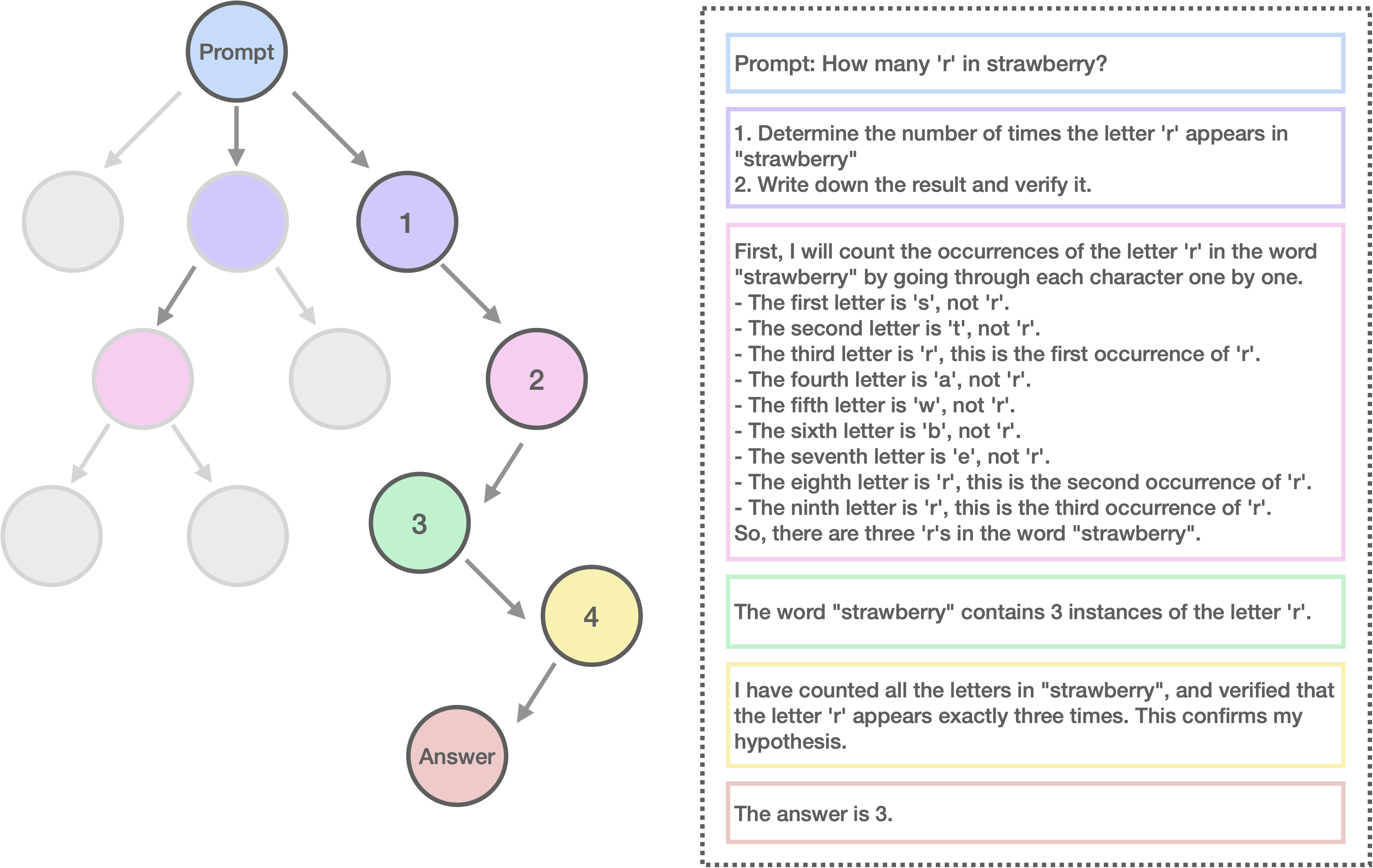

<p align="center">

|

| 27 |

+

<img src="assets/logo.png" width="150" style="margin-bottom: 0.2;"/>

|

| 28 |

+

|

| 29 |

+

<p>

|

| 30 |

+

|

| 31 |

+

# 🍓 Marco-o1: Towards Open Reasoning Models for Open-Ended Solutions

<!-- Broader Real-World Applications -->

<!-- # 🍓 Marco-o1: An Open Large Reasoning Model for Real-World Solutions -->

<!-- <h2 align="center"> <a href="https://github.com/AIDC-AI/Marco-o1/">Marco-o1</a></h2> -->

<!-- <h5 align="center"> If you appreciate our project, please consider giving us a star ⭐ on GitHub to stay updated with the latest developments. </h2> -->

<div align="center">

<!-- **Affiliations:** -->

⭐ _**MarcoPolo Team**_ ⭐

[_**AI Business, Alibaba International Digital Commerce**_](https://aidc-ai.com)

[**Github**](https://github.com/AIDC-AI/Marco-o1) 🤗 [**Hugging Face**](https://huggingface.co/AIDC-AI/Marco-o1) 📝 [**Paper**](https://arxiv.org/abs/2411.14405) 🧑‍💻 [**Model**](https://huggingface.co/AIDC-AI/Marco-o1) 🗂️ [**Data**](https://github.com/AIDC-AI/Marco-o1/tree/main/data) 📽️ [**Demo**](https://huggingface.co/AIDC-AI/Marco-o1)

</div>

🎯 **Marco-o1** focuses not only on disciplines with standard answers, such as mathematics, physics, and coding, which are well suited to reinforcement learning (RL), but also places greater emphasis on **open-ended resolutions**. We aim to address the question: _"Can the o1 model effectively generalize to broader domains where clear standards are absent and rewards are challenging to quantify?"_

Currently, the Marco-o1 Large Language Model (LLM) is powered by _Chain-of-Thought (CoT) fine-tuning_, _Monte Carlo Tree Search (MCTS)_, _reflection mechanisms_, and _innovative reasoning strategies_, all optimized for complex real-world problem-solving tasks.

⚠️ **Limitations:** <ins>We would like to emphasize that this research work is inspired by OpenAI's o1 (from which the name is also derived). This work aims to explore potential approaches to shed light on the currently unclear technical roadmap for large reasoning models. In addition, our focus is on open-ended questions, and we have observed interesting phenomena in multilingual applications. However, we must acknowledge that the current model primarily exhibits o1-like reasoning characteristics and its performance still falls short of a fully realized "o1" model. This is not a one-time effort, and we remain committed to continuous optimization and ongoing improvement.</ins>

## 🚀 Highlights

Currently, our work is distinguished by the following highlights:

- 🍀 Fine-Tuning with CoT Data: We develop Marco-o1-CoT by performing full-parameter fine-tuning on the base model using an open-source CoT dataset combined with our self-developed synthetic data.
- 🍀 Solution Space Expansion via MCTS: We integrate LLMs with MCTS (Marco-o1-MCTS), using the model's output confidence to guide the search and expand the solution space.
- 🍀 Reasoning Action Strategy: We implement novel reasoning action strategies and a reflection mechanism (Marco-o1-MCTS Mini-Step), including exploring different action granularities within the MCTS framework and prompting the model to self-reflect, thereby significantly enhancing the model's ability to solve complex problems.
- 🍀 Application in Translation Tasks: We are the first to apply Large Reasoning Models (LRMs) to machine translation, exploring inference-time scaling laws in the multilingual and translation domain.

OpenAI recently introduced the groundbreaking o1 model, renowned for its exceptional reasoning capabilities. This model has demonstrated outstanding performance on platforms such as AIME and CodeForces, surpassing other leading models. Inspired by this success, we aimed to push the boundaries of LLMs even further, enhancing their reasoning abilities to tackle complex, real-world challenges.

🌍 Marco-o1 leverages advanced techniques like CoT fine-tuning, MCTS, and Reasoning Action Strategies to enhance its reasoning power. As shown in Figure 2, by fine-tuning Qwen2-7B-Instruct with a combination of the filtered Open-O1 CoT dataset, Marco-o1 CoT dataset, and Marco-o1 Instruction dataset, Marco-o1 improved its handling of complex tasks. MCTS allows exploration of multiple reasoning paths using confidence scores derived from softmax-applied log probabilities of the top-k alternative tokens, guiding the model to optimal solutions. Moreover, our reasoning action strategy involves varying the granularity of actions within steps and mini-steps to optimize search efficiency and accuracy.
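The confidence score that guides MCTS can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation. Following our reading of the Marco-o1 paper, we assume:

- each generated token's confidence is the softmax of its log probability against the log probabilities of the top-k candidate tokens at that position, with the chosen token included among them;
- a rollout's value is the mean confidence over its tokens.

The function names `token_confidence` and `rollout_value` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def token_confidence(chosen_logprob: float, topk_logprobs: Sequence[float]) -> float:
    """Softmax-normalise the chosen token's log probability over the top-k candidates.

    Assumes `topk_logprobs` already contains the chosen token's log probability.
    """
    # Subtract the max log probability for numerical stability before exponentiating.
    m = max(topk_logprobs)
    denom = sum(math.exp(lp - m) for lp in topk_logprobs)
    return math.exp(chosen_logprob - m) / denom


def rollout_value(steps: List[Tuple[float, Sequence[float]]]) -> float:
    """Average the per-token confidence scores of one rollout (hypothetical reward)."""
    scores = [token_confidence(chosen, alts) for chosen, alts in steps]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    alts = [math.log(0.5), math.log(0.3), math.log(0.2)]
    steps = [(math.log(0.5), alts), (math.log(0.2), alts)]
    print(round(rollout_value(steps), 4))  # 0.35
```

Normalising against only the top-k candidates, rather than the full vocabulary, makes the score relative. A token scores high when it clearly dominates its nearest competitors.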

<div align="center">
<img src="assets/intro_2.jpg" alt="Figure Description or Alt Text" width="80%">
<p><strong>Figure 2: </strong>The overview of Marco-o1.</p>
</div>

🌏 As shown in Figure 3, Marco-o1 achieved accuracy improvements of +6.17% on the MGSM (English) dataset and +5.60% on the MGSM (Chinese) dataset, showcasing enhanced reasoning capabilities.

<div align="center">
<img src="assets/results.jpg" alt="Figure Description or Alt Text" width="80%">
<p><strong>Figure 3: </strong>The main results of Marco-o1.</p>
</div>

🌎 Additionally, in translation tasks, we demonstrate that Marco-o1 excels at translating slang expressions. For example, it translates "这个鞋拥有踩屎感" (literal translation: "This shoe offers a stepping-on-poop sensation.") as "This shoe has a comfortable sole," showing its strong grasp of colloquial nuances.

<div align="center">
<img src="assets/translation.jpg" alt="Figure Description or Alt Text" width="80%">
<p><strong>Figure 4: </strong>The demonstration of translation task using Marco-o1.</p>
</div>

For more information, please visit our [**Github**](https://github.com/AIDC-AI/Marco-o1).

## Usage

1. **Load Marco-o1-CoT model:**

```python
# Load model directly (downloads the original Hugging Face weights, not the .rkllm NPU builds)
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("AIDC-AI/Marco-o1")
model = AutoModelForCausalLM.from_pretrained("AIDC-AI/Marco-o1")
```

2. **Inference:**

Execute one of the inference scripts from the [GitHub repository](https://github.com/AIDC-AI/Marco-o1) (you can customize the inputs inside the script):

```bash
./src/talk_with_model.py

# Use vLLM
./src/talk_with_model_vllm.py
```

# 👨🏻‍💻 Acknowledgement

## Main Contributors

From MarcoPolo Team, AI Business, Alibaba International Digital Commerce:

- Yu Zhao
- [Huifeng Yin](https://github.com/HuifengYin)
- Hao Wang
- [Longyue Wang](http://www.longyuewang.com)

## Citation

If you find Marco-o1 useful for your research and applications, please cite:

```
@misc{zhao2024marcoo1openreasoningmodels,
      title={Marco-o1: Towards Open Reasoning Models for Open-Ended Solutions},
      author={Yu Zhao and Huifeng Yin and Bo Zeng and Hao Wang and Tianqi Shi and Chenyang Lyu and Longyue Wang and Weihua Luo and Kaifu Zhang},
      year={2024},
      eprint={2411.14405},
      archivePrefix={arXiv},
      primaryClass={cs.CL},
      url={https://arxiv.org/abs/2411.14405},
}
```

## LICENSE

This project is licensed under the [Apache License, Version 2.0](https://huggingface.co/datasets/choosealicense/licenses/blob/main/markdown/apache-2.0.md) (SPDX-License-Identifier: Apache-2.0).

## DISCLAIMER

We used compliance checking algorithms during the training process to ensure, to the best of our ability, the compliance of the trained model and dataset. Due to the complexity of the data and the diversity of language model usage scenarios, we cannot guarantee that the model is completely free of copyright issues or improper content. If you believe anything infringes on your rights or that the model generates improper content, please contact us, and we will promptly address the matter.

assets/img.png

ADDED

assets/intro_2.jpg

ADDED

assets/logo.png

ADDED

assets/results.jpg

ADDED

assets/translation.jpg

ADDED

config.json

ADDED

@@ -0,0 +1,28 @@

{
  "_name_or_path": "AIDC-AI/Macro-o1",
  "architectures": [
    "Qwen2ForCausalLM"
  ],
  "attention_dropout": 0.0,
  "bos_token_id": 151643,
  "eos_token_id": 151645,
  "hidden_act": "silu",
  "hidden_size": 3584,
  "initializer_range": 0.02,
  "intermediate_size": 18944,
  "max_position_embeddings": 32768,
  "max_window_layers": 28,
  "model_type": "qwen2",
  "num_attention_heads": 28,
  "num_hidden_layers": 28,
  "num_key_value_heads": 4,
  "rms_norm_eps": 1e-06,
  "rope_theta": 1000000.0,
  "sliding_window": null,
  "tie_word_embeddings": false,
  "torch_dtype": "bfloat16",
  "transformers_version": "4.41.2",
  "use_cache": true,
  "use_sliding_window": false,
  "vocab_size": 152064

}
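The architecture fields above can be sanity-checked by computing the parameter count they imply and comparing it with the `total_size` of 15231233024 bytes recorded in `model.safetensors.index.json`. The comparison relies on bfloat16 using 2 bytes per parameter.

This is a minimal sketch that assumes the Qwen2 layer layout suggested by the weight names in the index:

- biases on `q_proj`, `k_proj`, and `v_proj` only, with no bias on `o_proj`;
- two RMSNorm weight vectors per layer;
- a final norm weight, assumed from the usual Qwen2 layout.

The helper `qwen2_param_count` is hypothetical.

```python
import json

# Architecture fields copied from config.json above.
CONFIG = json.loads("""
{
  "hidden_size": 3584,
  "intermediate_size": 18944,
  "num_attention_heads": 28,
  "num_hidden_layers": 28,
  "num_key_value_heads": 4,
  "tie_word_embeddings": false,
  "vocab_size": 152064
}
""")


def qwen2_param_count(cfg: dict) -> int:
    """Count parameters for a Qwen2-style decoder (hypothetical helper, assumed layout)."""
    h = cfg["hidden_size"]
    ffn = cfg["intermediate_size"]
    head_dim = h // cfg["num_attention_heads"]      # 3584 / 28 = 128
    kv_dim = cfg["num_key_value_heads"] * head_dim  # grouped-query attention: 4 KV heads
    q = h * h + h                                   # q_proj weight + bias
    kv = 2 * (h * kv_dim + kv_dim)                  # k_proj and v_proj weights + biases
    o = h * h                                       # o_proj weight, no bias
    mlp = 3 * h * ffn                               # gate_proj, up_proj, down_proj
    norms = 2 * h                                   # input and post-attention RMSNorm
    per_layer = q + kv + o + mlp + norms
    embed = cfg["vocab_size"] * h
    lm_head = 0 if cfg["tie_word_embeddings"] else cfg["vocab_size"] * h
    final_norm = h
    return cfg["num_hidden_layers"] * per_layer + embed + lm_head + final_norm


params = qwen2_param_count(CONFIG)
bf16_bytes = params * 2  # bfloat16 stores 2 bytes per parameter
print(params, bf16_bytes)  # 7615616512 15231233024
```

With these values the computed byte count equals the index's `total_size`, which supports the assumed layout, including the separate `lm_head` implied by `"tie_word_embeddings": false`.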

generation_config.json

ADDED

@@ -0,0 +1,14 @@

{
  "bos_token_id": 151643,
  "pad_token_id": 151643,
  "do_sample": true,
  "eos_token_id": [
    151645,
    151643
  ],
  "repetition_penalty": 1.05,
  "temperature": 0.7,
  "top_k": 20,
  "top_p": 0.8,
  "transformers_version": "4.37.0"

}
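The sampling fields above (`temperature`, `top_k`, `top_p`) can be illustrated with a simplified, pure-Python sketch over a dictionary of token logits. It is not the Hugging Face `transformers` implementation, and `filter_candidates` is a hypothetical name. The sketch makes two assumptions:

- the common order applies: temperature scaling, then top-k truncation, then nucleus (top-p) filtering;
- `repetition_penalty` can be ignored.

```python
import math
from typing import Dict


def filter_candidates(
    logits: Dict[int, float],
    temperature: float = 0.7,
    top_k: int = 20,
    top_p: float = 0.8,
) -> Dict[int, float]:
    """Return the renormalised distribution that sampling would draw from (hypothetical helper)."""
    # 1. Temperature: divide logits; values below 1.0 sharpen the distribution.
    scaled = {tok: logit / temperature for tok, logit in logits.items()}
    # 2. Top-k: keep only the k highest-scoring tokens.
    ranked = sorted(scaled.items(), key=lambda kv: kv[1], reverse=True)[:top_k]
    # Softmax over the survivors (max-subtracted for numerical stability).
    m = ranked[0][1]
    exps = [(tok, math.exp(s - m)) for tok, s in ranked]
    z = sum(e for _, e in exps)
    # 3. Top-p: keep the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for tok, e in exps:
        p = e / z
        kept.append((tok, p))
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(p for _, p in kept)
    return {tok: p / total for tok, p in kept}


if __name__ == "__main__":
    logits = {0: 2.0, 1: 1.0, 2: 0.0, 3: -5.0}
    dist = filter_candidates(logits, temperature=1.0, top_k=3, top_p=0.8)
    print(sorted(dist))  # [0, 1]
```

The defaults mirror the values in `generation_config.json`. A temperature below 1.0 concentrates probability on the highest-scoring tokens before the top-k and top-p cut-offs are applied.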

merges.txt

ADDED

The diff for this file is too large to render.

model.safetensors.index.json

ADDED

@@ -0,0 +1,346 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 15231233024

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"lm_head.weight": "model-00004-of-00004.safetensors",

|

| 7 |

+

"model.embed_tokens.weight": "model-00001-of-00004.safetensors",

|

| 8 |

+

"model.layers.0.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 9 |

+

"model.layers.0.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

| 10 |

+

"model.layers.0.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 11 |

+

"model.layers.0.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

| 12 |

+

"model.layers.0.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 13 |

+

"model.layers.0.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",

|

| 14 |

+

"model.layers.0.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 15 |

+

"model.layers.0.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 16 |

+

"model.layers.0.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",

|

| 17 |

+

"model.layers.0.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 18 |

+

"model.layers.0.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",

|

| 19 |

+

"model.layers.0.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 20 |

+

"model.layers.1.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 21 |

+

"model.layers.1.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

| 22 |

+

"model.layers.1.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

| 23 |

+

"model.layers.1.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

| 24 |

+

"model.layers.1.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",

|

| 25 |

+

"model.layers.1.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",

|

| 26 |

+

"model.layers.1.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

| 27 |

+

"model.layers.1.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

| 28 |

+

"model.layers.1.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",

|

| 29 |

+

"model.layers.1.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

| 30 |

+

"model.layers.1.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",

|

| 31 |

+

"model.layers.1.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

| 32 |

+

"model.layers.10.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 33 |

+

"model.layers.10.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 34 |

+

"model.layers.10.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

| 35 |

+

"model.layers.10.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

| 36 |

+

"model.layers.10.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 37 |

+

"model.layers.10.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",

|

| 38 |

+

"model.layers.10.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

| 39 |

+

"model.layers.10.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

| 40 |

+

"model.layers.10.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",

|

| 41 |

+

"model.layers.10.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

| 42 |

+

"model.layers.10.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",

|

| 43 |

+

"model.layers.10.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

| 44 |

+

"model.layers.11.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

| 45 |

+

"model.layers.11.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

| 46 |

+