End of training

Browse files- README.md +2 -1

- all_results.json +12 -0

- eval_results.json +7 -0

- train_results.json +8 -0

- trainer_state.json +129 -0

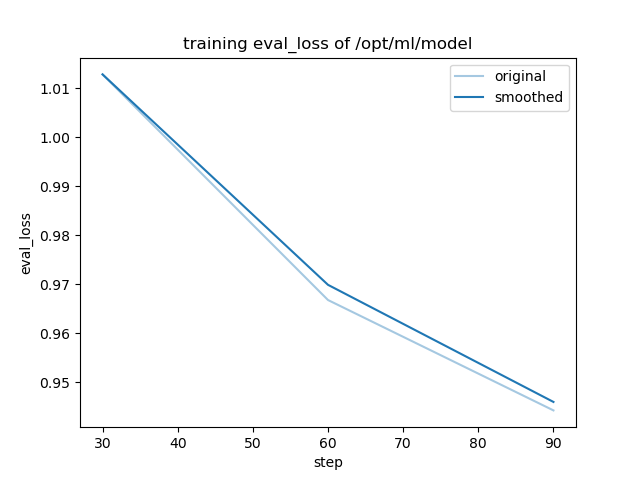

- training_eval_loss.png +0 -0

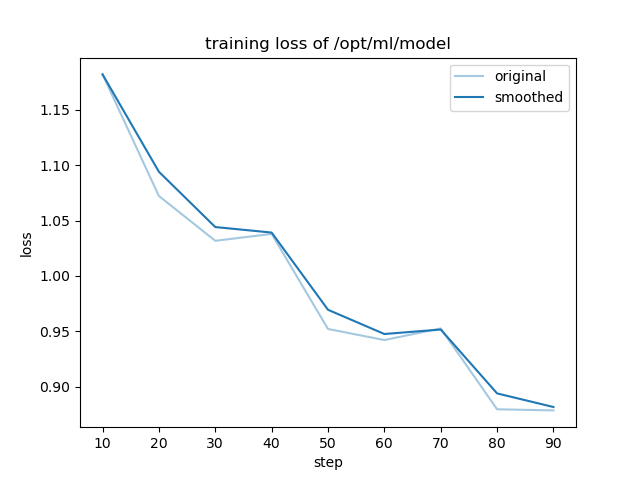

- training_loss.png +0 -0

README.md CHANGED

@@ -4,6 +4,7 @@ license: llama3.1
 base_model: meta-llama/Meta-Llama-3.1-8B
 tags:
 - llama-factory
+- full
 - generated_from_trainer
 model-index:
 - name: stackexchange_christianity
@@ -15,7 +16,7 @@ should probably proofread and complete it, then remove this comment. -->
 
 # stackexchange_christianity
 
-This model is a fine-tuned version of [meta-llama/Meta-Llama-3.1-8B](https://huggingface.co/meta-llama/Meta-Llama-3.1-8B) on
+This model is a fine-tuned version of [meta-llama/Meta-Llama-3.1-8B](https://huggingface.co/meta-llama/Meta-Llama-3.1-8B) on the mlfoundations-dev/stackexchange_christianity dataset.
 It achieves the following results on the evaluation set:
 - Loss: 0.9443
 

all_results.json ADDED

@@ -0,0 +1,12 @@
+{
+    "epoch": 2.959016393442623,
+    "eval_loss": 0.9442600607872009,
+    "eval_runtime": 32.2309,
+    "eval_samples_per_second": 25.472,
+    "eval_steps_per_second": 0.403,
+    "total_flos": 150543972433920.0,
+    "train_loss": 0.9920466634962294,
+    "train_runtime": 5576.9487,
+    "train_samples_per_second": 8.385,
+    "train_steps_per_second": 0.016
+}
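As a reading aid for these metrics: assuming the reported losses are mean per-token cross-entropy in nats (the usual convention for causal language model training with the Hugging Face Trainer, though this commit does not state it), they can be converted to perplexity with `exp(loss)`. The sketch below hard-codes the two loss values from all_results.json; the helper name `perplexity` is illustrative and not part of the commit.

```python
import math

# Values copied from all_results.json in this commit.
all_results = {
    "eval_loss": 0.9442600607872009,
    "train_loss": 0.9920466634962294,
}


def perplexity(loss: float) -> float:
    """Convert a mean cross-entropy loss (assumed to be in nats) to perplexity."""
    return math.exp(loss)


eval_ppl = perplexity(all_results["eval_loss"])
train_ppl = perplexity(all_results["train_loss"])

print(f"eval perplexity:  {eval_ppl:.3f}")
print(f"train perplexity: {train_ppl:.3f}")
```

Note that `train_loss` is averaged over the whole run, so it includes the higher losses from early steps and is not directly comparable to the final `eval_loss`.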

eval_results.json ADDED

@@ -0,0 +1,7 @@
+{
+    "epoch": 2.959016393442623,
+    "eval_loss": 0.9442600607872009,
+    "eval_runtime": 32.2309,
+    "eval_samples_per_second": 25.472,
+    "eval_steps_per_second": 0.403
+}
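The commit does not state the evaluation set size, but it can be estimated from the throughput figures: runtime multiplied by samples per second gives roughly 821 samples, and runtime multiplied by steps per second gives roughly 13 evaluation batches. This is a back-of-the-envelope sketch, assuming the reported rates are simple totals divided by runtime; the inputs are rounded, so the results are approximate.

```python
# Values copied from eval_results.json in this commit.
eval_results = {
    "eval_runtime": 32.2309,            # seconds
    "eval_samples_per_second": 25.472,
    "eval_steps_per_second": 0.403,
}

# rate * time recovers the (approximate) totals the rates were derived from.
approx_samples = round(
    eval_results["eval_runtime"] * eval_results["eval_samples_per_second"]
)
approx_steps = round(
    eval_results["eval_runtime"] * eval_results["eval_steps_per_second"]
)

print(f"approx. eval samples: {approx_samples}")
print(f"approx. eval steps:   {approx_steps}")
print(f"approx. samples/step: {approx_samples / approx_steps:.1f}")
```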

train_results.json ADDED

@@ -0,0 +1,8 @@
+{
+    "epoch": 2.959016393442623,
+    "total_flos": 150543972433920.0,
+    "train_loss": 0.9920466634962294,
+    "train_runtime": 5576.9487,
+    "train_samples_per_second": 8.385,
+    "train_steps_per_second": 0.016
+}

trainer_state.json ADDED

@@ -0,0 +1,129 @@
+{
+  "best_metric": null,
+  "best_model_checkpoint": null,
+  "epoch": 2.959016393442623,
+  "eval_steps": 500,
+  "global_step": 90,
+  "is_hyper_param_search": false,
+  "is_local_process_zero": true,
+  "is_world_process_zero": true,
+  "log_history": [
+    {
+      "epoch": 0.32786885245901637,
+      "grad_norm": 17.434409581805603,
+      "learning_rate": 5e-06,
+      "loss": 1.1821,
+      "step": 10
+    },
+    {
+      "epoch": 0.6557377049180327,
+      "grad_norm": 7.59380268216057,
+      "learning_rate": 5e-06,
+      "loss": 1.0722,
+      "step": 20
+    },
+    {
+      "epoch": 0.9836065573770492,
+      "grad_norm": 1.5863650387592914,
+      "learning_rate": 5e-06,
+      "loss": 1.0317,
+      "step": 30
+    },
+    {
+      "epoch": 0.9836065573770492,
+      "eval_loss": 1.0128474235534668,
+      "eval_runtime": 32.8639,
+      "eval_samples_per_second": 24.982,
+      "eval_steps_per_second": 0.396,
+      "step": 30
+    },
+    {
+      "epoch": 1.3155737704918034,
+      "grad_norm": 2.3139241529035615,
+      "learning_rate": 5e-06,
+      "loss": 1.0379,
+      "step": 40
+    },
+    {
+      "epoch": 1.6434426229508197,
+      "grad_norm": 1.0834656481897813,
+      "learning_rate": 5e-06,
+      "loss": 0.952,
+      "step": 50
+    },
+    {
+      "epoch": 1.971311475409836,
+      "grad_norm": 3.294871252924834,
+      "learning_rate": 5e-06,
+      "loss": 0.942,
+      "step": 60
+    },
+    {
+      "epoch": 1.971311475409836,
+      "eval_loss": 0.966790497303009,
+      "eval_runtime": 32.8378,
+      "eval_samples_per_second": 25.002,
+      "eval_steps_per_second": 0.396,
+      "step": 60
+    },
+    {
+      "epoch": 2.30327868852459,
+      "grad_norm": 2.469625552209217,
+      "learning_rate": 5e-06,
+      "loss": 0.9525,
+      "step": 70
+    },
+    {
+      "epoch": 2.6311475409836067,
+      "grad_norm": 1.8447831909454038,
+      "learning_rate": 5e-06,
+      "loss": 0.8794,
+      "step": 80
+    },
+    {
+      "epoch": 2.959016393442623,
+      "grad_norm": 1.9087591460647273,
+      "learning_rate": 5e-06,
+      "loss": 0.8784,
+      "step": 90
+    },
+    {
+      "epoch": 2.959016393442623,
+      "eval_loss": 0.9442600607872009,
+      "eval_runtime": 31.7685,
+      "eval_samples_per_second": 25.843,
+      "eval_steps_per_second": 0.409,
+      "step": 90
+    },
+    {
+      "epoch": 2.959016393442623,
+      "step": 90,
+      "total_flos": 150543972433920.0,
+      "train_loss": 0.9920466634962294,
+      "train_runtime": 5576.9487,
+      "train_samples_per_second": 8.385,
+      "train_steps_per_second": 0.016
+    }
+  ],
+  "logging_steps": 10,
+  "max_steps": 90,
+  "num_input_tokens_seen": 0,
+  "num_train_epochs": 3,
+  "save_steps": 500,
+  "stateful_callbacks": {
+    "TrainerControl": {
+      "args": {
+        "should_epoch_stop": false,
+        "should_evaluate": false,
+        "should_log": false,
+        "should_save": true,
+        "should_training_stop": true
+      },
+      "attributes": {}
+    }
+  },
+  "total_flos": 150543972433920.0,
+  "train_batch_size": 8,
+  "trial_name": null,
+  "trial_params": null
+}
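The `log_history` list interleaves three kinds of entries: training logs (with a `loss` key), evaluation logs (with an `eval_loss` key), and one final run summary (with a `train_loss` key). A minimal sketch of separating the first two into plottable series follows; the function name `split_log_history` is hypothetical, and the `state` dict is a shortened excerpt of the file above rather than the full log. The two PNG files added in this commit presumably plot these same series, but the commit itself does not confirm that.

```python
from typing import Any

# Shortened excerpt of "log_history" from trainer_state.json in this commit.
state: dict[str, Any] = {
    "log_history": [
        {"epoch": 0.32786885245901637, "loss": 1.1821, "step": 10},
        {"epoch": 0.9836065573770492, "loss": 1.0317, "step": 30},
        {"epoch": 0.9836065573770492, "eval_loss": 1.0128474235534668, "step": 30},
        {"epoch": 2.959016393442623, "loss": 0.8784, "step": 90},
        {"epoch": 2.959016393442623, "eval_loss": 0.9442600607872009, "step": 90},
        {"epoch": 2.959016393442623, "step": 90, "train_loss": 0.9920466634962294},
    ]
}


def split_log_history(
    state: dict[str, Any],
) -> tuple[list[tuple[int, float]], list[tuple[int, float]]]:
    """Return (train_points, eval_points) as lists of (step, loss) pairs."""
    train_points: list[tuple[int, float]] = []
    eval_points: list[tuple[int, float]] = []
    for entry in state["log_history"]:
        if "loss" in entry:
            # Periodic training log, emitted every `logging_steps` steps.
            train_points.append((entry["step"], entry["loss"]))
        elif "eval_loss" in entry:
            # Evaluation log.
            eval_points.append((entry["step"], entry["eval_loss"]))
        # The final summary entry has "train_loss" only and is skipped.
    return train_points, eval_points


train_points, eval_points = split_log_history(state)
print("train:", train_points)
print("eval: ", eval_points)
```

To run this against the real file, replace the inline `state` with `json.load(open("trainer_state.json"))` after importing `json`.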

training_eval_loss.png ADDED

training_loss.png ADDED