pushed

- .chainlit/config.toml +84 -0

- .env +1 -0

- Python RAQA Example.ipynb +1106 -0

- README.md +50 -11

- __pycache__/app.cpython-310.pyc +0 -0

- aimakerspace/__init__.py +0 -0

- aimakerspace/__pycache__/__init__.cpython-310.pyc +0 -0

- aimakerspace/__pycache__/__init__.cpython-311.pyc +0 -0

- aimakerspace/__pycache__/text_utils.cpython-310.pyc +0 -0

- aimakerspace/__pycache__/text_utils.cpython-311.pyc +0 -0

- aimakerspace/__pycache__/vectordatabase.cpython-310.pyc +0 -0

- aimakerspace/__pycache__/vectordatabase.cpython-311.pyc +0 -0

- aimakerspace/openai_utils/__init__.py +0 -0

- aimakerspace/openai_utils/__pycache__/__init__.cpython-310.pyc +0 -0

- aimakerspace/openai_utils/__pycache__/__init__.cpython-311.pyc +0 -0

- aimakerspace/openai_utils/__pycache__/chatmodel.cpython-310.pyc +0 -0

- aimakerspace/openai_utils/__pycache__/chatmodel.cpython-311.pyc +0 -0

- aimakerspace/openai_utils/__pycache__/embedding.cpython-310.pyc +0 -0

- aimakerspace/openai_utils/__pycache__/embedding.cpython-311.pyc +0 -0

- aimakerspace/openai_utils/__pycache__/prompts.cpython-310.pyc +0 -0

- aimakerspace/openai_utils/__pycache__/prompts.cpython-311.pyc +0 -0

- aimakerspace/openai_utils/chatmodel.py +27 -0

- aimakerspace/openai_utils/embedding.py +59 -0

- aimakerspace/openai_utils/prompts.py +75 -0

- aimakerspace/text_utils.py +77 -0

- aimakerspace/vectordatabase.py +81 -0

- app.py +137 -0

- chainlit.md +14 -0

- data/KingLear.txt +0 -0

- images/docchain_img.png +0 -0

- images/raqaapp_img.png +0 -0

- images/texsplitter_img.png +0 -0

- requirements.txt +12 -0

- wandb/run-20231205_135828-pd04j1u0/files/conda-environment.yaml +103 -0

- wandb/run-20231205_135828-pd04j1u0/files/config.yaml +26 -0

- wandb/run-20231205_135828-pd04j1u0/files/requirements.txt +81 -0

- wandb/run-20231205_135828-pd04j1u0/files/wandb-metadata.json +52 -0

- wandb/run-20231205_135828-pd04j1u0/files/wandb-summary.json +1 -0

- wandb/run-20231205_135828-pd04j1u0/logs/debug-internal.log +247 -0

- wandb/run-20231205_135828-pd04j1u0/logs/debug.log +43 -0

- wandb/run-20231205_135828-pd04j1u0/run-pd04j1u0.wandb +0 -0

.chainlit/config.toml

ADDED

|

@@ -0,0 +1,84 @@

| 1 |

+

[project]

|

| 2 |

+

# Whether to enable telemetry (default: true). No personal data is collected.

|

| 3 |

+

enable_telemetry = true

|

| 4 |

+

|

| 5 |

+

# List of environment variables to be provided by each user to use the app.

|

| 6 |

+

user_env = []

|

| 7 |

+

|

| 8 |

+

# Duration (in seconds) during which the session is saved when the connection is lost

|

| 9 |

+

session_timeout = 3600

|

| 10 |

+

|

| 11 |

+

# Enable third parties caching (e.g LangChain cache)

|

| 12 |

+

cache = false

|

| 13 |

+

|

| 14 |

+

# Follow symlink for asset mount (see https://github.com/Chainlit/chainlit/issues/317)

|

| 15 |

+

# follow_symlink = false

|

| 16 |

+

|

| 17 |

+

[features]

|

| 18 |

+

# Show the prompt playground

|

| 19 |

+

prompt_playground = true

|

| 20 |

+

|

| 21 |

+

# Process and display HTML in messages. This can be a security risk (see https://stackoverflow.com/questions/19603097/why-is-it-dangerous-to-render-user-generated-html-or-javascript)

|

| 22 |

+

unsafe_allow_html = false

|

| 23 |

+

|

| 24 |

+

# Process and display mathematical expressions. This can clash with "$" characters in messages.

|

| 25 |

+

latex = false

|

| 26 |

+

|

| 27 |

+

# Authorize users to upload files with messages

|

| 28 |

+

multi_modal = true

|

| 29 |

+

|

| 30 |

+

# Allows user to use speech to text

|

| 31 |

+

[features.speech_to_text]

|

| 32 |

+

enabled = false

|

| 33 |

+

# See all languages here https://github.com/JamesBrill/react-speech-recognition/blob/HEAD/docs/API.md#language-string

|

| 34 |

+

# language = "en-US"

|

| 35 |

+

|

| 36 |

+

[UI]

|

| 37 |

+

# Name of the app and chatbot.

|

| 38 |

+

name = "Chatbot"

|

| 39 |

+

|

| 40 |

+

# Show the readme while the conversation is empty.

|

| 41 |

+

show_readme_as_default = true

|

| 42 |

+

|

| 43 |

+

# Description of the app and chatbot. This is used for HTML tags.

|

| 44 |

+

# description = ""

|

| 45 |

+

|

| 46 |

+

# Large size content are by default collapsed for a cleaner ui

|

| 47 |

+

default_collapse_content = true

|

| 48 |

+

|

| 49 |

+

# The default value for the expand messages settings.

|

| 50 |

+

default_expand_messages = false

|

| 51 |

+

|

| 52 |

+

# Hide the chain of thought details from the user in the UI.

|

| 53 |

+

hide_cot = false

|

| 54 |

+

|

| 55 |

+

# Link to your github repo. This will add a github button in the UI's header.

|

| 56 |

+

# github = ""

|

| 57 |

+

|

| 58 |

+

# Specify a CSS file that can be used to customize the user interface.

|

| 59 |

+

# The CSS file can be served from the public directory or via an external link.

|

| 60 |

+

# custom_css = "/public/test.css"

|

| 61 |

+

|

| 62 |

+

# Override default MUI light theme. (Check theme.ts)

|

| 63 |

+

[UI.theme.light]

|

| 64 |

+

#background = "#FAFAFA"

|

| 65 |

+

#paper = "#FFFFFF"

|

| 66 |

+

|

| 67 |

+

[UI.theme.light.primary]

|

| 68 |

+

#main = "#F80061"

|

| 69 |

+

#dark = "#980039"

|

| 70 |

+

#light = "#FFE7EB"

|

| 71 |

+

|

| 72 |

+

# Override default MUI dark theme. (Check theme.ts)

|

| 73 |

+

[UI.theme.dark]

|

| 74 |

+

#background = "#FAFAFA"

|

| 75 |

+

#paper = "#FFFFFF"

|

| 76 |

+

|

| 77 |

+

[UI.theme.dark.primary]

|

| 78 |

+

#main = "#F80061"

|

| 79 |

+

#dark = "#980039"

|

| 80 |

+

#light = "#FFE7EB"

|

| 81 |

+

|

| 82 |

+

|

| 83 |

+

[meta]

|

| 84 |

+

generated_by = "0.7.700"

|

.env

ADDED

|

@@ -0,0 +1 @@

| 1 |

+

OPENAI_API_KEY=<redacted>

|
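The `.env` file above supplies `OPENAI_API_KEY` to the app. As a minimal sketch of how such a key might be read at startup, assuming it is exposed as an environment variable (for example by `python-dotenv`, which the notebook installs), the helper names `read_api_key` and `mask` below are hypothetical and not part of this repository:

```python
import os


def read_api_key(env=None, name="OPENAI_API_KEY"):
    """Return the key from the given mapping (default: os.environ), or raise if missing."""
    env = os.environ if env is None else env
    key = env.get(name, "").strip()
    if not key:
        raise RuntimeError(
            f"{name} is not set; export it or add it to an untracked .env file"
        )
    return key


def mask(key, visible=4):
    """Replace all but the last `visible` characters with '*' for safe logging."""
    return "*" * max(len(key) - visible, 0) + key[-visible:]


# Demonstration with a made-up value so the sketch runs without a real key.
demo_env = {"OPENAI_API_KEY": "sk-example-not-a-real-key"}
print(mask(read_api_key(demo_env)))
```

Keeping `.env` out of version control (for instance through `.gitignore`) and logging only the masked form is one common way to avoid exposing the key in a commit like this one.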

Python RAQA Example.ipynb

ADDED

|

@@ -0,0 +1,1106 @@

| 1 |

+

{

|

| 2 |

+

"cells": [

|

| 3 |

+

{

|

| 4 |

+

"cell_type": "markdown",

|

| 5 |

+

"metadata": {},

|

| 6 |

+

"source": [

|

| 7 |

+

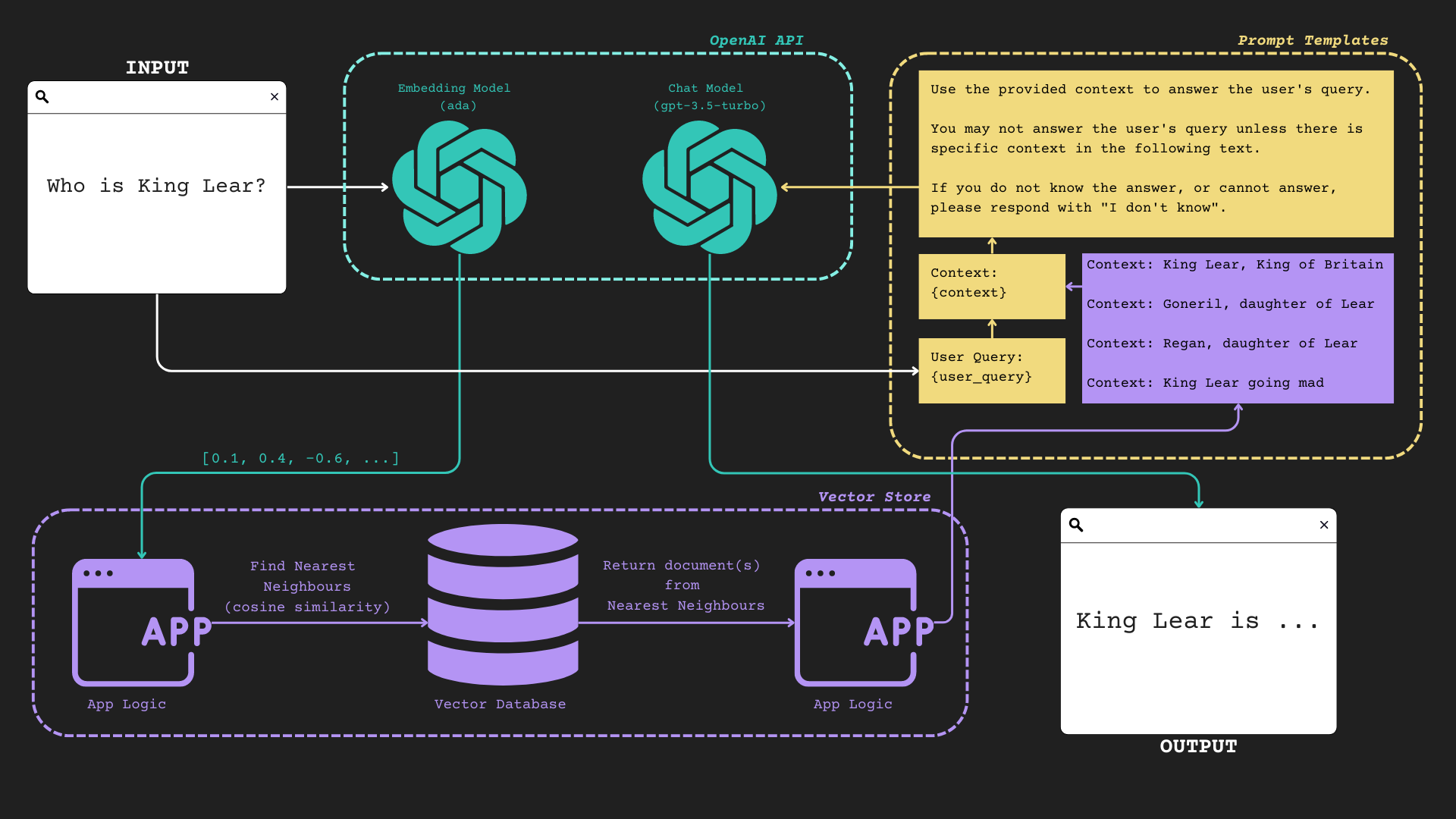

"# Your First RAQA Application\n",

|

| 8 |

+

"\n",

|

| 9 |

+

"In this notebook, we'll walk you through each of the components that are involved in a simple RAQA application. \n",

|

| 10 |

+

"\n",

|

| 11 |

+

"We won't be leveraging any fancy tools, just the OpenAI Python SDK, Numpy, and some classic Python.\n",

|

| 12 |

+

"\n",

|

| 13 |

+

"> NOTE: This was done with Python 3.11.4."

|

| 14 |

+

]

|

| 15 |

+

},

|

| 16 |

+

{

|

| 17 |

+

"cell_type": "markdown",

|

| 18 |

+

"metadata": {},

|

| 19 |

+

"source": [

|

| 20 |

+

"Let's look at a rather complicated looking visual representation of a basic RAQA application.\n",

|

| 21 |

+

"\n",

|

| 22 |

+

"<img src=\"https://i.imgur.com/LCNkd1A.png\" />"

|

| 23 |

+

]

|

| 24 |

+

},

|

| 25 |

+

{

|

| 26 |

+

"cell_type": "markdown",

|

| 27 |

+

"metadata": {},

|

| 28 |

+

"source": [

|

| 29 |

+

"### Imports and Utility \n",

|

| 30 |

+

"\n",

|

| 31 |

+

"We're just doing some imports and enabling `async` to work within the Jupyter environment here, nothing too crazy!"

|

| 32 |

+

]

|

| 33 |

+

},

|

| 34 |

+

{

|

| 35 |

+

"cell_type": "code",

|

| 36 |

+

"execution_count": 3,

|

| 37 |

+

"metadata": {},

|

| 38 |

+

"outputs": [],

|

| 39 |

+

"source": [

|

| 40 |

+

"!pip install -q -U numpy matplotlib plotly pandas scipy scikit-learn python-dotenv chainlit==0.7.700 cohere==4.37 openai==1.3.5 tiktoken==0.5.1"

|

| 41 |

+

]

|

| 42 |

+

},

|

| 43 |

+

{

|

| 44 |

+

"cell_type": "code",

|

| 45 |

+

"execution_count": 4,

|

| 46 |

+

"metadata": {},

|

| 47 |

+

"outputs": [],

|

| 48 |

+

"source": [

|

| 49 |

+

"from aimakerspace.text_utils import TextFileLoader, CharacterTextSplitter\n",

|

| 50 |

+

"from aimakerspace.vectordatabase import VectorDatabase\n",

|

| 51 |

+

"import asyncio"

|

| 52 |

+

]

|

| 53 |

+

},

|

| 54 |

+

{

|

| 55 |

+

"cell_type": "code",

|

| 56 |

+

"execution_count": 5,

|

| 57 |

+

"metadata": {},

|

| 58 |

+

"outputs": [],

|

| 59 |

+

"source": [

|

| 60 |

+

"import nest_asyncio\n",

|

| 61 |

+

"nest_asyncio.apply()"

|

| 62 |

+

]

|

| 63 |

+

},

|

| 64 |

+

{

|

| 65 |

+

"cell_type": "markdown",

|

| 66 |

+

"metadata": {},

|

| 67 |

+

"source": [

|

| 68 |

+

"# Documents\n",

|

| 69 |

+

"\n",

|

| 70 |

+

"We'll be concerning ourselves with this part of the flow in the following section:\n",

|

| 71 |

+

"\n",

|

| 72 |

+

"<img src=\"https://i.imgur.com/wBYB2x3.png\" />"

|

| 73 |

+

]

|

| 74 |

+

},

|

| 75 |

+

{

|

| 76 |

+

"cell_type": "markdown",

|

| 77 |

+

"metadata": {},

|

| 78 |

+

"source": [

|

| 79 |

+

"### Loading Source Documents\n",

|

| 80 |

+

"\n",

|

| 81 |

+

"So, first things first, we need some documents to work with. \n",

|

| 82 |

+

"\n",

|

| 83 |

+

"While we could work directly with the `.txt` files (or whatever file-types you wanted to extend this to) we can instead do some batch processing of those documents at the beginning in order to store them in a more machine compatible format. \n",

|

| 84 |

+

"\n",

|

| 85 |

+

"In this case, we're going to parse our text file into a single document in memory.\n",

|

| 86 |

+

"\n",

|

| 87 |

+

"Let's look at the relevant bits of the `TextFileLoader` class:\n",

|

| 88 |

+

"\n",

|

| 89 |

+

"```python\n",

|

| 90 |

+

"def load_file(self):\n",

|

| 91 |

+

" with open(self.path, \"r\", encoding=self.encoding) as f:\n",

|

| 92 |

+

" self.documents.append(f.read())\n",

|

| 93 |

+

"```\n",

|

| 94 |

+

"\n",

|

| 95 |

+

"We're simply loading the document using the built-in `open` function, and storing that output in our `self.documents` list.\n"

|

| 96 |

+

]

|

| 97 |

+

},

|
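The loading step described in the cell above can be sketched as a small standalone class. This is an illustration only: `SimpleTextLoader` is a hypothetical stand-in for `TextFileLoader`, and the only parts taken from the notebook are the `load_file` body and the `load_documents` call.

```python
import tempfile
from pathlib import Path


class SimpleTextLoader:
    """Hypothetical stand-in for TextFileLoader: reads one text file into a list."""

    def __init__(self, path, encoding="utf-8"):
        self.path = Path(path)
        self.encoding = encoding
        self.documents = []

    def load_file(self):
        # Same shape as the snippet quoted in the notebook cell.
        with open(self.path, "r", encoding=self.encoding) as f:
            self.documents.append(f.read())

    def load_documents(self):
        self.load_file()
        return self.documents


# Write a tiny sample file so the sketch runs without data/KingLear.txt.
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "sample.txt"
    sample.write_text("ACT I\nSCENE I. King Lear's palace.\n", encoding="utf-8")
    documents = SimpleTextLoader(sample).load_documents()

print(len(documents))
```

If a file starts with a byte-order mark, opening it with `encoding="utf-8-sig"` strips the leading `\ufeff` character that would otherwise end up in the first chunk.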

| 98 |

+

{

|

| 99 |

+

"cell_type": "code",

|

| 100 |

+

"execution_count": 5,

|

| 101 |

+

"metadata": {},

|

| 102 |

+

"outputs": [

|

| 103 |

+

{

|

| 104 |

+

"data": {

|

| 105 |

+

"text/plain": [

|

| 106 |

+

"1"

|

| 107 |

+

]

|

| 108 |

+

},

|

| 109 |

+

"execution_count": 5,

|

| 110 |

+

"metadata": {},

|

| 111 |

+

"output_type": "execute_result"

|

| 112 |

+

}

|

| 113 |

+

],

|

| 114 |

+

"source": [

|

| 115 |

+

"text_loader = TextFileLoader(\"data/KingLear.txt\")\n",

|

| 116 |

+

"documents = text_loader.load_documents()\n",

|

| 117 |

+

"len(documents)"

|

| 118 |

+

]

|

| 119 |

+

},

|

| 120 |

+

{

|

| 121 |

+

"cell_type": "code",

|

| 122 |

+

"execution_count": 7,

|

| 123 |

+

"metadata": {},

|

| 124 |

+

"outputs": [

|

| 125 |

+

{

|

| 126 |

+

"name": "stdout",

|

| 127 |

+

"output_type": "stream",

|

| 128 |

+

"text": [

|

| 129 |

+

"ACT I\n",

|

| 130 |

+

"SCENE I. King Lear's palace.\n",

|

| 131 |

+

"Enter KENT, GLOUCESTER, and EDMUND\n",

|

| 132 |

+

"KENT\n",

|

| 133 |

+

"I thought the king had more affected the Duke of\n",

|

| 134 |

+

"Albany than Cornwall.\n",

|

| 135 |

+

"GLOUCESTER\n",

|

| 136 |

+

"It did always seem so to us: but now, in the\n",

|

| 137 |

+

"division of the kingdom, it appears not which of\n",

|

| 138 |

+

"the dukes he values most; for equalities are so\n",

|

| 139 |

+

"weighed, that curiosity in neither can make choice\n",

|

| 140 |

+

"of either's moiety.\n",

|

| 141 |

+

"KENT\n",

|

| 142 |

+

"Is not this your son, my lord?\n",

|

| 143 |

+

"GLOUCESTER\n",

|

| 144 |

+

"His breeding, sir, hath been at my charge: I have\n",

|

| 145 |

+

"so often blushed to acknowledge him, that now I am\n",

|

| 146 |

+

"brazed to it.\n",

|

| 147 |

+

"KENT\n",

|

| 148 |

+

"I cannot conceive you.\n",

|

| 149 |

+

"GLOUCESTER\n",

|

| 150 |

+

"Sir, this young fellow's mot\n"

|

| 151 |

+

]

|

| 152 |

+

}

|

| 153 |

+

],

|

| 154 |

+

"source": [

|

| 155 |

+

"print(documents[0][:600])"

|

| 156 |

+

]

|

| 157 |

+

},

|

| 158 |

+

{

|

| 159 |

+

"cell_type": "markdown",

|

| 160 |

+

"metadata": {},

|

| 161 |

+

"source": [

|

| 162 |

+

"### Splitting Text Into Chunks\n",

|

| 163 |

+

"\n",

|

| 164 |

+

"As we can see, there is one document - and it's the entire text of King Lear.\n",

|

| 165 |

+

"\n",

|

| 166 |

+

"We'll want to chunk the document into smaller parts so it's easier to pass the most relevant snippets to the LLM. \n",

|

| 167 |

+

"\n",

|

| 168 |

+

"There is no fixed way to split/chunk documents - and you'll need to rely on some intuition as well as knowing your data *very* well in order to build the most robust system.\n",

|

| 169 |

+

"\n",

|

| 170 |

+

"For this toy example, we'll just split blindly on length. \n",

|

| 171 |

+

"\n",

|

| 172 |

+

">There's an opportunity to clear up some terminology here; for this course we will stick to the following: \n",

|

| 173 |

+

">\n",

|

| 174 |

+

">- \"source documents\" : The `.txt`, `.pdf`, `.html`, ..., files that make up the files and information we start with in its raw format\n",

|

| 175 |

+

">- \"document(s)\" : single (or more) text object(s)\n",

|

| 176 |

+

">- \"corpus\" : the combination of all of our documents"

|

| 177 |

+

]

|

| 178 |

+

},

|

| 179 |

+

{

|

| 180 |

+

"cell_type": "markdown",

|

| 181 |

+

"metadata": {},

|

| 182 |

+

"source": [

|

| 183 |

+

"Let's take a peek visually at what we're doing here - and why it might be useful:\n",

|

| 184 |

+

"\n",

|

| 185 |

+

"<img src=\"https://i.imgur.com/rtM6Ci6.png\" />"

|

| 186 |

+

]

|

| 187 |

+

},

|

| 188 |

+

{

|

| 189 |

+

"cell_type": "markdown",

|

| 190 |

+

"metadata": {},

|

| 191 |

+

"source": [

|

| 192 |

+

"As you can see (though it's not specifically true in this toy example) the idea of splitting documents is to break them into manageable sized chunks that retain the most relevant local context."

|

| 193 |

+

]

|

| 194 |

+

},

|
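Splitting "blindly on length", as the cells above describe, can be sketched with plain string slicing. The function names and the `chunk_size` / `chunk_overlap` defaults here are assumptions for illustration; the actual defaults of `CharacterTextSplitter` are not shown in this diff.

```python
def split_text(text, chunk_size=1000, chunk_overlap=200):
    """Cut `text` into fixed-length windows; consecutive windows share `chunk_overlap` characters."""
    if chunk_overlap >= chunk_size:
        raise ValueError("chunk_overlap must be smaller than chunk_size")
    step = chunk_size - chunk_overlap
    return [text[i:i + chunk_size] for i in range(0, len(text), step)]


def split_texts(texts, chunk_size=1000, chunk_overlap=200):
    """Apply split_text to every document and flatten the result into one list."""
    chunks = []
    for text in texts:
        chunks.extend(split_text(text, chunk_size, chunk_overlap))
    return chunks


# Tiny example so the overlap is easy to see.
chunks = split_text("abcdefghij", chunk_size=4, chunk_overlap=2)
print(chunks)
```

The overlap is a simple way to keep some local context when a sentence straddles a chunk boundary, at the cost of storing and embedding some text twice.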

| 195 |

+

{

|

| 196 |

+

"cell_type": "code",

|

| 197 |

+

"execution_count": 6,

|

| 198 |

+

"metadata": {},

|

| 199 |

+

"outputs": [

|

| 200 |

+

{

|

| 201 |

+

"ename": "NameError",

|

| 202 |

+

"evalue": "name 'documents' is not defined",

|

| 203 |

+

"output_type": "error",

|

| 204 |

+

"traceback": [

|

| 205 |

+

"\u001b[1;31m---------------------------------------------------------------------------\u001b[0m",

|

| 206 |

+

"\u001b[1;31mNameError\u001b[0m Traceback (most recent call last)",

|

| 207 |

+

"\u001b[1;32mc:\\ai\\llmops3\\repos\\vsrepo\\my-first-raqa\\Python RAQA Example.ipynb Cell 14\u001b[0m line \u001b[0;36m2\n\u001b[0;32m <a href='vscode-notebook-cell:/c%3A/ai/llmops3/repos/vsrepo/my-first-raqa/Python%20RAQA%20Example.ipynb#X16sZmlsZQ%3D%3D?line=0'>1</a>\u001b[0m text_splitter \u001b[39m=\u001b[39m CharacterTextSplitter()\n\u001b[1;32m----> <a href='vscode-notebook-cell:/c%3A/ai/llmops3/repos/vsrepo/my-first-raqa/Python%20RAQA%20Example.ipynb#X16sZmlsZQ%3D%3D?line=1'>2</a>\u001b[0m split_documents \u001b[39m=\u001b[39m text_splitter\u001b[39m.\u001b[39msplit_texts(documents)\n\u001b[0;32m <a href='vscode-notebook-cell:/c%3A/ai/llmops3/repos/vsrepo/my-first-raqa/Python%20RAQA%20Example.ipynb#X16sZmlsZQ%3D%3D?line=2'>3</a>\u001b[0m \u001b[39mlen\u001b[39m(split_documents)\n",

|

| 208 |

+

"\u001b[1;31mNameError\u001b[0m: name 'documents' is not defined"

|

| 209 |

+

]

|

| 210 |

+

}

|

| 211 |

+

],

|

| 212 |

+

"source": [

|

| 213 |

+

"text_splitter = CharacterTextSplitter()\n",

|

| 214 |

+

"split_documents = text_splitter.split_texts(documents)\n",

|

| 215 |

+

"len(split_documents)"

|

| 216 |

+

]

|

| 217 |

+

},

|

| 218 |

+

{

|

| 219 |

+

"cell_type": "markdown",

|

| 220 |

+

"metadata": {},

|

| 221 |

+

"source": [

|

| 222 |

+

"Let's take a look at some of the documents we've managed to split."

|

| 223 |

+

]

|

| 224 |

+

},

|

| 225 |

+

{

|

| 226 |

+

"cell_type": "code",

|

| 227 |

+

"execution_count": 16,

|

| 228 |

+

"metadata": {},

|

| 229 |

+

"outputs": [

|

| 230 |

+

{

|

| 231 |

+

"data": {

|

| 232 |

+

"text/plain": [

|

| 233 |

+

"[\"\\ufeffACT I\\nSCENE I. King Lear's palace.\\nEnter KENT, GLOUCESTER, and EDMUND\\nKENT\\nI thought the king had more affected the Duke of\\nAlbany than Cornwall.\\nGLOUCESTER\\nIt did always seem so to us: but now, in the\\ndivision of the kingdom, it appears not which of\\nthe dukes he values most; for equalities are so\\nweighed, that curiosity in neither can make choice\\nof either's moiety.\\nKENT\\nIs not this your son, my lord?\\nGLOUCESTER\\nHis breeding, sir, hath been at my charge: I have\\nso often blushed to acknowledge him, that now I am\\nbrazed to it.\\nKENT\\nI cannot conceive you.\\nGLOUCESTER\\nSir, this young fellow's mother could: whereupon\\nshe grew round-wombed, and had, indeed, sir, a son\\nfor her cradle ere she had a husband for her bed.\\nDo you smell a fault?\\nKENT\\nI cannot wish the fault undone, the issue of it\\nbeing so proper.\\nGLOUCESTER\\nBut I have, sir, a son by order of law, some year\\nelder than this, who yet is no dearer in my account:\\nthough this knave came something saucily into the\\nworld before he was se\"]"

|

| 234 |

+

]

|

| 235 |

+

},

|

| 236 |

+

"execution_count": 16,

|

| 237 |

+

"metadata": {},

|

| 238 |

+

"output_type": "execute_result"

|

| 239 |

+

}

|

| 240 |

+

],

|

| 241 |

+

"source": [

|

| 242 |

+

"split_documents[0:1]"

|

| 243 |

+

]

|

| 244 |

+

},

|

| 245 |

+

{

|

| 246 |

+

"cell_type": "markdown",

|

| 247 |

+

"metadata": {},

|

| 248 |

+

"source": [

|

| 249 |

+

"### Embeddings and Vectors\n",

|

| 250 |

+

"\n",

|

| 251 |

+

"Next, we have to convert our corpus into a \"machine readable\" format. \n",

|

| 252 |

+

"\n",

|

| 253 |

+

"Loosely, this means turning the text into numbers. \n",

|

| 254 |

+

"\n",

|

| 255 |

+

"There are plenty of resources that talk about this process in great detail - I'll leave this [blog](https://txt.cohere.com/sentence-word-embeddings/) from Cohere:AI as a resource if you want to deep dive a bit. \n",

|

| 256 |

+

"\n",

|

| 257 |

+

"Today, we're going to talk about the actual process of creating, and then storing, these embeddings, and how we can leverage that to intelligently add context to our queries."

|

| 258 |

+

]

|

| 259 |

+

},

|

| 260 |

+

{

|

| 261 |

+

"cell_type": "markdown",

|

| 262 |

+

"metadata": {},

|

| 263 |

+

"source": [

|

| 264 |

+

"While this is all baked into 1 call - let's look at some of the code that powers this process:\n",

|

| 265 |

+

"\n",

|

| 266 |

+

"Let's look at our `VectorDatabase().__init__()`:\n",

|

| 267 |

+

"\n",

|

| 268 |

+

"```python\n",

|

| 269 |

+

"def __init__(self, embedding_model: EmbeddingModel = None):\n",

|

| 270 |

+

" self.vectors = defaultdict(np.array)\n",

|

| 271 |

+

" self.embedding_model = embedding_model or EmbeddingModel()\n",

|

| 272 |

+

"```\n",

|

| 273 |

+

"\n",

|

| 274 |

+

"As you can see - our vectors are merely stored as a dictionary of `np.array` objects.\n",

|

| 275 |

+

"\n",

|

| 276 |

+

"Secondly, our `VectorDatabase()` has a default `EmbeddingModel()` which is a wrapper for OpenAI's `text-embedding-ada-002` model. \n",

|

| 277 |

+

"\n",

|

| 278 |

+

"> **Quick Info About `text-embedding-ada-002`**:\n",

|

| 279 |

+

"> - It has a context window of **8192** tokens\n",

|

| 280 |

+

"> - It returns vectors with dimension **1536**"

|

| 281 |

+

]

|

| 282 |

+

},

|

| 283 |

+

{

|

| 284 |

+

"cell_type": "code",

|

| 285 |

+

"execution_count": 17,

|

| 286 |

+

"metadata": {},

|

| 287 |

+

"outputs": [],

|

| 288 |

+

"source": [

|

| 289 |

+

"import os\n",

|

| 290 |

+

"import openai\n",

|

| 291 |

+

"from getpass import getpass\n",

|

| 292 |

+

"\n",

|

| 293 |

+

"openai.api_key = getpass(\"OpenAI API Key: \")\n",

|

| 294 |

+

"os.environ[\"OPENAI_API_KEY\"] = openai.api_key"

|

| 295 |

+

]

|

| 296 |

+

},

|

| 297 |

+

{

|

| 298 |

+

"cell_type": "markdown",

|

| 299 |

+

"metadata": {},

|

| 300 |

+

"source": [

|

| 301 |

+

"We can call the `async_get_embeddings` method of our `EmbeddingModel()` on a list of `str` and receive a list of `float` vectors (one per input string) back!\n",

|

| 302 |

+

"\n",

|

| 303 |

+

"```python\n",

|

| 304 |

+

"async def async_get_embeddings(self, list_of_text: List[str]) -> List[List[float]]:\n",

|

| 305 |

+

" return await aget_embeddings(\n",

|

| 306 |

+

" list_of_text=list_of_text, engine=self.embeddings_model_name\n",

|

| 307 |

+

" )\n",

|

| 308 |

+

"```"

|

| 309 |

+

]

|

| 310 |

+

},

|

| 311 |

+

{

|

| 312 |

+

"cell_type": "markdown",

|

| 313 |

+

"metadata": {},

|

| 314 |

+

"source": [

|

| 315 |

+

"We cast those to `np.array` when we build our `VectorDatabase()`:\n",

|

| 316 |

+

"\n",

|

| 317 |

+

"```python\n",

|

| 318 |

+

"async def abuild_from_list(self, list_of_text: List[str]) -> \"VectorDatabase\":\n",

|

| 319 |

+

" embeddings = await self.embedding_model.async_get_embeddings(list_of_text)\n",

|

| 320 |

+

" for text, embedding in zip(list_of_text, embeddings):\n",

|

| 321 |

+

" self.insert(text, np.array(embedding))\n",

|

| 322 |

+

" return self\n",

|

| 323 |

+

"```\n",

|

| 324 |

+

"\n",

|

| 325 |

+

"And that's all we need to do!"

|

| 326 |

+

]

|

| 327 |

+

},

|

| 328 |

+

{

|

| 329 |

+

"cell_type": "code",

|

| 330 |

+

"execution_count": 19,

|

| 331 |

+

"metadata": {},

|

| 332 |

+

"outputs": [],

|

| 333 |

+

"source": [

|

| 334 |

+

"vector_db = VectorDatabase()\n",

|

| 335 |

+

"vector_db = asyncio.run(vector_db.abuild_from_list(split_documents))"

|

| 336 |

+

]

|

| 337 |

+

},

|

| 338 |

+

{

|

| 339 |

+

"cell_type": "markdown",

|

| 340 |

+

"metadata": {},

|

| 341 |

+

"source": [

|

| 342 |

+

"So, to review what we've done so far in natural language:\n",

|

| 343 |

+

"\n",

|

| 344 |

+

"1. We load source documents\n",

|

| 345 |

+

"2. We split those source documents into smaller chunks (documents)\n",

|

| 346 |

+

"3. We send each of those documents to the `text-embedding-ada-002` OpenAI API endpoint\n",

|

| 347 |

+

"4. We store each text chunk as a key, with its vector representation as the value, in a dictionary"

|

| 348 |

+

]

|

| 349 |

+

},
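The four steps reviewed above can be sketched end to end without calling any API. This is a minimal illustration, not the `aimakerspace` implementation: `toy_embed`, `split_text`, and `build_store` are hypothetical names, the "embedding" is three crude character counts rather than a real model output, and plain lists stand in for `np.array` so the block runs with no dependencies.

```python
import asyncio
from typing import Dict, List


async def toy_embed(texts: List[str]) -> List[List[float]]:
    """Hypothetical stand-in for an embedding API call: one small vector per text."""
    vectors = []
    for text in texts:
        lowered = text.lower()
        # Three crude features: length, vowel count, space count.
        vectors.append([
            float(len(lowered)),
            float(sum(lowered.count(v) for v in "aeiou")),
            float(lowered.count(" ")),
        ])
    return vectors


def split_text(text: str, chunk_size: int, overlap: int) -> List[str]:
    """Split text into fixed-size character chunks that overlap by `overlap`."""
    step = chunk_size - overlap
    return [text[i:i + chunk_size] for i in range(0, len(text), step)]


async def build_store(source: str) -> Dict[str, List[float]]:
    chunks = split_text(source, chunk_size=20, overlap=5)  # step 2: split
    embeddings = await toy_embed(chunks)                    # step 3: embed
    return dict(zip(chunks, embeddings))                    # step 4: text -> vector


source = "Nothing will come of nothing: speak again."      # step 1: load
store = asyncio.run(build_store(source))

for chunk, vector in store.items():
    print(repr(chunk), vector)
```

One consequence of keying the dictionary by text is that two identical chunks would collapse into a single entry, which is acceptable for a toy example but worth knowing about.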

|

| 350 |

+

{

|

| 351 |

+

"cell_type": "markdown",

|

| 352 |

+

"metadata": {},

|

| 353 |

+

"source": [

|

| 354 |

+

"### Semantic Similarity\n",

|

| 355 |

+

"\n",

|

| 356 |

+

"The next step is to be able to query our `VectorDatabase()` with a `str` and have it return the vectors and text from our corpus that are most relevant. \n",

|

| 357 |

+

"\n",

|

| 358 |

+

"We're going to use the following process to achieve this in our toy example:\n",

|

| 359 |

+

"\n",

|

| 360 |

+

"1. We need to embed our query with the same `EmbeddingModel()` as we used to construct our `VectorDatabase()`\n",

|

| 361 |

+

"2. We loop through every vector in our `VectorDatabase()` and use a distance measure to compare how related they are\n",

|

| 362 |

+

"3. We return a list of the top `k` closest vectors, with their text representations\n",

|

| 363 |

+

"\n",

|

| 364 |

+

"There's some very heavy optimization that can be done at each of these steps - but let's just focus on the basic pattern in this notebook.\n",

|

| 365 |

+

"\n",

|

| 366 |

+

"> We are using [cosine similarity](https://www.engati.com/glossary/cosine-similarity) as our measure in this example (a higher score means the vectors are closer) - but there are many other similarity and distance measures you could use - like [these](https://flavien-vidal.medium.com/similarity-distances-for-natural-language-processing-16f63cd5ba55)\n",

|

| 367 |

+

"\n",

|

| 368 |

+

"> We are using a rather inefficient way of calculating relative distance between the query vector and all other vectors - there are more advanced approaches that are much more efficient, like [ANN](https://towardsdatascience.com/comprehensive-guide-to-approximate-nearest-neighbors-algorithms-8b94f057d6b6)"

|

| 369 |

+

]

|

| 370 |

+

},
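The loop-and-compare process described above can be sketched as a brute-force search. This is an illustrative version under stated assumptions: `cosine_similarity` and `search` are hypothetical helpers written with plain Python lists, and the query is passed in already embedded, so the first step (embedding the query with the same model) is left out.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between a and b; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat a zero vector as unrelated to everything
    return dot / (norm_a * norm_b)


def search(
    store: Dict[str, List[float]], query_vector: List[float], k: int
) -> List[Tuple[str, float]]:
    """Score every stored vector against the query and keep the k best."""
    scored = [
        (text, cosine_similarity(query_vector, vector))
        for text, vector in store.items()
    ]
    # Higher similarity means closer, so sort in descending order.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


toy_store = {
    "east": [1.0, 0.0],
    "north": [0.0, 1.0],
    "north-east": [1.0, 1.0],
}
results = search(toy_store, query_vector=[2.0, 0.1], k=2)
print([text for text, _ in results])
```

Every query touches every stored vector, so the cost grows linearly with the size of the corpus. That is the inefficiency the note about ANN refers to.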

|

| 371 |

+

{

|

| 372 |

+

"cell_type": "code",

|

| 373 |

+

"execution_count": 20,

|

| 374 |

+

"metadata": {},

|

| 375 |

+

"outputs": [

|

| 376 |

+

{

|

| 377 |

+

"data": {

|

| 378 |

+

"text/plain": [

|

| 379 |

+

"[(\"ng] O my good master!\\nKING LEAR\\nPrithee, away.\\nEDGAR\\n'Tis noble Kent, your friend.\\nKING LEAR\\nA plague upon you, murderers, traitors all!\\nI might have saved her; now she's gone for ever!\\nCordelia, Cordelia! stay a little. Ha!\\nWhat is't thou say'st? Her voice was ever soft,\\nGentle, and low, an excellent thing in woman.\\nI kill'd the slave that was a-hanging thee.\\nCaptain\\n'Tis true, my lords, he did.\\nKING LEAR\\nDid I not, fellow?\\nI have seen the day, with my good biting falchion\\nI would have made them skip: I am old now,\\nAnd these same crosses spoil me. Who are you?\\nMine eyes are not o' the best: I'll tell you straight.\\nKENT\\nIf fortune brag of two she loved and hated,\\nOne of them we behold.\\nKING LEAR\\nThis is a dull sight. Are you not Kent?\\nKENT\\nThe same,\\nYour servant Kent: Where is your servant Caius?\\nKING LEAR\\nHe's a good fellow, I can tell you that;\\nHe'll strike, and quickly too: he's dead and rotten.\\nKENT\\nNo, my good lord; I am the very man,--\\nKING LEAR\\nI'll see that straight.\\nKENT\\nThat,\",\n",

|

| 380 |

+

" 0.8344666931475856),\n",

|

| 381 |

+

" (\",\\nLay comforts to your bosom; and bestow\\nYour needful counsel to our business,\\nWhich craves the instant use.\\nGLOUCESTER\\nI serve you, madam:\\nYour graces are right welcome.\\nExeunt\\n\\nSCENE II. Before Gloucester's castle.\\nEnter KENT and OSWALD, severally\\nOSWALD\\nGood dawning to thee, friend: art of this house?\\nKENT\\nAy.\\nOSWALD\\nWhere may we set our horses?\\nKENT\\nI' the mire.\\nOSWALD\\nPrithee, if thou lovest me, tell me.\\nKENT\\nI love thee not.\\nOSWALD\\nWhy, then, I care not for thee.\\nKENT\\nIf I had thee in Lipsbury pinfold, I would make thee\\ncare for me.\\nOSWALD\\nWhy dost thou use me thus? I know thee not.\\nKENT\\nFellow, I know thee.\\nOSWALD\\nWhat dost thou know me for?\\nKENT\\nA knave; a rascal; an eater of broken meats; a\\nbase, proud, shallow, beggarly, three-suited,\\nhundred-pound, filthy, worsted-stocking knave; a\\nlily-livered, action-taking knave, a whoreson,\\nglass-gazing, super-serviceable finical rogue;\\none-trunk-inheriting slave; one that wouldst be a\\nbawd, in way of good service, and art nothing but\\nth\",\n",

|

| 382 |

+

" 0.8218615790372598),\n",

|

| 383 |

+

" (\" Caius?\\nKING LEAR\\nHe's a good fellow, I can tell you that;\\nHe'll strike, and quickly too: he's dead and rotten.\\nKENT\\nNo, my good lord; I am the very man,--\\nKING LEAR\\nI'll see that straight.\\nKENT\\nThat, from your first of difference and decay,\\nHave follow'd your sad steps.\\nKING LEAR\\nYou are welcome hither.\\nKENT\\nNor no man else: all's cheerless, dark, and deadly.\\nYour eldest daughters have fordone them selves,\\nAnd desperately are dead.\\nKING LEAR\\nAy, so I think.\\nALBANY\\nHe knows not what he says: and vain it is\\nThat we present us to him.\\nEDGAR\\nVery bootless.\\nEnter a Captain\\n\\nCaptain\\nEdmund is dead, my lord.\\nALBANY\\nThat's but a trifle here.\\nYou lords and noble friends, know our intent.\\nWhat comfort to this great decay may come\\nShall be applied: for us we will resign,\\nDuring the life of this old majesty,\\nTo him our absolute power:\\nTo EDGAR and KENT\\n\\nyou, to your rights:\\nWith boot, and such addition as your honours\\nHave more than merited. All friends shall taste\\nThe wages of their virtue, and \",\n",

|

| 384 |

+

" 0.8212563905734079)]"

|

| 385 |

+

]

|

| 386 |

+

},

|

| 387 |

+

"execution_count": 20,

|

| 388 |

+

"metadata": {},

|

| 389 |

+

"output_type": "execute_result"

|

| 390 |

+

}

|

| 391 |

+

],

|

| 392 |

+

"source": [

|

| 393 |

+

"vector_db.search_by_text(\"Your servant Kent. Where is your servant Caius?\", k=3)"

|

| 394 |

+

]

|

| 395 |

+

},

|

| 396 |

+

{

|

| 397 |

+

"cell_type": "markdown",

|

| 398 |

+

"metadata": {},

|

| 399 |

+

"source": [

|

| 400 |

+

"# Prompts\n",

|

| 401 |

+

"\n",

|

| 402 |

+

"In the following section, we'll be looking at the role of prompts - and how they help us to guide our application in the right direction.\n",

|

| 403 |

+

"\n",

|

| 404 |

+

"In this notebook, we're going to rely on the idea of \"zero-shot in-context learning\".\n",

|

| 405 |

+

"\n",

|

| 406 |

+

"This is a lot of words to say: \"We will ask it to perform our desired task in the prompt, and provide no examples.\""

|

| 407 |

+

]

|

| 408 |

+

},

|

| 409 |

+

{

|

| 410 |

+

"cell_type": "markdown",

|

| 411 |

+

"metadata": {},

|

| 412 |

+

"source": [

|

| 413 |

+

"### XYZRolePrompt\n",

|

| 414 |

+

"\n",

|

| 415 |

+

"Before we do that, let's stop and think a bit about how OpenAI's chat models work. \n",

|

| 416 |

+

"\n",

|

| 417 |

+

"We know they have roles - as is indicated in the following API [documentation](https://platform.openai.com/docs/api-reference/chat/create#chat/create-messages)\n",

|

| 418 |

+

"\n",

|

| 419 |

+

"There are three roles, and they function as follows (taken directly from [OpenAI](https://platform.openai.com/docs/guides/gpt/chat-completions-api)): \n",

|

| 420 |

+

"\n",

|

| 421 |

+

"- `{\"role\" : \"system\"}` : The system message helps set the behavior of the assistant. For example, you can modify the personality of the assistant or provide specific instructions about how it should behave throughout the conversation. However note that the system message is optional and the model’s behavior without a system message is likely to be similar to using a generic message such as \"You are a helpful assistant.\"\n",

|

| 422 |

+

"- `{\"role\" : \"user\"}` : The user messages provide requests or comments for the assistant to respond to.\n",

|

| 423 |

+

"- `{\"role\" : \"assistant\"}` : Assistant messages store previous assistant responses, but can also be written by you to give examples of desired behavior.\n",

|

| 424 |

+

"\n",

|

| 425 |

+

"The main idea is this: \n",

|

| 426 |

+

"\n",

|

| 427 |

+

"1. You start with a system message that outlines how the LLM should respond, what kind of behaviours you can expect from it, and more\n",

|

| 428 |

+

"2. Then, you can provide a few examples in the form of \"user\"/\"assistant\" pairs\n",

|

| 429 |

+

"3. Then, you prompt the model with the true \"user\" message.\n",

|

| 430 |

+

"\n",

|

| 431 |

+

"In this example, we'll be forgoing the 2nd step for simplicity's sake."

|

| 432 |

+

]

|

| 433 |

+

},
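The three-step pattern above maps directly onto the list of message dictionaries that a chat endpoint accepts. The sketch below only builds that list and makes no API call; `build_messages` is a hypothetical helper, not part of `aimakerspace`, and the prompt strings are made up for illustration.

```python
from typing import Dict, List, Optional, Tuple

Message = Dict[str, str]


def build_messages(
    system_prompt: str,
    user_prompt: str,
    examples: Optional[List[Tuple[str, str]]] = None,
) -> List[Message]:
    """Assemble messages: system first, optional examples, then the real query."""
    messages: List[Message] = [{"role": "system", "content": system_prompt}]
    # Step 2 (optional): each example is a (user, assistant) pair.
    for example_user, example_assistant in examples or []:
        messages.append({"role": "user", "content": example_user})
        messages.append({"role": "assistant", "content": example_assistant})
    # Step 3: the true user message always comes last.
    messages.append({"role": "user", "content": user_prompt})
    return messages


# Zero-shot: no examples, which is the approach taken in this notebook.
zero_shot = build_messages(
    "You answer questions about King Lear.",
    "Who is Caius?",
)

# Few-shot: the same call with one worked example added.
few_shot = build_messages(
    "You answer questions about King Lear.",
    "Who is Caius?",
    examples=[("Who is Cordelia?", "Lear's youngest daughter.")],
)
print(len(zero_shot), len(few_shot))
```

Skipping the examples keeps the prompt short and leaves more of the context window for retrieved passages, at the cost of giving the model less guidance on the desired answer format.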

|

| 434 |

+

{

|

| 435 |

+

"cell_type": "markdown",

|

| 436 |

+

"metadata": {},

|

| 437 |

+

"source": [

|

| 438 |

+

"#### Utility Functions\n",

|

| 439 |

+

"\n",

|

| 440 |

+

"You'll notice that we're using some utility functions from the `aimakerspace` module - let's take a peek at these and see what they're doing!"

|

| 441 |

+

]

|

| 442 |

+

},

|

| 443 |

+

{

|

| 444 |

+

"cell_type": "markdown",

|

| 445 |

+

"metadata": {},

|

| 446 |

+

"source": [

|

| 447 |

+

"##### XYZRolePrompt"

|

| 448 |

+

]

|

| 449 |

+

},

|

| 450 |

+

{

|

| 451 |

+

"cell_type": "markdown",

|

| 452 |

+

"metadata": {},

|

| 453 |

+

"source": [

|

| 454 |

+

"Here we have our `system`, `user`, and `assistant` role prompts. \n",

|

| 455 |

+

"\n",

|

| 456 |

+

"Let's take a peek at what they look like:\n",

|

| 457 |

+

"\n",

|

| 458 |

+

"```python\n",

|

| 459 |

+

"class BasePrompt:\n",

|

| 460 |

+

" def __init__(self, prompt):\n",

|

| 461 |

+

" \"\"\"\n",

|

| 462 |

+

" Initializes the BasePrompt object with a prompt template.\n",

|

| 463 |

+

"\n",

|

| 464 |

+

" :param prompt: A string that can contain placeholders within curly braces\n",

|

| 465 |

+

" \"\"\"\n",

|

| 466 |

+

" self.prompt = prompt\n",

|

| 467 |

+

" self._pattern = re.compile(r\"\\{([^}]+)\\}\")\n",

|

| 468 |

+

"\n",

|

| 469 |

+

" def format_prompt(self, **kwargs):\n",

|

| 470 |

+

" \"\"\"\n",

|

| 471 |

+

" Formats the prompt string using the keyword arguments provided.\n",

|

| 472 |

+

"\n",

|

| 473 |

+

" :param kwargs: The values to substitute into the prompt string\n",

|

| 474 |

+

" :return: The formatted prompt string\n",

|

| 475 |

+

" \"\"\"\n",

|

| 476 |

+

" matches = self._pattern.findall(self.prompt)\n",

|

| 477 |

+

" return self.prompt.format(**{match: kwargs.get(match, \"\") for match in matches})\n",

|

| 478 |

+

"\n",

|

| 479 |

+

" def get_input_variables(self):\n",

|

| 480 |

+

" \"\"\"\n",

|

| 481 |

+

" Gets the list of input variable names from the prompt string.\n",

|

| 482 |

+

"\n",

|

| 483 |

+

" :return: List of input variable names\n",

|

| 484 |

+

" \"\"\"\n",

|

| 485 |

+

" return self._pattern.findall(self.prompt)\n",

|

| 486 |

+

"```\n",

|

| 487 |

+

"\n",

|

| 488 |

+

"Then we have our `RolePrompt`, which narrows our focus to the role pattern found in most API endpoints for LLMs.\n",

|

| 489 |

+

"\n",

|

| 490 |

+

"```python\n",

|

| 491 |

+

"class RolePrompt(BasePrompt):\n",

|

| 492 |

+

" def __init__(self, prompt, role: str):\n",

|

| 493 |

+

" \"\"\"\n",

|

| 494 |

+

" Initializes the RolePrompt object with a prompt template and a role.\n",

|

| 495 |

+

"\n",

|

| 496 |

+

" :param prompt: A string that can contain placeholders within curly braces\n",

|

| 497 |

+

" :param role: The role for the message ('system', 'user', or 'assistant')\n",

|

| 498 |

+

" \"\"\"\n",

|

| 499 |

+

" super().__init__(prompt)\n",

|

| 500 |

+

" self.role = role\n",

|

| 501 |

+

"\n",

|

| 502 |

+

" def create_message(self, **kwargs):\n",

|

| 503 |

+

" \"\"\"\n",

|

| 504 |

+

" Creates a message dictionary with a role and a formatted message.\n",

|

| 505 |

+

"\n",

|