Commit 955f2c6

Parent(s): 08041b7

Update README.md


README.md

CHANGED

|

@@ -7,6 +7,12 @@ sdk: static

|

|

| 7 |

pinned: false

|

| 8 |

---

|

| 9 |

|

| 10 |

-

The official collection for our paper [LoraHub: Efficient Cross-Task Generalization via Dynamic LoRA Composition](https://arxiv.org/abs/2307.13269).


| 11 |

|

| 12 |

-

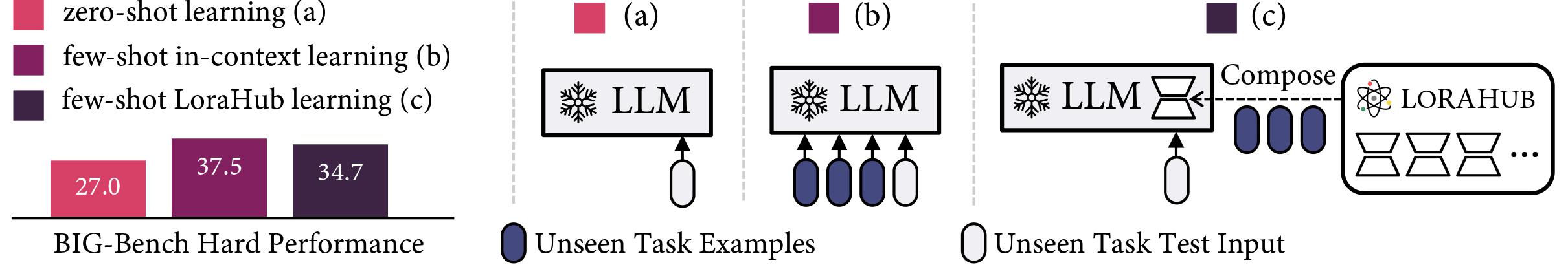

Low-rank adaptations (LoRA) are often employed to fine-tune large language models (LLMs) for new tasks. This paper investigates LoRA composability for cross-task generalization and introduces LoraHub, a strategic framework devised for the purposive assembly of LoRA modules trained on diverse given tasks, with the objective of achieving adaptable performance on unseen tasks. With just a few examples from a novel task, LoraHub enables the fluid combination of multiple LoRA modules, eradicating the need for human expertise. Notably, the composition requires neither additional model parameters nor gradients. Our empirical results, derived from the Big-Bench Hard (BBH) benchmark, suggest that LoraHub can effectively mimic the performance of in-context learning in few-shot scenarios, excluding the necessity of in-context examples alongside each inference input. A significant contribution of our research is the fostering of a community for LoRA, where users can share their trained LoRA modules, thereby facilitating their application to new tasks. We anticipate this resource will widen access to and spur advancements in general intelligence as well as LLMs in production. Code will be available at this https URL.

|

|

|

|

| 7 |

pinned: false

|

| 8 |

---

|

| 9 |

|

| 10 |

+

The official collection for our paper [LoraHub: Efficient Cross-Task Generalization via Dynamic LoRA Composition](https://arxiv.org/abs/2307.13269).

|

| 11 |

+

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

LoraHub is a framework for composing multiple LoRA modules trained on different tasks. The goal is to achieve good performance on unseen tasks from just a few examples, without extra parameters or training. We also want to build a marketplace where users can share their trained LoRA modules, making it easier to apply them to new tasks.

|

| 15 |

+

|

| 16 |

+

* **Code**: https://github.com/sail-sg/lorahub

|

| 17 |

+

* **Install**: `pip install lorahub`
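
To make "composing LoRA modules without extra parameters or training" concrete, here is a minimal pure-Python sketch. It assumes the composition is a weighted sum of each module's low-rank factors, with one scalar weight per module tuned by a gradient-free search on a few examples. It does not use the `lorahub` package API: every name below (`compose`, `few_shot_loss`, `search_weights`) is hypothetical, the toy squared-error loss stands in for a real task loss, and the simple hill climbing stands in for whichever gradient-free optimizer the library actually uses.

```python
import random
from typing import List, Sequence, Tuple

Matrix = List[List[float]]
LoraModule = Tuple[Matrix, Matrix]  # (A, B); the module's update is B @ A
Example = Tuple[List[float], List[float]]  # (input x, target y)


def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Plain-Python matrix product."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def weighted_sum(mats: Sequence[Matrix], weights: Sequence[float]) -> Matrix:
    """Element-wise sum_i weights[i] * mats[i] for same-shaped matrices."""
    rows, cols = len(mats[0]), len(mats[0][0])
    return [
        [sum(w * m[i][j] for w, m in zip(weights, mats)) for j in range(cols)]
        for i in range(rows)
    ]


def compose(modules: Sequence[LoraModule], weights: Sequence[float]) -> Matrix:
    """Merge modules into one update: (sum_i w_i B_i) @ (sum_i w_i A_i)."""
    a = weighted_sum([m[0] for m in modules], weights)
    b = weighted_sum([m[1] for m in modules], weights)
    return matmul(b, a)


def few_shot_loss(delta: Matrix, examples: Sequence[Example]) -> float:
    """Toy loss (assumption): squared error of delta @ x against y."""
    total = 0.0
    for x, y in examples:
        pred = [sum(w * xi for w, xi in zip(row, x)) for row in delta]
        total += sum((p - t) ** 2 for p, t in zip(pred, y))
    return total


def search_weights(modules, examples, steps=300, scale=0.3, seed=0):
    """Gradient-free hill climbing: keep a random perturbation only if it helps."""
    rng = random.Random(seed)
    best_w = [0.0] * len(modules)
    best_loss = few_shot_loss(compose(modules, best_w), examples)
    for _ in range(steps):
        cand = [w + rng.gauss(0.0, scale) for w in best_w]
        cand_loss = few_shot_loss(compose(modules, cand), examples)
        if cand_loss < best_loss:
            best_w, best_loss = cand, cand_loss
    return best_w, best_loss


if __name__ == "__main__":
    # Two rank-1 toy modules acting on 2-dimensional inputs.
    modules = [
        ([[1.0, 0.0]], [[1.0], [0.0]]),
        ([[0.0, 1.0]], [[0.0], [1.0]]),
    ]
    # A few examples from the "unseen" task.
    examples = [([1.0, 0.0], [1.0, 0.0]), ([0.0, 1.0], [0.0, 0.0])]
    weights, loss = search_weights(modules, examples)
    print("weights:", weights, "loss:", loss)
```

Only the per-module weights are searched; the module matrices themselves are never updated and no gradients are computed, which is the property the description above emphasizes.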

|

| 18 |
