Model Card for cloudyu/Mixtral-8x7B-Instruct-v0.1-DPO

try to improve mistralai/Mixtral-8x7B-Instruct-v0.1 by DPO training

Metrics improved by Truthful DPO traingin after 100 steps

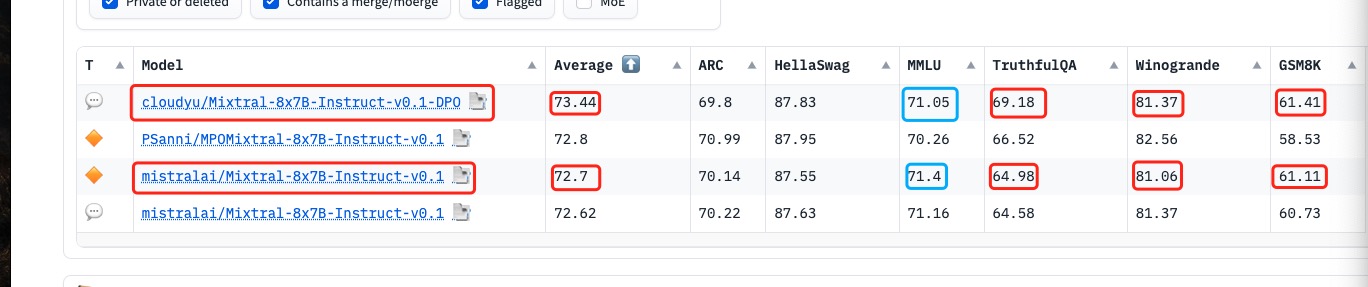

Open LLM Leaderboard Evaluation Results

Detailed results can be found here

| Metric | Value |

|---|---|

| Avg. | 73.44 |

| AI2 Reasoning Challenge (25-Shot) | 69.80 |

| HellaSwag (10-Shot) | 87.83 |

| MMLU (5-Shot) | 71.05 |

| TruthfulQA (0-shot) | 69.18 |

| Winogrande (5-shot) | 81.37 |

| GSM8k (5-shot) | 61.41 |

- Downloads last month

- 1,081

Inference Providers

NEW

This model is not currently available via any of the supported third-party Inference Providers, and

the model is not deployed on the HF Inference API.

Model tree for cloudyu/Mixtral-8x7B-Instruct-v0.1-DPO

Evaluation results

- normalized accuracy on AI2 Reasoning Challenge (25-Shot)test set Open LLM Leaderboard69.800

- normalized accuracy on HellaSwag (10-Shot)validation set Open LLM Leaderboard87.830

- accuracy on MMLU (5-Shot)test set Open LLM Leaderboard71.050

- mc2 on TruthfulQA (0-shot)validation set Open LLM Leaderboard69.180

- accuracy on Winogrande (5-shot)validation set Open LLM Leaderboard81.370

- accuracy on GSM8k (5-shot)test set Open LLM Leaderboard61.410