datasetId | author | last_modified | downloads | likes | tags | task_categories | createdAt | card |

|---|---|---|---|---|---|---|---|---|

ACCC1380/private-model | ACCC1380 | "2024-12-26T12:04:14Z" | 33,157 | 7 | [

"language:ch",

"license:apache-2.0",

"region:us"

] | null | "2023-06-13T11:48:06Z" | ---

license: apache-2.0

language:

- ch

---

# This Hugging Face repository mainly stores some important files from my computer

## If a file fails to download, replace huggingface.co in the download link with hf-mirror.com
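As a sketch of that tip, the helper below swaps the host of a `huggingface.co` download link for `hf-mirror.com` and leaves the rest of the URL unchanged. The function name `to_mirror` and the example file name are hypothetical. If you download through the `huggingface_hub` library rather than a browser, setting the `HF_ENDPOINT` environment variable to `https://hf-mirror.com` is a commonly used alternative; it is not shown here.

```python
from urllib.parse import urlsplit, urlunsplit


def to_mirror(url: str, mirror_host: str = "hf-mirror.com") -> str:
    """Swap the huggingface.co host in a download URL for a mirror host.

    URLs on any other host are returned unchanged.
    """
    parts = urlsplit(url)
    if parts.hostname != "huggingface.co":
        return url
    # Keep scheme, path, query and fragment; only the host changes
    return urlunsplit(parts._replace(netloc=mirror_host))


if __name__ == "__main__":
    link = "https://huggingface.co/datasets/ACCC1380/private-model/resolve/main/example.safetensors"
    print(to_mirror(link))
    # https://hf-mirror.com/datasets/ACCC1380/private-model/resolve/main/example.safetensors
```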

## If you also want to back up files here permanently, you can use my upload code as a reference:

```python

# Helper function: upload every file under the given folders to a Hugging Face dataset repo
from pathlib import Path

from huggingface_hub import HfApi, login

repo_id = 'ACCC1380/private-model'
yun_folders = ['/kaggle/input']


def hugface_upload(yun_folders, repo_id):
    hugToken = '********************'  # replace with your Hugging Face token
    if hugToken != '':
        login(token=hugToken)
    api = HfApi()
    print("HfApi instantiated")
    print("Starting file upload...")
    for yun_folder in yun_folders:
        folder_path = Path(yun_folder)
        if folder_path.exists() and folder_path.is_dir():
            for file_in_folder in folder_path.glob('**/*'):
                if file_in_folder.is_file():
                    try:
                        response = api.upload_file(
                            path_or_fileobj=file_in_folder,
                            # Keep the folder's own name as the top-level directory;
                            # as_posix() keeps "/" separators on Windows too
                            path_in_repo=file_in_folder.relative_to(folder_path.parent).as_posix(),
                            repo_id=repo_id,
                            repo_type="dataset"
                        )
                        print("File upload complete")
                        print(f"Response: {response}")
                    except Exception as e:
                        # Report the failure and move on to the next file
                        print(f"Failed to upload {file_in_folder}: {e}")
        else:
            print(f'Error: Folder {yun_folder} does not exist')


hugface_upload(yun_folders, repo_id)

```
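Because the code above uploads files one at a time, it can help to preview the repository paths before starting. The sketch below (a hypothetical `plan_uploads` helper) walks the folders the same way and returns `(local_path, path_in_repo)` pairs without contacting the Hub. The folder's own name becomes the top-level directory in the repo, so `/kaggle/input/a.txt` is stored as `input/a.txt`.

```python
from pathlib import Path


def plan_uploads(folders):
    """Return (local_path, path_in_repo) pairs without uploading anything.

    Uses the same mapping as hugface_upload: each file keeps its path relative
    to the parent of its folder.
    """
    plan = []
    for folder in folders:
        folder_path = Path(folder)
        if not folder_path.is_dir():
            print(f"Skipping {folder}: not a directory")
            continue
        for f in sorted(folder_path.glob("**/*")):
            if f.is_file():
                plan.append((f, f.relative_to(folder_path.parent).as_posix()))
    return plan


if __name__ == "__main__":
    for local, remote in plan_uploads(["/kaggle/input"]):
        print(local, "->", remote)
```

`huggingface_hub` also provides `HfApi.upload_folder`, which uploads a whole directory in a single commit; for many small files that may be gentler on the Hub than one `upload_file` commit per file, though it is not what the code above uses.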

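## Uploading from a local computer requires a proxy/VPN and can be slow. You can relay the upload through a server such as Kaggle instead, with download speeds of about 400MB/s and upload speeds of about 60MB/s.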

# Transferring models via Kaggle:

- Step 1: Download the file

```notebook

!apt install -y aria2
!aria2c -x 16 -s 16 -c -k 1M "put the download link inside these quotes" -o "saved_file_name.safetensors"

```
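For reference, here is what each `aria2c` flag in the cell above does, written as a small Python sketch that builds the same command as an argument list (handy if you run it from a script with `subprocess.run` rather than from a notebook cell). The helper name `aria2c_command` and the example URL are hypothetical.

```python
import shlex


def aria2c_command(url: str, output_name: str, connections: int = 16) -> list[str]:
    """Build the aria2c invocation used in the notebook cell above."""
    return [
        "aria2c",
        "-x", str(connections),  # --max-connection-per-server
        "-s", str(connections),  # --split: number of parallel segments
        "-c",                    # --continue: resume a partially downloaded file
        "-k", "1M",              # --min-split-size: do not split below 1 MiB
        url,
        "-o", output_name,       # --out: name of the saved file
    ]


if __name__ == "__main__":
    cmd = aria2c_command("https://example.com/model.safetensors", "model.safetensors")
    print(shlex.join(cmd))
    # To actually run it: subprocess.run(cmd, check=True)
```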

- Step 2: Upload with the API code above

```python

# Helper function: upload every file under the given folders to a Hugging Face dataset repo
from pathlib import Path

from huggingface_hub import HfApi, login

repo_id = 'ACCC1380/private-model'
yun_folders = ['/kaggle/working']  # Kaggle's output directory


def hugface_upload(yun_folders, repo_id):
    hugToken = '********************'  # replace with your Hugging Face token
    if hugToken != '':
        login(token=hugToken)
    api = HfApi()
    print("HfApi instantiated")
    print("Starting file upload...")
    for yun_folder in yun_folders:
        folder_path = Path(yun_folder)
        if folder_path.exists() and folder_path.is_dir():
            for file_in_folder in folder_path.glob('**/*'):
                if file_in_folder.is_file():
                    try:
                        response = api.upload_file(
                            path_or_fileobj=file_in_folder,
                            # Keep the folder's own name as the top-level directory;
                            # as_posix() keeps "/" separators on Windows too
                            path_in_repo=file_in_folder.relative_to(folder_path.parent).as_posix(),
                            repo_id=repo_id,
                            repo_type="dataset"
                        )
                        print("File upload complete")
                        print(f"Response: {response}")
                    except Exception as e:
                        # Report the failure and move on to the next file
                        print(f"Failed to upload {file_in_folder}: {e}")
        else:
            print(f'Error: Folder {yun_folder} does not exist')


hugface_upload(yun_folders, repo_id)

```

- Step 3: Wait for the upload to finish.
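One way to confirm the upload actually finished is to compare the paths you expected to upload with what the repo lists. The sketch below is a hypothetical helper that does the comparison offline; in practice the repo listing could come from `HfApi().list_repo_files(repo_id, repo_type="dataset")`, which is left as a comment so the example runs without network access.

```python
def missing_from_repo(planned_paths, repo_files):
    """Return the planned repo paths that do not (yet) appear in the repo listing."""
    present = set(repo_files)
    return [p for p in planned_paths if p not in present]


if __name__ == "__main__":
    # Made-up paths for illustration; in practice:
    # from huggingface_hub import HfApi
    # repo_files = HfApi().list_repo_files("ACCC1380/private-model", repo_type="dataset")
    planned = ["working/model.safetensors", "working/config.json"]
    repo_files = ["working/config.json", ".gitattributes"]
    print(missing_from_repo(planned, repo_files))  # ['working/model.safetensors']
```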

|

uoft-cs/cifar10 | uoft-cs | "2024-01-04T06:53:11Z" | 33,120 | 65 | [

"task_categories:image-classification",

"annotations_creators:crowdsourced",

"language_creators:found",

"multilinguality:monolingual",

"source_datasets:extended|other-80-Million-Tiny-Images",

"language:en",

"license:unknown",

"size_categories:10K<n<100K",

"format:parquet",

"modality:image",

"library:datasets",

"library:pandas",

"library:mlcroissant",

"library:polars",

"region:us"

] | [

"image-classification"

] | "2022-03-02T23:29:22Z" | ---

annotations_creators:

- crowdsourced

language_creators:

- found

language:

- en

license:

- unknown

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- extended|other-80-Million-Tiny-Images

task_categories:

- image-classification

task_ids: []

paperswithcode_id: cifar-10

pretty_name: Cifar10

dataset_info:

config_name: plain_text

features:

- name: img

dtype: image

- name: label

dtype:

class_label:

names:

'0': airplane

'1': automobile

'2': bird

'3': cat

'4': deer

'5': dog

'6': frog

'7': horse

'8': ship

'9': truck

splits:

- name: train

num_bytes: 113648310.0

num_examples: 50000

- name: test

num_bytes: 22731580.0

num_examples: 10000

download_size: 143646105

dataset_size: 136379890.0

configs:

- config_name: plain_text

data_files:

- split: train

path: plain_text/train-*

- split: test

path: plain_text/test-*

default: true

---

# Dataset Card for CIFAR-10

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://www.cs.toronto.edu/~kriz/cifar.html

- **Repository:**

- **Paper:** Learning Multiple Layers of Features from Tiny Images by Alex Krizhevsky

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

The CIFAR-10 dataset consists of 60000 32x32 colour images in 10 classes, with 6000 images per class. There are 50000 training images and 10000 test images.

The dataset is divided into five training batches and one test batch, each with 10000 images. The test batch contains exactly 1000 randomly-selected images from each class. The training batches contain the remaining images in random order, but some training batches may contain more images from one class than another. Between them, the training batches contain exactly 5000 images from each class.
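The class-balance claims above (5,000 images per class across the training batches, 1,000 per class in the test batch) are easy to check. This minimal sketch counts integer labels per class; the helper names are hypothetical.

```python
from collections import Counter


def per_class_counts(labels, num_classes=10):
    """Count how many examples carry each integer label 0..num_classes-1."""
    counts = Counter(labels)
    return [counts.get(c, 0) for c in range(num_classes)]


def is_balanced(labels, per_class, num_classes=10):
    """True if every class appears exactly `per_class` times."""
    return per_class_counts(labels, num_classes) == [per_class] * num_classes


if __name__ == "__main__":
    # Synthetic labels: 3 examples of each of the 10 classes
    toy = [c for c in range(10) for _ in range(3)]
    print(per_class_counts(toy))  # [3, 3, 3, 3, 3, 3, 3, 3, 3, 3]
    print(is_balanced(toy, 3))    # True
```

Assuming the dataset is loaded with `ds = load_dataset("uoft-cs/cifar10")`, calling `is_balanced(ds["train"]["label"], 5000)` and `is_balanced(ds["test"]["label"], 1000)` should both return `True` if the description holds.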

### Supported Tasks and Leaderboards

- `image-classification`: The goal of this task is to classify a given image into one of 10 classes. The leaderboard is available [here](https://paperswithcode.com/sota/image-classification-on-cifar-10).

### Languages

English

## Dataset Structure

### Data Instances

A sample from the training set is provided below:

```

{

'img': <PIL.PngImagePlugin.PngImageFile image mode=RGB size=32x32 at 0x201FA6EE748>,

'label': 0

}

```

### Data Fields

- img: A `PIL.Image.Image` object containing the 32x32 image. Note that when accessing the image column, e.g. `dataset[0]["img"]`, the image file is automatically decoded. Decoding a large number of image files can take a significant amount of time, so it is important to query the sample index before the `"img"` column: `dataset[0]["img"]` should **always** be preferred over `dataset["img"][0]`.

- label: 0-9 with the following correspondence:
  - 0: airplane
  - 1: automobile
  - 2: bird
  - 3: cat
  - 4: deer
  - 5: dog
  - 6: frog
  - 7: horse
  - 8: ship
  - 9: truck
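The same correspondence can be kept as a pair of lookup tables; this sketch follows the `id2label`/`label2id` naming that `transformers` model configs use. With the `datasets` library, `ds.features["label"].int2str(3)` should return the same name from the dataset's own `ClassLabel` feature.

```python
CIFAR10_CLASSES = [
    "airplane", "automobile", "bird", "cat", "deer",
    "dog", "frog", "horse", "ship", "truck",
]

# Integer label -> class name (the position in the list is the label)
id2label = dict(enumerate(CIFAR10_CLASSES))
# Class name -> integer label
label2id = {name: idx for idx, name in id2label.items()}

if __name__ == "__main__":
    print(id2label[0], label2id["truck"])  # airplane 9
```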

### Data Splits

Train (50,000 examples) and test (10,000 examples).

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

```

@TECHREPORT{Krizhevsky09learningmultiple,

author = {Alex Krizhevsky},

title = {Learning multiple layers of features from tiny images},

institution = {},

year = {2009}

}

```

### Contributions

Thanks to [@czabo](https://github.com/czabo) for adding this dataset. |

open-llm-leaderboard-old/results | open-llm-leaderboard-old | "2024-07-18T13:49:22Z" | 32,538 | 48 | [

"language:en",

"region:us"

] | null | "2023-06-19T15:15:24Z" | ---

language:

- en

---

# Open LLM Leaderboard Results

This repository contains the evaluation results of models submitted to the Open LLM Leaderboard. Our goal is to shed light on cutting-edge Large Language Models (LLMs) and chatbots, so that you can make well-informed decisions for your application.

## Evaluation Methodology

The evaluation process runs your models against several benchmarks from the EleutherAI Language Model Evaluation Harness, a unified framework for measuring the effectiveness of generative language models. Below is a brief overview of each benchmark:

1. AI2 Reasoning Challenge (ARC) - Grade-School Science Questions (25-shot)

2. HellaSwag - Commonsense Inference (10-shot)

3. MMLU - Massive Multi-Task Language Understanding, knowledge on 57 domains (5-shot)

4. TruthfulQA - Propensity to Produce Falsehoods (0-shot)

5. Winogrande - Adversarial Winograd Schema Challenge (5-shot)

6. GSM8K - Grade-School Math word problems, testing multi-step mathematical reasoning (5-shot)

Together, these benchmarks provide an assessment of a model's capabilities in terms of knowledge, reasoning, and some math, in various scenarios.
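A minimal sketch of how the six results might be combined into a single headline number, assuming an unweighted mean across benchmarks (this card does not state how scores are aggregated). The `N_SHOT` table restates the few-shot settings listed above; the dictionary keys and the example scores are hypothetical.

```python
# Few-shot settings from the list above (keys are hypothetical labels)
N_SHOT = {
    "ARC": 25,
    "HellaSwag": 10,
    "MMLU": 5,
    "TruthfulQA": 0,
    "Winogrande": 5,
    "GSM8K": 5,
}


def average_score(scores):
    """Unweighted mean over the six benchmarks; raises KeyError if any is missing."""
    missing = sorted(set(N_SHOT) - set(scores))
    if missing:
        raise KeyError(f"missing benchmarks: {missing}")
    return sum(scores[name] for name in N_SHOT) / len(N_SHOT)


if __name__ == "__main__":
    # Made-up scores, for illustration only
    example = {"ARC": 60, "HellaSwag": 80, "MMLU": 50,
               "TruthfulQA": 40, "Winogrande": 70, "GSM8K": 30}
    print(average_score(example))  # 55.0
```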

## Exploring Model Details

For further insights into the inputs and outputs of specific models, locate the "📄" emoji associated with the desired model in the leaderboard. Clicking on this icon will direct you to the respective GitHub page containing detailed information about the model's behavior during the evaluation process.

|

fsicoli/common_voice_15_0 | fsicoli | "2023-12-20T18:55:52Z" | 32,515 | 5 | [

"task_categories:automatic-speech-recognition",

"language:ab",

"language:af",

"language:am",

"language:ar",

"language:as",

"language:ast",

"language:az",

"language:ba",

"language:bas",

"language:be",

"language:bg",

"language:bn",

"language:br",

"language:ca",

"language:ckb",

"language:cnh",

"language:cs",

"language:cv",

"language:cy",

"language:da",

"language:de",

"language:dv",

"language:dyu",

"language:el",

"language:en",

"language:eo",

"language:es",

"language:et",

"language:eu",

"language:fa",

"language:fi",

"language:fr",

"language:gl",

"language:gn",

"language:ha",

"language:he",

"language:hi",

"language:hsb",

"language:hu",

"language:ia",

"language:id",

"language:ig",

"language:is",

"language:it",

"language:ja",

"language:ka",

"language:kab",

"language:kk",

"language:kmr",

"language:ko",

"language:ky",

"language:lg",

"language:lo",

"language:lt",

"language:lv",

"language:mdf",

"language:mhr",

"language:mk",

"language:ml",

"language:mn",

"language:mr",

"language:mrj",

"language:mt",

"language:myv",

"language:nl",

"language:oc",

"language:or",

"language:pl",

"language:ps",

"language:pt",

"language:quy",

"language:ro",

"language:ru",

"language:rw",

"language:sah",

"language:sat",

"language:sc",

"language:sk",

"language:skr",

"language:sl",

"language:sq",

"language:sr",

"language:sw",

"language:ta",

"language:th",

"language:ti",

"language:tig",

"language:tk",

"language:tok",

"language:tr",

"language:tt",

"language:tw",

"language:ug",

"language:uk",

"language:ur",

"language:uz",

"language:vi",

"language:vot",

"language:yue",

"language:zgh",

"language:zh",

"language:yo",

"license:cc",

"size_categories:100B<n<1T",

"region:us",

"mozilla",

"foundation"

] | [

"automatic-speech-recognition"

] | "2023-11-13T13:27:04Z" | ---

license: cc

language:

- ab

- af

- am

- ar

- as

- ast

- az

- ba

- bas

- be

- bg

- bn

- br

- ca

- ckb

- cnh

- cs

- cv

- cy

- da

- de

- dv

- dyu

- el

- en

- eo

- es

- et

- eu

- fa

- fi

- fr

- gl

- gn

- ha

- he

- hi

- hsb

- hu

- ia

- id

- ig

- is

- it

- ja

- ka

- kab

- kk

- kmr

- ko

- ky

- lg

- lo

- lt

- lv

- mdf

- mhr

- mk

- ml

- mn

- mr

- mrj

- mt

- myv

- nl

- oc

- or

- pl

- ps

- pt

- quy

- ro

- ru

- rw

- sah

- sat

- sc

- sk

- skr

- sl

- sq

- sr

- sw

- ta

- th

- ti

- tig

- tk

- tok

- tr

- tt

- tw

- ug

- uk

- ur

- uz

- vi

- vot

- yue

- zgh

- zh

- yo

task_categories:

- automatic-speech-recognition

pretty_name: Common Voice Corpus 15.0

size_categories:

- 100B<n<1T

tags:

- mozilla

- foundation

---

# Dataset Card for Common Voice Corpus 15.0

<!-- Provide a quick summary of the dataset. -->

This dataset is an unofficial version of the Mozilla Common Voice Corpus 15. It was downloaded and converted from the project's website https://commonvoice.mozilla.org/.

## Languages

```

Abkhaz, Albanian, Amharic, Arabic, Armenian, Assamese, Asturian, Azerbaijani, Basaa, Bashkir, Basque, Belarusian, Bengali, Breton, Bulgarian, Cantonese, Catalan, Central Kurdish, Chinese (China), Chinese (Hong Kong), Chinese (Taiwan), Chuvash, Czech, Danish, Dhivehi, Dioula, Dutch, English, Erzya, Esperanto, Estonian, Finnish, French, Frisian, Galician, Georgian, German, Greek, Guarani, Hakha Chin, Hausa, Hill Mari, Hindi, Hungarian, Icelandic, Igbo, Indonesian, Interlingua, Irish, Italian, Japanese, Kabyle, Kazakh, Kinyarwanda, Korean, Kurmanji Kurdish, Kyrgyz, Lao, Latvian, Lithuanian, Luganda, Macedonian, Malayalam, Maltese, Marathi, Meadow Mari, Moksha, Mongolian, Nepali, Norwegian Nynorsk, Occitan, Odia, Pashto, Persian, Polish, Portuguese, Punjabi, Quechua Chanka, Romanian, Romansh Sursilvan, Romansh Vallader, Russian, Sakha, Santali (Ol Chiki), Saraiki, Sardinian, Serbian, Slovak, Slovenian, Sorbian, Upper, Spanish, Swahili, Swedish, Taiwanese (Minnan), Tamazight, Tamil, Tatar, Thai, Tigre, Tigrinya, Toki Pona, Turkish, Turkmen, Twi, Ukrainian, Urdu, Uyghur, Uzbek, Vietnamese, Votic, Welsh, Yoruba

```

## How to use

The datasets library allows you to load and pre-process your dataset in pure Python, at scale. The dataset can be downloaded and prepared in one call to your local drive by using the load_dataset function.

For example, to download the Portuguese config, simply specify the corresponding language config name (i.e., "pt" for Portuguese):

```

from datasets import load_dataset

cv_15 = load_dataset("fsicoli/common_voice_15_0", "pt", split="train")

```

Using the datasets library, you can also stream the dataset on the fly by adding a `streaming=True` argument to the `load_dataset` function call. In streaming mode, samples are loaded one at a time as you iterate, rather than the entire dataset being downloaded to disk.

```

from datasets import load_dataset

cv_15 = load_dataset("fsicoli/common_voice_15_0", "pt", split="train", streaming=True)

print(next(iter(cv_15)))

```

Bonus: create a PyTorch dataloader directly with your own datasets (local/streamed).

### Local

```

from datasets import load_dataset
from torch.utils.data import DataLoader
from torch.utils.data.sampler import BatchSampler, RandomSampler

cv_15 = load_dataset("fsicoli/common_voice_15_0", "pt", split="train")
# Shuffle indices and group them into batches of 32
batch_sampler = BatchSampler(RandomSampler(cv_15), batch_size=32, drop_last=False)
dataloader = DataLoader(cv_15, batch_sampler=batch_sampler)

```

### Streaming

```

from datasets import load_dataset
from torch.utils.data import DataLoader

# streaming=True is what makes this the streaming variant
cv_15 = load_dataset("fsicoli/common_voice_15_0", "pt", split="train", streaming=True)
dataloader = DataLoader(cv_15, batch_size=32)

```

To find out more about loading and preparing audio datasets, head over to hf.co/blog/audio-datasets.

### Dataset Structure

#### Data Instances

A typical data point comprises the path to the audio file and its sentence. Additional fields include accent, age, client_id, up_votes, down_votes, gender, locale and segment.
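Since each clip carries `up_votes` and `down_votes`, a common preprocessing step is to keep only clips that listeners endorsed. The predicate below is a sketch: the margin of one net up-vote is an illustrative choice, not an official Common Voice validation rule, and the `int()` calls cover the assumption that vote counts might be stored as strings in this unofficial conversion.

```python
def has_net_upvotes(example, margin=1):
    """Return True if a clip's up-votes exceed its down-votes by at least `margin`.

    int() is applied in case vote counts are stored as strings (an assumption).
    """
    return int(example["up_votes"]) - int(example["down_votes"]) >= margin


if __name__ == "__main__":
    # Made-up rows using field names listed in the card
    clips = [
        {"path": "a.mp3", "sentence": "Olá", "up_votes": 2, "down_votes": 0},
        {"path": "b.mp3", "sentence": "Tchau", "up_votes": 1, "down_votes": 1},
    ]
    print([c["path"] for c in clips if has_net_upvotes(c)])  # ['a.mp3']
```

With the `datasets` library, `cv_15 = cv_15.filter(has_net_upvotes)` applies it in both regular and streaming mode.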

### Licensing Information

Public Domain, CC-0

### Citation Information

```

@inproceedings{commonvoice:2020,

author = {Ardila, R. and Branson, M. and Davis, K. and Henretty, M. and Kohler, M. and Meyer, J. and Morais, R. and Saunders, L. and Tyers, F. M. and Weber, G.},

title = {Common Voice: A Massively-Multilingual Speech Corpus},

booktitle = {Proceedings of the 12th Conference on Language Resources and Evaluation (LREC 2020)},

pages = {4211--4215},

year = 2020

}

``` |

ibrahimhamamci/CT-RATE | ibrahimhamamci | "2024-11-05T00:05:36Z" | 32,475 | 105 | [

"license:cc-by-nc-sa-4.0",

"size_categories:100K<n<1M",

"format:csv",

"modality:tabular",

"modality:text",

"library:datasets",

"library:pandas",

"library:mlcroissant",

"library:polars",

"arxiv:2403.17834",

"arxiv:2305.16037",

"arxiv:2403.06801",

"region:us"

] | null | "2024-02-09T17:54:34Z" | ---

title: "CT-RATE Dataset"

license: cc-by-nc-sa-4.0

extra_gated_prompt: |

## Terms and Conditions for Using the CT-RATE Dataset

**1. Acceptance of Terms**

Accessing and using the CT-RATE dataset implies your agreement to these terms and conditions. If you disagree with any part, please refrain from using the dataset.

**2. Permitted Use**

- The dataset is intended solely for academic, research, and educational purposes.

- Any commercial exploitation of the dataset without prior permission is strictly forbidden.

- You must adhere to all relevant laws, regulations, and research ethics, including data privacy and protection standards.

**3. Data Protection and Privacy**

- Acknowledge the presence of sensitive information within the dataset and commit to maintaining data confidentiality.

- Direct attempts to re-identify individuals from the dataset are prohibited.

- Ensure compliance with data protection laws such as GDPR and HIPAA.

**4. Attribution**

- Cite the dataset and acknowledge the providers in any publications resulting from its use.

- Claims of ownership or exclusive rights over the dataset or derivatives are not permitted.

**5. Redistribution**

- Redistribution of the dataset or any portion thereof is not allowed.

- Sharing derived data must respect the privacy and confidentiality terms set forth.

**6. Disclaimer**

The dataset is provided "as is" without warranty of any kind, either expressed or implied, including but not limited to the accuracy or completeness of the data.

**7. Limitation of Liability**

Under no circumstances will the dataset providers be liable for any claims or damages resulting from your use of the dataset.

**8. Access Revocation**

Violation of these terms may result in the termination of your access to the dataset.

**9. Amendments**

The terms and conditions may be updated at any time; continued use of the dataset signifies acceptance of the new terms.

**10. Governing Law**

These terms are governed by the laws of the location of the dataset providers, excluding conflict of law rules.

**Consent:**

Accessing and using the CT-RATE dataset signifies your acknowledgment and agreement to these terms and conditions.

extra_gated_fields:

Name: "text"

Institution: "text"

Email: "text"

I have read and agree with Terms and Conditions for using the CT-RATE dataset: "checkbox"

configs:

- config_name: labels

data_files:

- split: train

path: "dataset/multi_abnormality_labels/train_predicted_labels.csv"

- split: validation

path: "dataset/multi_abnormality_labels/valid_predicted_labels.csv"

- config_name: reports

data_files:

- split: train

path: "dataset/radiology_text_reports/train_reports.csv"

- split: validation

path: "dataset/radiology_text_reports/validation_reports.csv"

- config_name: metadata

data_files:

- split: train

path: "dataset/metadata/train_metadata.csv"

- split: validation

path: "dataset/metadata/validation_metadata.csv"

---

# [Developing Generalist Foundation Models from a Multimodal Dataset for 3D Computed Tomography](https://arxiv.org/abs/2403.17834)

Welcome to the official page for [our paper](https://arxiv.org/abs/2403.17834), which introduces **CT-RATE**—a pioneering dataset in 3D medical imaging that uniquely pairs textual data with image data focused on chest CT volumes. Here, you will find the CT-RATE dataset, comprising chest CT volumes paired with corresponding radiology text reports, multi-abnormality labels, and metadata, all freely accessible to researchers.

## CT-RATE: A novel dataset of chest CT volumes with corresponding radiology text reports

<p align="center">

<img src="https://github.com/ibrahimethemhamamci/CT-CLIP/blob/main/figures/CT-RATE.png?raw=true" width="100%">

</p>

A major challenge in computational research in 3D medical imaging is the lack of comprehensive datasets. Addressing this issue, we present CT-RATE, the first 3D medical imaging dataset that pairs images with textual reports. CT-RATE consists of 25,692 non-contrast chest CT volumes, expanded to 50,188 through various reconstructions, from 21,304 unique patients, along with corresponding radiology text reports, multi-abnormality labels, and metadata.

We divided the cohort into two groups: 20,000 patients were allocated to the training set and 1,304 to the validation set. Our folders are structured as split_patientID_scanID_reconstructionID. For instance, "valid_53_a_1" indicates that this is a CT volume from the validation set, scan "a" from patient 53, and reconstruction 1 of scan "a". This naming convention applies to all files.
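The naming convention lends itself to a small parser. This sketch assumes names start with `train` or `valid` (the card only shows a `valid` example), that scan IDs are lowercase letters, and that any file extension such as `.nii.gz` follows the reconstruction number; adjust the pattern if real file names differ. Pass only the file name (e.g. `Path(p).name`), not a full path.

```python
import re
from typing import NamedTuple


class VolumeId(NamedTuple):
    split: str              # "train" or "valid" (assumed prefixes)
    patient_id: int
    scan_id: str            # e.g. "a"
    reconstruction_id: int


# split_patientID_scanID_reconstructionID, optionally followed by an extension
_NAME_RE = re.compile(r"^(train|valid)_(\d+)_([a-z]+)_(\d+)(?:\..*)?$")


def parse_volume_name(name: str) -> VolumeId:
    m = _NAME_RE.match(name)
    if m is None:
        raise ValueError(f"unexpected CT-RATE name: {name!r}")
    split, patient, scan, recon = m.groups()
    return VolumeId(split, int(patient), scan, int(recon))


if __name__ == "__main__":
    print(parse_volume_name("valid_53_a_1"))
    # VolumeId(split='valid', patient_id=53, scan_id='a', reconstruction_id=1)
```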

## CT-CLIP: CT-focused contrastive language-image pre-training framework

<p align="center">

<img src="https://github.com/ibrahimethemhamamci/CT-CLIP/blob/main/figures/CT-CLIP.png?raw=true" width="100%">

</p>

Leveraging CT-RATE, we developed CT-CLIP, a CT-focused contrastive language-image pre-training framework. As a versatile, self-supervised model, CT-CLIP is designed for broad application and does not require task-specific training. Remarkably, CT-CLIP outperforms state-of-the-art, fully supervised methods in multi-abnormality detection across all key metrics, thus eliminating the need for manual annotation. We also demonstrate its utility in case retrieval, whether using imagery or textual queries, thereby advancing knowledge dissemination.

Our complete codebase is openly available on [our official GitHub repository](https://github.com/ibrahimethemhamamci/CT-CLIP).

## CT-CHAT: Vision-language foundational chat model for 3D chest CT volumes

<p align="center">

<img src="https://github.com/ibrahimethemhamamci/CT-CHAT/blob/main/figures/CTCHAT-demo.gif?raw=true" width="100%">

</p>

Leveraging [the VQA dataset](https://huggingface.co/datasets/ibrahimhamamci/CT-RATE/tree/main/dataset/vqa) derived from CT-RATE and the pretrained 3D vision encoder from CT-CLIP, we developed CT-CHAT, a multimodal AI assistant designed to enhance the interpretation and diagnostic capabilities of 3D chest CT imaging. Building on the strong foundation of CT-CLIP, it integrates both visual and language processing to handle diverse tasks like visual question answering, report generation, and multiple-choice questions. Trained on over 2.7 million question-answer pairs from CT-RATE, it leverages 3D spatial information, making it superior to 2D-based models. CT-CHAT not only improves radiologist workflows by reducing interpretation time but also delivers highly accurate and clinically relevant responses, pushing the boundaries of 3D medical imaging tasks.

Our complete codebase is openly available on [our official GitHub repository](https://github.com/ibrahimethemhamamci/CT-CHAT).

## Citing Us

When using this dataset, please consider citing the following related papers:

```

1. @misc{hamamci2024foundation,

title={Developing Generalist Foundation Models from a Multimodal Dataset for 3D Computed Tomography},

author={Ibrahim Ethem Hamamci and Sezgin Er and Furkan Almas and Ayse Gulnihan Simsek and Sevval Nil Esirgun and Irem Dogan and Muhammed Furkan Dasdelen and Omer Faruk Durugol and Bastian Wittmann and Tamaz Amiranashvili and Enis Simsar and Mehmet Simsar and Emine Bensu Erdemir and Abdullah Alanbay and Anjany Sekuboyina and Berkan Lafci and Christian Bluethgen and Mehmet Kemal Ozdemir and Bjoern Menze},

year={2024},

eprint={2403.17834},

archivePrefix={arXiv},

primaryClass={cs.CV},

url={https://arxiv.org/abs/2403.17834},

}

(Accepted to ECCV 2024)

2. @misc{hamamci2024generatect,

title={GenerateCT: Text-Conditional Generation of 3D Chest CT Volumes},

author={Ibrahim Ethem Hamamci and Sezgin Er and Anjany Sekuboyina and Enis Simsar and Alperen Tezcan and Ayse Gulnihan Simsek and Sevval Nil Esirgun and Furkan Almas and Irem Dogan and Muhammed Furkan Dasdelen and Chinmay Prabhakar and Hadrien Reynaud and Sarthak Pati and Christian Bluethgen and Mehmet Kemal Ozdemir and Bjoern Menze},

year={2024},

eprint={2305.16037},

archivePrefix={arXiv},

primaryClass={cs.CV},

url={https://arxiv.org/abs/2305.16037},

}

(Accepted to MICCAI 2024)

3. @misc{hamamci2024ct2rep,

title={CT2Rep: Automated Radiology Report Generation for 3D Medical Imaging},

author={Ibrahim Ethem Hamamci and Sezgin Er and Bjoern Menze},

year={2024},

eprint={2403.06801},

archivePrefix={arXiv},

primaryClass={eess.IV},

url={https://arxiv.org/abs/2403.06801},

}

```

## Ethical Approval

For those who require ethical approval to apply for grants with this dataset, it can be accessed [here](./ethical_approval.PDF).

## License

We are committed to fostering innovation and collaboration in the research community. To this end, all elements of the CT-RATE dataset are released under a [Creative Commons Attribution-NonCommercial-ShareAlike 4.0 (CC BY-NC-SA 4.0) license](https://creativecommons.org/licenses/by-nc-sa/4.0/). This licensing framework ensures that our contributions can be freely used for non-commercial research purposes, while also encouraging contributions and modifications, provided that the original work is properly cited and any derivative works are shared under similar terms. |

m-a-p/PIN-14M | m-a-p | "2024-12-20T04:00:22Z" | 31,987 | 27 | [

"language:en",

"language:zh",

"license:apache-2.0",

"size_categories:10K<n<100K",

"format:json",

"modality:text",

"library:datasets",

"library:pandas",

"library:mlcroissant",

"library:polars",

"arxiv:2406.13923",

"region:us",

"multimodal"

] | null | "2024-04-12T09:35:42Z" | ---

license: apache-2.0

language:

- en

- zh

configs:

- config_name: pin

data_files:

- split: train

path:

- data/DocLayNet/DocLayNet.jsonl

tags:

- multimodal

size_categories:

- 1B<n<10B

---

# PIN-14M

A mini version of "PIN: A Knowledge-Intensive Dataset for Paired and Interleaved Multimodal Documents"

Paper: https://arxiv.org/abs/2406.13923

This dataset contains **14M** samples in PIN format, with at least **7.33B** tokens.

🚀 News

[ 2024.12.12 ] !NEW! 🔥 We have updated the quality signals for all subsets, with the dataset now containing 7.33B tokens after Llama3 tokenization.

[ 2024.12.06 ] !NEW! 🔥 We have updated the quality signals, enabling a quick assessment of whether a sample meets the required specifications based on our quality indicators. More detailed descriptions will be provided in the forthcoming formal publication. (The Chinese-Markdown subset is excluded from this update; its remaining issues are currently being addressed.)


<img src="assets/intro.png">

## 0 Usage

Download ALL files

```bash

huggingface-cli download m-a-p/PIN-14M --repo-type=dataset --resume-download --local-dir "your_local_path"

```

Download ONLY **Jsonl** files

```bash

huggingface-cli download m-a-p/PIN-14M --repo-type=dataset --resume-download --include "*.jsonl" --local-dir "your_local_path"

```

Decompression

```bash

cat data.tar.part* > data.tar

tar -xvf data.tar

```
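The same two steps can be done in Python, for example on a machine without `cat`. This sketch streams the parts into `data.tar` and extracts it; like the shell glob, it assumes the part suffixes sort lexicographically in the correct order. The function name and the default pattern are assumptions based on the commands above.

```python
import shutil
import tarfile
from pathlib import Path


def join_and_extract(parts_dir, dest, pattern="data.tar.part*"):
    """Concatenate split tar parts in name order, then extract the archive.

    Mirrors `cat data.tar.part* > data.tar && tar -xvf data.tar`.
    """
    parts_dir, dest = Path(parts_dir), Path(dest)
    parts = sorted(parts_dir.glob(pattern))
    if not parts:
        raise FileNotFoundError(f"no files matching {pattern} in {parts_dir}")
    archive = parts_dir / "data.tar"
    with archive.open("wb") as out:
        for part in parts:
            with part.open("rb") as src:
                shutil.copyfileobj(src, out)  # stream; parts can be very large
    dest.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive) as tar:
        # Use the safer "data" extraction filter where this Python supports it
        if hasattr(tarfile, "data_filter"):
            tar.extractall(dest, filter="data")
        else:
            tar.extractall(dest)
    return archive
```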

## 1 Dataset statistics

| Subset | Documents (#) | Overall images (#) | Content images (#) | Documents (GB) | Overall images (GB) | Content images (GB) | Total tokens (llama3) |
|---|---|---|---|---|---|---|---|
| pg19 | 2,612,285 | 2,608,029 | 0 | 12.3 | 1,418.1 | 0.0 | 2,699,005,408 |
| OBELICS | 5,795,198 | 5,770,432 | 5,840,658 | 13.0 | 3,141.4 | 3,305.3 | 1,992,402,942 |
| mmc4-core-ff | 5,351,628 | 5,277,983 | 9,014,579 | 33.7 | 3,232.0 | 5,605.0 | 1,546,652,009 |
| chinese-markdown | 168,323 | 167,989 | 106,768 | 1.3 | 773.2 | 15.0 | 355,931,052 |
| leetcode | 2,360 | 2,360 | 0 | 0.016 | 1.3 | 0.0 | 4,102,212 |
| linux-cn | 9,564 | 9,564 | 38,960 | 0.082 | 11.9 | 1.8 | 17,432,641 |
| DocLayNet | 68,757 | 69,375 | 90,259 | 0.18 | 25.9 | 1.6 | 35,287,519 |
| PIN-PMC | 99,157 | 1,074,799 | 454,482 | 2.8 | 724.2 | 29.5 | 685,403,494 |
| **Total** | 14,107,272 | 14,980,531 | 15,545,706 | 63.4 | 9,328.0 | 8,958.3 | 7,336,217,277 |

Storage sizes are approximate and provided for reference only.

## 2 Data Structure

### 2.1 Subsets

We process 8 subsets: PIN-PMC, DocLayNet, Linux-CN, chinese-markdown, OBELICS, MMC4, leetcode, and PG19.

<img src="assets/dataset-example.png">

Note: We do not release the PIN-arXiv subset in the preview version.

### 2.2 Folder Structure

The `content_image` directory holds the images referenced within the markdown text, and the `overall_image` directory holds an overall visual rendering of each markdown document. The `JSONL` file contains the textual content along with the associated metadata.

An example subset:

```

example_dataset/

│

├── content_image/

├── overall_image/

└── example_dataset.jsonl

```

A subset with multiple parts:

```

example_dataset/

│

├── part00/

│ ├── content_image/

│ ├── overall_image/

│ └── part00.jsonl

│

├── part01/

│ ├── content_image/

│ ├── overall_image/

│ └── part01.jsonl

│

... - More similar parts

```

### 2.3 content_image Folder

This folder contains all the content images used in the markdown files.

Note: All images need to be converted to PNG format. The filename should be unique within the folder.

```

content_image/

│

├── 1.png

├── 2.png

...

```

### 2.4 overall_image Folder

This folder contains all the overall images for each sample.

Note: All images need to be converted to PNG format. The filename should be unique within the folder.

```

overall_image/

│

├── 1.png

├── 2.png

...

```

### 2.5 JSON Lines Format

We provide a detailed example of the annotations included with each data entry.

```

{
  "id": 1919,
  "meta": {
    "language": "en",
    "oi_exist": true,
    "oi_source": "compiling",
    "source_dataset": "example_source (e.g. OBELICS)",
    "ori_meta": {
      "document_url": "https://www.example.com/2022/02/21/example/",
      ...
    },
    "doc_id": 1997,
    "page_id": 0,
    "date_download": "2024-03-01"
  },
  "license": "CC-BY-4.0",
  "quality_signals": {
    "doc_length": 100,
    ...
  },
  "content_image": [
    "content_image/1997-0.png",
    "content_image/1997-1.png"
  ],
  "md": "<img src='content_image/1997-0.png'>\n\nThis is a fake sample data line, just for show.\n\nThis is a fake sample data line, just for show.\n\n<img src='content_image/1997-1.png'>\n\nThis is a fake sample data line, just for show.",
  "overall_image": "overall_image/1997.png"
}

```


**Field Descriptions:**

- **id**: Unique identifier for each entry.

- **meta**: Metadata for each multimodal document entry.

  - **language**: The document's language, such as Chinese (`zh`) or English (`en`).

  - **source_dataset**: If the document is converted from another dataset, the original dataset name is noted here; otherwise, it is None.

  - **doc_id**: A unique document identifier providing name and other details.

  - **page_id**: A unique page identifier indicating the document's page number. If there is only one page, this is None. Page IDs are usually numbered starting from 1 in multi-page documents.

  - **date_download**: The date the document was downloaded.

  - **ori_meta**: Original metadata from the source dataset, if available; otherwise, None.

  - **oi_exist**: Indicates whether an overall image exists (True or False).

  - **oi_source**: Source of the overall image: 'ori' for images taken from the original dataset and 'compiling' for images generated through code compilation. If this tag is missing, the image is likely compiled.

  - ...

- **quality_signals**: Quality indicators inspired by the design of RedPajama v2.

  - **doc_length**: Length of the document.

  - ...

- **content_image**: List of images mentioned in the document; None if no images are present.

- **overall_image**: Path to the corresponding overall image (a single path or a list of paths).

- **md**: The markdown content of the document.

- **license**: License information for the current sample.
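The following minimal sketch shows one way to consume this format. It reads a JSON Lines file and resolves each sample's image paths. It assumes that image paths are relative to a hypothetical dataset root directory that contains the `content_image/` and `overall_image/` folders, as the examples suggest. `iter_records` and `image_paths` are illustrative helpers, not part of any released tooling.

```python
import json
from pathlib import Path


def iter_records(jsonl_path):
    """Yield one parsed sample per non-empty line of a JSON Lines file."""
    with open(jsonl_path, encoding="utf-8") as f:
        for line in f:
            if line.strip():
                yield json.loads(line)


def image_paths(record, root):
    """Resolve content and overall image paths against an assumed dataset root."""
    root = Path(root)
    # content_image is a list of paths, or None when the document has no images
    content = [root / p for p in (record.get("content_image") or [])]
    # overall_image may be a single path or a list of paths (e.g. one per page)
    overall = record.get("overall_image") or []
    if isinstance(overall, str):
        overall = [overall]
    return content, [root / p for p in overall]
```

Normalizing `overall_image` to a list lets single-page and multi-page samples go through the same downstream code.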

## 3 Examples of jsonl files

We selected samples consisting of short markdown documents.

### 3.1 An example of DocLayNet

Notably, the dataset's overall images are converted from the original dataset's PDFs into PNG format.

```json

{

"id": 0,

"meta": {

"language": "en",

"oi_exist": true,

"oi_source": "ori",

"source_dataset": "DocLayNet",

"ori_meta": null,

"doc_id": "NYSE_F_2004.pdf",

"page_id": "0",

"date_download": "2024-3-24"

},

"quality_signals": null,

"license": "https://cdla.io/permissive-1-0/",

"content_image": [

"content_image/34102.jpg"

],

"overall_image": "overall_image/3562e47265520f7a72f3eac73aadfe19a78531698c3b50d7670b8ad9b214106b.png",

"md": "<img src='content_image/34102.jpg'>\n\n# Ford Motor Company / 2004 Annual Report \n\n# R W A R D F O R W A R D \n\n"

}

```

### 3.2 An example of OBELICS

```json

{

"id": 466502,

"meta": {

"language": "en",

"oi_exist": true,

"oi_source": "compiling",

"source_dataset": "OBELICS",

"ori_meta": {

"document_url": "https://www.donegaldaily.com/2022/02/21/watch-incredible-storm-surge-at-portsalon-golf-club/",

"unformatted_src": "https://www.donegaldaily.com/wp-content/uploads/2022/02/Screenshot-2022-02-21-at-17.54.30.jpg",

"src": "https://www.donegaldaily.com/wp-content/uploads/2022/02/Screenshot-2022-02-21-at-17.54.30.jpg",

"formatted_filename": "Screenshot at",

"rendered_width": 817,

"rendered_height": 419,

"original_width": 817,

"original_height": 419,

"format": "jpeg",

"general_meta": {

"url": "https://www.donegaldaily.com/2022/02/21/watch-incredible-storm-surge-at-portsalon-golf-club/",

"warc_filename": "crawl-data/CC-MAIN-2022-27/segments/1656103271864.14/warc/CC-MAIN-20220626192142-20220626222142-00308.warc.gz",

"warc_record_offset": 795020636,

"warc_record_length": 31271

}

},

"doc_id": 98496,

"page_id": 0,

"date_download": "2024-4-22"

},

"md": "<img src='content_image/98496-0.png'>\n\nThe golf course at Portsalon Golf Club took a battering today as a result of Storm Franklin.\n\nDonegal had been left battered and bruised overnight after Storm Franklin ripped across the county.\n\nThere were trees down on the approach roads to Donegal Town and in Gartan.\n\nThere were also trees down in Inishowen while there is also heavy water reported along the sides of roads with motorists asked to slow down and not put themselves in danger.\n\nDonegal’s coastline took a huge impact with massive waves reported along the coastline around the county.\n\nThe video, taken by Johnny Shields was taken from the tee box of the third hole.",

"license": "CC-BY-4.0",

"quality_signals": null,

"content_image": [

"content_image/98496-0.png"

],

"overall_image": "overall_image/98496-0.png"

}

```

### 3.3 An example of chinese-markdown

```json

{

"id": 7,

"meta": {

"language": "zh",

"oi_exist": true,

"oi_source": "compiling",

"source_dataset": "chinese-markdown",

"ori_meta": null,

"doc_id": 7,

"page_id": null,

"date_download": "2024-04-30"

},

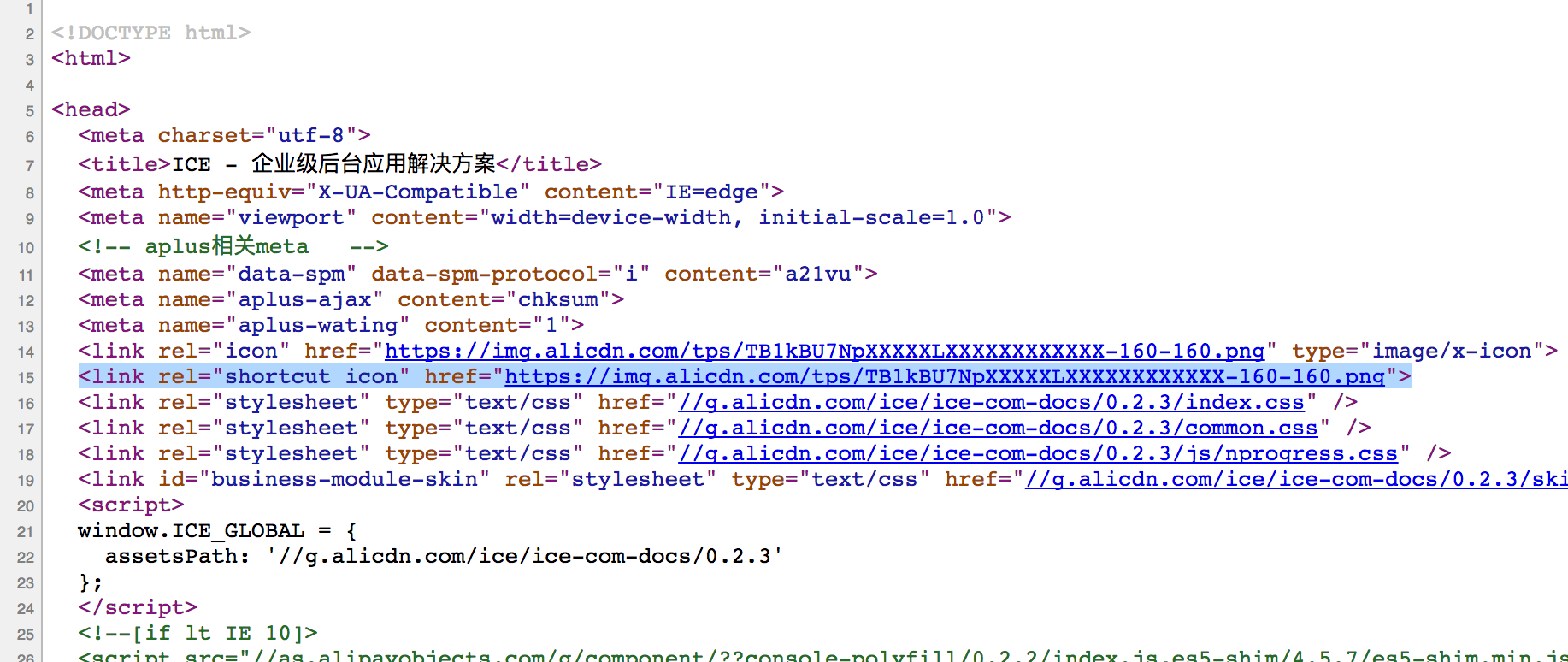

"md": "---\ntitle: 常见问题 QA\ncategory: 其它\norder: 1\n---\n\n> 持续更新中...\n> 如有问题可以到 <https://github.com/alibaba/ice/issues/new> 反馈\n\n## ICE 的浏览器兼容策略是什么\n\n由于 ICE 优先使用 React 16+,其需要的最低 IE 版本为 11,如果您需要在以下的版本使用,您可能需要引入一些 polyfill 来支持 `Map`, `Set` 等特性。参考[React 官网说明](https://reactjs.org/blog/2017/09/26/react-v16.0.html#javascript-environment-requirements)。\n\n以下代码可以帮助你在低版本 IE 下自动跳转到我们提供的提示浏览器升级页面。当然您也可以使用自定义的浏览器升级页面。\n\n```\n<!--[if lt IE 11]>\n<script>location.href = \"//www.taobao.com/markets/tbhome/ali-page-updater\"; </script>\n<![endif]-->\n```\n\n添加如上代码后,如果使用 IE11 及以下浏览器访问页面,则会自动跳转到统一引导升级浏览器的页面。\n\n## WebStorm/IDEA 编辑器卡顿现象\n\n由于项目在安装依赖后,产生文件夹 `node_modules` 含有较多的碎小文件,编辑器在索引文件引起的卡顿。\nWebStorm 中尤为明显,可通过 exclude `node_modules` 目录,不需要检索该文件夹下的内容。\n\n## 如何设置网页在浏览器 Tab 上面的 Icon (favicon)\n\n细心的同学可能会看到页面在浏览器 Tab 上面会有自定义的 Icon:\n\n\n\n如果你想要在自己站点上面加上这个 Icon 可以按照如下步骤添加:\n\n1. 准备一个 Icon,文件格式可以为 `.png` 或者 `.ico`,正方形,分辨率可以是 32x32px 或者 64x64px 文件体积要求尽可能小。\n2. 上传 CDN 拿到一个 url 或者在自己服务器配置静态资源服务\n3. 在 HTML 页面 `<head>` 标签里面添加如下代码:`<link rel=\"shortcut icon\" href=\"your-icon-url\">`\n \n\n这样就添加成功啦!\n\n## 如何在页面显示原始的 HTML 内容\n\n出于安全方面的考虑,React 默认会将节点中 html 代码进行转义,比如:\n\n```jsx\nclass Demo extends Component {\n render() {\n const content = 'hello <span>world</span>';\n return <div>{content}</div>;\n }\n}\n\n// 输出 hello <span>world</span>\n```\n\n如上,`<span>` 标签并不会在页面上被解析,而是被当成字符串输出了。React 提供了 `dangerouslySetInnerHTML` 属性帮助我们进行类似 `innerHTML` 的操作:\n\n```jsx\nclass Demo extends Component {\n render() {\n const content = 'hello <span>world</span>';\n return <div dangerouslySetInnerHTML={{ __html: content }} />;\n }\n}\n\n// 输出 hello world\n```\n\n更多内容请参考 [Dangerously Set innerHTML](https://reactjs.org/docs/dom-elements.html#dangerouslysetinnerhtml)\n\n## 之前创建的项目,遇到如下报错怎么办\n\n\n\n这是由于 ES6 Modules 的标准在物料中不兼容导致的。您可以把 `src/navs.js` 中最后一行修改为:\n\n```js\nexport const headerNavs = transform([\n ...autoGenHeaderNavs,\n ...customHeaderNavs,\n]);\n\nexport const asideNavs = 
transform([...autoGenAsideNavs, ...customAsideNavs]);\n```",

"license": "MIT",

"quality_signals": null,

"content_image": [

"content_image/7-0.png"

],

"overall_image": "overall_image/7.png"

}

```

### 3.4 An example of leetcode

```json

{

"id": 1,

"meta": {

"language": "en",

"doc_id": 1,

"page_id": null,

"oi_exist": true,

"oi_source": "compiling",

"source_dataset": "leetcode",

"date_download": "2024-05-05",

"ori_meta": {

"slug": "two-sum",

"difficulty": "Easy"

}

},

"quality_signals": null,

"license": "MIT",

"content_image": null,

"md": "# Two Sum\n\n- slug: two-sum\n- difficulty: Easy\n\nGiven an array of integers `nums` and an integer `target`, return _indices of the two numbers such that they add up to `target`_.\n\nYou may assume that each input would have **_exactly_ one solution**, and you may not use the _same_ element twice.\n\nYou can return the answer in any order.\n\n**Example 1:**\n\n**Input:** nums = \\[2,7,11,15\\], target = 9\n**Output:** \\[0,1\\]\n**Explanation:** Because nums\\[0\\] + nums\\[1\\] == 9, we return \\[0, 1\\].\n\n**Example 2:**\n\n**Input:** nums = \\[3,2,4\\], target = 6\n**Output:** \\[1,2\\]\n\n**Example 3:**\n\n**Input:** nums = \\[3,3\\], target = 6\n**Output:** \\[0,1\\]\n\n**Constraints:**\n\n* `2 <= nums.length <= 104`\n* `-109 <= nums[i] <= 109`\n* `-109 <= target <= 109`\n* **Only one valid answer exists.**\n\n**Follow-up:** Can you come up with an algorithm that is less than `O(n2)` time complexity?\n\n## A solution in Java\n\n```java\nimport java.util.HashMap;\nimport java.util.Map;\n\npublic int[] twoSum(int[] nums, int target) {\n Map<Integer, Integer> map = new HashMap<>();\n for (int i = 0; i < nums.length; i++) {\n int complement = target - nums[i];\n if (map.containsKey(complement)) {\n return new int[]{map.get(complement), i};\n }\n map.put(nums[i], i);\n }\n throw new IllegalArgumentException(\"No two sum solution\");\n}\n```\nThe algorithm leverages a hash map (unordered_map in C++, HashMap in Java, dictionary in Python, and Map in JavaScript). It iterates through the given 'nums' array and calculates the complementary value (target - current value). If the complementary value is already in the hash map, it means that we found a solution, and we return those indices. If the complement is not in the hash map, we store the current element in the hash map with its index. 
If the algorithm doesn't find the solution, it returns an empty array or throws an exception (in Java).\n\nThis approach has a time complexity of O(n) and a space complexity of O(n) as well.\n \n\n## A solution in C++\n\n```cpp\n#include <vector>\n#include <unordered_map>\n\nstd::vector<int> twoSum(std::vector<int>& nums, int target) {\n std::unordered_map<int, int> map;\n for (int i = 0; i < nums.size(); i++) {\n int complement = target - nums[i];\n if (map.find(complement) != map.end()) {\n return {map[complement], i};\n }\n map[nums[i]] = i;\n }\n return {};\n}\n```\nThe algorithm leverages a hash map (unordered_map in C++, HashMap in Java, dictionary in Python, and Map in JavaScript). It iterates through the given 'nums' array and calculates the complementary value (target - current value). If the complementary value is already in the hash map, it means that we found a solution, and we return those indices. If the complement is not in the hash map, we store the current element in the hash map with its index. If the algorithm doesn't find the solution, it returns an empty array or throws an exception (in Java).\n\nThis approach has a time complexity of O(n) and a space complexity of O(n) as well.\n \n\n## A solution in Python\n\n```python\ndef twoSum(nums, target):\n map = {}\n for i, num in enumerate(nums):\n complement = target - num\n if complement in map:\n return [map[complement], i]\n map[num] = i\n return []\n```\nThe algorithm leverages a hash map (unordered_map in C++, HashMap in Java, dictionary in Python, and Map in JavaScript). It iterates through the given 'nums' array and calculates the complementary value (target - current value). If the complementary value is already in the hash map, it means that we found a solution, and we return those indices. If the complement is not in the hash map, we store the current element in the hash map with its index. 
If the algorithm doesn't find the solution, it returns an empty array or throws an exception (in Java).\n\nThis approach has a time complexity of O(n) and a space complexity of O(n) as well.\n \n\n## A solution in Javascript\n\n```javascript\nfunction twoSum(nums, target) {\n const map = new Map();\n for (let i = 0; i < nums.length; i++) {\n const complement = target - nums[i];\n if (map.has(complement)) {\n return [map.get(complement), i];\n }\n map.set(nums[i], i);\n }\n return [];\n}\n```\nThe algorithm leverages a hash map (unordered_map in C++, HashMap in Java, dictionary in Python, and Map in JavaScript). It iterates through the given 'nums' array and calculates the complementary value (target - current value). If the complementary value is already in the hash map, it means that we found a solution, and we return those indices. If the complement is not in the hash map, we store the current element in the hash map with its index. If the algorithm doesn't find the solution, it returns an empty array or throws an exception (in Java).\n\nThis approach has a time complexity of O(n) and a space complexity of O(n) as well.\n \n",

"overall_image": "overall_image/1.png"

}

```

### 3.5 An example of linux-cn

```json

{

"id": 8,

"meta": {

"language": "zh",

"doc_id": 134,

"page_id": null,

"oi_exist": true,

"oi_source": "compiling",

"source_dataset": "linux-cn",

"date_download": "2024-05-06",

"ori_meta": {

"title": "Ubuntu 11.04正式发布!",

"author": "",

"fromurl": "",

"summary": "刚才接到的消息,Ubuntu 11.04已经正式发布!\r\n\r\n超快!易用!免费!\r\nUbuntu操作系统为世界上数以百万计的电脑、上网本和服务器提供了动力!\r\nUbuntu可以为你完成各种工作,管理你的文件、打印机、摄像头和MP3!并且它 ...",

"pic": "/data/attachment/album/201104/28/193933lnqqwwwn8l64wbn1.jpg.thumb.jpg",

"largepic": "/data/attachment/album/201104/28/193933lnqqwwwn8l64wbn1.jpg",

"titlepic": false,

"thumb": false,

"islctt": false,

"selector": "",

"translator": "",

"reviewer": "",

"editorchoice": false,

"tags": [

"Ubuntu 11.04",

"发布"

],

"category": "新闻",

"count": {

"commentnum": 0,

"favtimes": 0,

"likes": 0,

"sharetimes": 1,

"viewnum": 6165

},

"comments_data": [

],

"related": [

],

"excerpt": "刚才接到的消息,Ubuntu 11.04已经正式发布!\r\n\r\n超快!易用!免费!\r\nUbuntu操作系统为世界上数以百万计的电脑、上网本和服务器提供了动力!\r\nUbuntu可以为你完成各种工作,管理你的文件、打印机、摄像头和MP3!并且它 ...",

"date": "2011-05-09 13:24:00",

"updated": "2011-05-09 13:24:00",

"id": 134,

"permalink": "/article-134-1.html"

}

},

"quality_signals": null,

"license": "CC-BY-NC-4.0",

"content_image": [

"content_image/album_201104_28_193933lnqqwwwn8l64wbn1.jpg",

"content_image/album_201104_28_193935sy4l3bh4bh1ycbbc.jpg",

"content_image/album_201104_28_193936lyvc36fwv91l1359.jpg",

"content_image/album_201104_28_19393800rpr8pf0s8p8w0s.jpg"

],

"md": "# Ubuntu 11.04正式发布!\n\n刚才接到的消息,Ubuntu 11.04已经正式发布! \n \n 超快!易用!免费! \n Ubuntu操作系统为世界上数以百万计的电脑、上网本和服务器提供了动力! \n Ubuntu可以为你完成各种工作,管理你的文件、打印机、摄像头和MP3!并且它还带有数千个免费程序。 \n \n <img src=\"content_image/album_201104_28_193933lnqqwwwn8l64wbn1.jpg\" alt=\"\" title=\"\"> \n **数千个免费程序** \n \n <img src=\"content_image/album_201104_28_193935sy4l3bh4bh1ycbbc.jpg\" alt=\"\" title=\"\"> \n **终生免费升级** \n \n <img src=\"content_image/album_201104_28_193936lyvc36fwv91l1359.jpg\" alt=\"\" title=\"\"> \n **内建的病毒防护** \n \n <img src=\"content_image/album_201104_28_19393800rpr8pf0s8p8w0s.jpg\" alt=\"\" title=\"\"> \n **云中的音乐** \n \n 下载地址:\n\n\n\n\n> 列表: \n> <http://releases.ubuntu.com/11.04/> \n> 桌面版: \n> <http://www.ubuntu.com/download/ubuntu/download> \n> 服务器版: \n> <http://www.ubuntu.com/download/server/download>\n\n\n\n \n BT种子地址:\n\n\n\n\n> \n> * [ubuntu-11.04-alternate-amd64.iso.torrent](http://releases.ubuntu.com/11.04/ubuntu-11.04-alternate-amd64.iso.torrent)\n> * [ubuntu-11.04-alternate-i386.iso.torrent](http://releases.ubuntu.com/11.04/ubuntu-11.04-alternate-i386.iso.torrent)\n> * [ubuntu-11.04-desktop-amd64.iso.torrent](http://releases.ubuntu.com/11.04/ubuntu-11.04-desktop-amd64.iso.torrent)\n> * [ubuntu-11.04-desktop-i386.iso.torrent](http://releases.ubuntu.com/11.04/ubuntu-11.04-desktop-i386.iso.torrent)\n> * [ubuntu-11.04-netbook-i386.iso.torrent](http://releases.ubuntu.com/11.04/ubuntu-11.04-netbook-i386.iso.torrent)\n> * [ubuntu-11.04-server-amd64.iso.torrent](http://releases.ubuntu.com/11.04/ubuntu-11.04-server-amd64.iso.torrent)\n> * [ubuntu-11.04-server-i386.iso.torrent](http://releases.ubuntu.com/11.04/ubuntu-11.04-server-i386.iso.torrent)\n> \n> \n> \n\n\n\n \n 当前尚无DVD版本出现 \n \n \n \n 该贴已经同步到 [wxy的微博](http://api.t.sina.com.cn/1747813575/statuses/9786340397) \n \n \n \n\n\n \n\n\n*[本文内容由 wxy 提供](thread-7135-1-1.html)*\n \n\n\n\n 已同步至 [wxy的微博](http://api.t.sina.com.cn/1747813575/statuses/10347235925)",

"overall_image": "overall_image/134.png"

}

```

### 3.6 An example of mmc4-core-ff

```json

{

"meta": {

"language": "en",

"oi_exist": true,

"oi_source": "compiling",

"doc_id": 11,

"page_id": 0,

"source_dataset": "mmc4-core-ff",

"source_jsonl": "mmc4-core-ff/docs_no_face_shard_10375_v3.jsonl",

"ori_meta": {

"url": "http://position-light.blogspot.com/2015/06/whats-up-with-reading-and-northern.html",

"text_list": [

"The Position Light: What's Up with the Reading and Northern?",

"The Reading and Northern has been a rare bright spot in the world of signaling.",

"A commitment to its Reading heritage has resulted in numerous signaling structures being preserved along with attempts to install \"classic\" signaling where new signaling is being installed on its mostly unsignaled territory.",

"The R&N also controls the former Conrail Lehigh Line and for one reason or another has decided not to touch the surviving LVRR signaling along that route.",

"Still, I am still not completely clear on the full extent of the R&N's signal preservation efforts as hinted at in a number of photos I have come across.",

"We begin near the town of Mach Chunk where the R&N runs a tourist operation in the Lehigh Gorge.",

"i have bicycles along the right of way a number of time and I never noticed this cantilever mast and its freshly painted (albeit turned) signals.",

"Is this a sign of a new interlocking or signaling project?",

"Pottsville is the location of some preserved Reading signal bridges and a tower.",

"Both have been out of service for decades, but then I find a photo showing what appears to be a lit Reading US&S three headed signal displaying a restricting indication.",

"Could be that the photographer is having some fun with Photoshoppe, or it could be another R&N instance of an \"island\" interlocking designed to eliminate the need for crews to hand throw switches.",

"Clearly I need to take another field trip to the area, but if anyone has any information (or photos) please let me know.",

"Yes, that dual Signal Cantilever was taken from Schuylkill Haven and refurbished and placed into service as part of the new CP COAL Interlocking aptly named for the nearby town of Coalport.",

"This new interlocking controls R&N connector feed track and switch from Nesquehoning Jct onto the NS Lehigh Line.",

"Be aware, that R&N is constructing a new Y connector bridge over the Lehigh River.",

"The switch at Nesquehoning Jct as well at the Y connecting point northwest along the old CNJ into Nesquehoning and the other apex connecting point at the old Lehigh Valley overpass will make up the new Y along with the new bridge.",

"Expect the R&N to make all 3 points new CP Interlockings as NS will also use the new route to get to Reading & Philadelphia directly off the Lehigh Line.",

"Coming attractions for 2016.",

"Also, R&N is talking about a new signaled controlled passing track siding midway between Port Clinton and Reading.",

"Believe they will leverage the siding that's already in place (don't know name of that area, but, between two grade crossings).",

"Could see even more new R&N signaling if Distants are added to the mix as well.",

"Thank you for the information!",

"I knew something was up with them.",

"Mike - Have updates with pics for R&N.",

"Can share them with you but not sure of best way via e-mail or blog address.",

"Can you provide and I can forward what I have?",

"You can drop a line to [email protected] Thanks!"

],

"image_info": [

{

"face_detections": null,

"image_id": "11-0.png",

"image_name": "338146395110.jpg",

"matched_sim": 0.2532651722,

"matched_text_index": 12,

"raw_url": "http://www.railpictures.net/images/d2/6/0/1/6601.1425352225.jpg"

},

{

"face_detections": null,

"image_id": "11-1.png",

"image_name": "75dca5908f72.jpg",

"matched_sim": 0.2665729225,

"matched_text_index": 18,

"raw_url": "http://www.railpictures.net/images/d2/0/3/5/5035.1411414707.jpg"

}

],

"similarity_matrix": [

[

0.2208167017,

0.2216126323,

0.2174896896,

0.2322429568,

0.1835552454,

0.1933521628,

0.1114124805,

0.1734878719,

0.1712893993,

0.1681747884,

0.2151062787,

0.1558438838,

0.2532651722,

0.2029514462,

0.1683746874,

0.1972030103,

0.2269551754,

0.1497862041,

0.2076308429,

0.1459720433,

0.1406365782,

0.1131924018,

0.0637710392,

0.1748069972,

0.1665924788,

0.1288469583,

0.1271829307

],

[

0.2275835425,

0.2447894663,

0.2326766551,

0.2530837059,

0.197981596,

0.1727618128,

0.1842465401,

0.2053450346,

0.2174785137,

0.2176187485,

0.216365099,

0.152155906,

0.2394197732,

0.2332755029,

0.2077463269,

0.2373518944,

0.2454088479,

0.1549753994,

0.2665729225,

0.2099550366,

0.163154155,

0.1208794788,

0.0917887241,

0.1707040668,

0.1544941813,

0.1439596266,

0.1319040358

]

],

"could_have_url_duplicate": 0

},

"date_download": "2024-05-11"

},

"md": "The Position Light: What's Up with the Reading and Northern? The Reading and Northern has been a rare bright spot in the world of signaling. A commitment to its Reading heritage has resulted in numerous signaling structures being preserved along with attempts to install \"classic\" signaling where new signaling is being installed on its mostly unsignaled territory. The R&N also controls the former Conrail Lehigh Line and for one reason or another has decided not to touch the surviving LVRR signaling along that route. Still, I am still not completely clear on the full extent of the R&N's signal preservation efforts as hinted at in a number of photos I have come across. We begin near the town of Mach Chunk where the R&N runs a tourist operation in the Lehigh Gorge. i have bicycles along the right of way a number of time and I never noticed this cantilever mast and its freshly painted (albeit turned) signals. Is this a sign of a new interlocking or signaling project? Pottsville is the location of some preserved Reading signal bridges and a tower. Both have been out of service for decades, but then I find a photo showing what appears to be a lit Reading US&S three headed signal displaying a restricting indication. Could be that the photographer is having some fun with Photoshoppe, or it could be another R&N instance of an \"island\" interlocking designed to eliminate the need for crews to hand throw switches. Clearly I need to take another field trip to the area, but if anyone has any information (or photos) please let me know. Yes, that dual Signal Cantilever was taken from Schuylkill Haven and refurbished and placed into service as part of the new CP COAL Interlocking aptly named for the nearby town of Coalport.\n\n\n\n<img src='content_image/11-0.png'>\n\nThis new interlocking controls R&N connector feed track and switch from Nesquehoning Jct onto the NS Lehigh Line. Be aware, that R&N is constructing a new Y connector bridge over the Lehigh River. 
The switch at Nesquehoning Jct as well at the Y connecting point northwest along the old CNJ into Nesquehoning and the other apex connecting point at the old Lehigh Valley overpass will make up the new Y along with the new bridge. Expect the R&N to make all 3 points new CP Interlockings as NS will also use the new route to get to Reading & Philadelphia directly off the Lehigh Line. Coming attractions for 2016. Also, R&N is talking about a new signaled controlled passing track siding midway between Port Clinton and Reading.\n\n\n\n<img src='content_image/11-1.png'>\n\nBelieve they will leverage the siding that's already in place (don't know name of that area, but, between two grade crossings). Could see even more new R&N signaling if Distants are added to the mix as well. Thank you for the information! I knew something was up with them. Mike - Have updates with pics for R&N. Can share them wi",

"license": "ODC-BY",

"quality_signals": null,

"content_image": [

"content_image/11-0.png",

"content_image/11-1.png"

],

"overall_image": "overall_image/11-0.png"

}

```

### 3.7 An example of PG19

```json

{

"meta": {

"language": "en",

"oi_exist": true,

"oi_source": "compiling",

"doc_id": 871,

"page_id": 0,

"source_dataset": "pg19",

"split": "train",

"ori_meta": {

"url": "http://www.gutenberg.org/ebooks/9304",

"short_book_title": "Initiation into Philosophy by Emile Faguet",

"publication_date": 1914

},

"date_download": "2024-05-10"

},

"md": "# Initiation into Philosophy by Emile Faguet \n\n Produced by Ted Garvin, Thomas Hutchinson and PG Distributed Proofreaders \n\n \n\n \n\n \n\n \n\n INITIATION INTO PHILOSOPHY \n\n \nBy Emile Faguet \n\n Of the French Academy \n\n \nAuthor of \"The Cult Of Incompetence,\" \"Initiation Into Literature,\" etc. \n\n \nTranslated from the French by Sir Homer Gordon, Bart. \n\n 1914 \n\n \n\n \nPREFACE \n\n This volume, as indicated by the title, is designed to show the way to the beginner, to satisfy and more espec ially to excite his initial curiosity. It affords an adequate idea of the march of facts and of ideas. The rea der is led, somewhat rapidly, from the remote origins to the most recent efforts of the human mind. \n\n It should be a convenient repertory to which the mind may revert in order to see broadly the general opinion o f an epoch--and what connected it with those that followed or preceded it. It aims above all at being _a frame _ in which can conveniently be inscribed, in the course of further studies, new conceptions more detailed and more thoroughly examined. \n\n It will have fulfilled its design should it incite to research and meditation, and if it prepares for them cor rectly. \n\n E. FAGUET. \n\n \n\n \nCONTENTS \n\n \nPART I ANTIQUITY \n\n \nCHAPTER I BEFORE SOCRATES \n\n Philosophical Interpreters of the Universe, of the Creation and Constitution of the World. \n\n \nCHAPTER II THE SOPHISTS \n\n Logicians and Professors of Logic, and of the Analysis of Ideas, and of Discussion. \n\n \nCHAPTER III SOCRATES \n\n Philosophy Entirely Reduced to Morality, and Morality Considered as the End of all Intellectual Activity. \n\n \nCHAPTER IV PLATO \n\n Plato, like Socrates, is Pre-eminently a Moralist, but he Reverts to General Consideration of the Universe, an d Deals with Politics and Legislation. \n\n \nCHAPTER V ARISTOTLE",

"license": "Apache 2.0",

"quality_signals": null,

"content_image": null,

"overall_image": "overall_image/871-0.png"

}

```

### 3.8 An example of PIN-PMC

```json

{

"meta": {

"language": "en",

"doc_id": "PMC3015258",

"oi_exist": true,

"oi_source": "ori",

"source_dataset": "PIN-PMC",

"ori_meta": null,

"page_id": null,

"date_download": "2024-05-28"

},

"md": "# A Simple Stereoscopic Endoscope\n\n## Abstract\n\nA very simple method is described for producing and viewing stereoscopic endoscopic images.\nThe addition of two simple prisms to the end of a conventional television-monitored endoscope with a simple viewing device produces a stereoscopic endoscope which appears to be suitable for surgical use......",

"license": [

"https://www.ncbi.nlm.nih.gov/pmc/tools/textmining/"

],

"quality_signals": {

"doc_length": 8269

},

"content_image": [

"content_image/PMC3015258/jsls-2-1-67-g03.jpg",

"content_image/PMC3015258/jsls-2-1-67-g04.jpg",

"content_image/PMC3015258/jsls-2-1-67-g01.jpg",

"content_image/PMC3015258/jsls-2-1-67-g02.jpg",

"content_image/PMC3015258/jsls-2-1-67-g05.jpg"

],

"overall_image": [

"overall_image/PMC3015258/jsls-2-1-67_3.png",

"overall_image/PMC3015258/jsls-2-1-67_0.png",

"overall_image/PMC3015258/jsls-2-1-67_1.png",

"overall_image/PMC3015258/jsls-2-1-67_2.png"

],

"id": 60827

}

```

## 4 License

For data generated or produced by us, please adhere to the Apache 2.0 License.

For data sourced from third parties, compliance with the respective third-party licenses is required.

## Citation

```

@article{DBLP:journals/corr/abs-2406-13923,

author = {Junjie Wang and

Yin Zhang and

Yatai Ji and

Yuxiang Zhang and

Chunyang Jiang and

Yubo Wang and

Kang Zhu and

Zekun Wang and

Tiezhen Wang and

Wenhao Huang and

Jie Fu and

Bei Chen and

Qunshu Lin and

Minghao Liu and

Ge Zhang and

Wenhu Chen},

title = {{PIN:} {A} Knowledge-Intensive Dataset for Paired and Interleaved

Multimodal Documents},

journal = {CoRR},

volume = {abs/2406.13923},

year = {2024}

}

``` |

allenai/MADLAD-400 | allenai | "2024-09-09T16:23:42Z" | 31,250 | 132 | [

"task_categories:text-generation",

"license:odc-by",

"size_categories:n>1T",

"arxiv:2309.04662",

"arxiv:2010.14571",

"arxiv:2103.12028",

"region:us"

] | [

"text-generation"

] | "2023-09-01T00:06:27Z" | ---

license: odc-by

task_categories:

- text-generation

size_categories:

- n>1T

---

# MADLAD-400

## Dataset and Introduction

[MADLAD-400 (*Multilingual Audited Dataset: Low-resource And Document-level*)](https://arxiv.org/abs/2309.04662) is a document-level multilingual dataset based on Common Crawl, covering 419 languages in total. It uses all snapshots of Common Crawl available as of August 1, 2022. The primary advantage of this dataset over similar datasets is that it is more multilingual (419 languages), it is audited and more highly filtered, and it is document-level. The main disadvantage is also its strength: being more filtered, it may lack the recall needed for some applications.

There are two versions released: the **noisy** dataset, which has no filtering except document-level LangID, and the **clean** dataset, which has a variety of filters applied, though it naturally has a fair amount of noise itself. Each dataset is released in a document-level form that has been deduplicated.

## Loading

You can load both the clean and noisy versions of any language by specifying its LangID:

~~~
from datasets import load_dataset

madlad_abt = load_dataset("allenai/madlad-400", "abt")
~~~

A list of languages can also be supplied with a keyword argument:

~~~

madlad_multilang = load_dataset("allenai/madlad-400", languages=["abt", "ace"])

~~~

Additionally, you can load the noisy and clean subsets separately with the `split` keyword argument:

~~~

madlad_multilang_clean = load_dataset("allenai/madlad-400", languages=["abt", "ace"], split="clean")

~~~

## LangID model and Crawl

Following [Language Id In the Wild](https://arxiv.org/pdf/2010.14571.pdf), we trained a Semi-Supervised LangId model (SSLID) on 500 languages. The training data is as described in that paper, with two differences: 1) training data is sampled to a temperature of `T=3` to reduce over-triggering on low-resource languages; and 2) the data is supplemented with web-crawled data from the same paper (which has already been through the various filters described therein) in the hope that it will increase robustness to web-domain text.
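This sketch illustrates what sampling to a temperature of `T=3` does. It assumes the common formulation, in which a language with n_i examples out of N total is sampled with probability proportional to (n_i / N)^(1/T). The card does not spell out the exact formula used, and the counts below are hypothetical.

```python
def temperature_sampling_probs(counts, temperature=3.0):
    """Return per-language sampling probabilities p_i proportional to (n_i / N) ** (1 / T)."""
    total = sum(counts.values())
    # Raise each language's raw share to 1/T, which flattens the distribution for T > 1
    scaled = {lang: (n / total) ** (1.0 / temperature) for lang, n in counts.items()}
    norm = sum(scaled.values())
    return {lang: s / norm for lang, s in scaled.items()}


# Hypothetical counts: one large language and one small language.
probs = temperature_sampling_probs({"en": 1_000_000, "abt": 1_000})
```

With these counts, the raw 1000:1 ratio becomes 1000^(1/3) = 10:1. The smaller language therefore ends up with a sampling probability of about 1/11.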

## Filtering

Before separating the raw Common Crawl corpus by LangID, the following filtering steps were applied, similar to Raffel et al. (2020):

- Discarded any page with fewer than 5 sentences and only retained lines that contained at least 3 words.

- Removed any line with the word Javascript.

- Removed any page where the phrase “lorem ipsum” appeared.

- Removed any pages containing the phrases "terms of use", "privacy policy", "cookie policy", "uses cookies", "use of cookies", "use cookies".

- Removed any pages that contained a curly bracket.

- To deduplicate the data set, discarded all but one of any three-sentence span occurring more than once in the data set.
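The page-level filters above can be sketched roughly as follows. This is not the authors' code, and it rests on several assumptions:

- The sentence splitter is a naive assumption.
- The `Javascript` and phrase checks are assumed to be case-insensitive.
- The three-sentence-span deduplication is omitted because it needs corpus-wide state.

`filter_page` is a hypothetical name.

```python
import re

BANNED_PHRASES = ["terms of use", "privacy policy", "cookie policy",
                  "uses cookies", "use of cookies", "use cookies"]


def filter_page(text):
    """Return the cleaned page text, or None if the whole page is discarded."""
    lowered = text.lower()
    # Page-level rejections: lorem ipsum, curly brackets, boilerplate phrases
    if "lorem ipsum" in lowered or "{" in text or "}" in text:
        return None
    if any(phrase in lowered for phrase in BANNED_PHRASES):
        return None
    # Line-level filtering: keep lines with >= 3 words and no mention of Javascript
    kept = [line for line in text.splitlines()
            if len(line.split()) >= 3 and "javascript" not in line.lower()]
    # Naive sentence count (assumption): split on runs of ., ! and ?
    sentences = [s for s in re.split(r"[.!?]+", " ".join(kept)) if s.strip()]
    if len(sentences) < 5:
        return None
    return "\n".join(kept)
```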

The `noisy` subset of the data was filtered only by document-level LangID, which was taken to be the majority sentence-level LangID prediction. The `clean` subset removed all documents with a `pct_questionable` score greater than 20%. It furthermore removed any document with under 5 sentences.

The `pct_questionable` score is simply the percentage of sentences in the input document that were "questionable". A sentence was considered questionable if any of the following were true:

* **LangID Consistency:** The sentence-level LangID does not match the document-level LangID.

* **List Case:** The sentence has at least 12 tokens, and over 50% of the tokens begin with a capital letter.

* **Length:** The sentence has under 20 characters or over 500 characters (note: this is a bad heuristic for ideographic languages).

* **Danger Chars:** Over 20% of the characters in the sentence match `[0-9{}+/()>]`.

* **Cursedness:** The sentence matches a cursed regex (see below).
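A rough sketch of the `pct_questionable` computation and the `clean` decision follows. It makes three assumptions:

- Sentences and their sentence-level LangID predictions are already available.
- The List Case check uses whitespace tokenization.
- The cursed-substring check is supplied by the caller as a function.

All function names are illustrative.

```python
import re
from collections import Counter

DANGER_CHARS = re.compile(r"[0-9{}+/()>]")


def document_langid(sentence_langids):
    """Document-level LangID: the majority sentence-level prediction."""
    return Counter(sentence_langids).most_common(1)[0][0]


def is_questionable(sentence, sentence_langid, doc_langid, is_cursed):
    tokens = sentence.split()  # simplistic whitespace tokenization (assumption)
    if sentence_langid != doc_langid:  # LangID Consistency
        return True
    if len(tokens) >= 12 and sum(t[0].isupper() for t in tokens) / len(tokens) > 0.5:
        return True  # List Case
    if len(sentence) < 20 or len(sentence) > 500:  # Length (also guards empty strings)
        return True
    if len(DANGER_CHARS.findall(sentence)) / len(sentence) > 0.2:  # Danger Chars
        return True
    return is_cursed(sentence)  # Cursedness


def pct_questionable(sentences, langids, is_cursed=lambda s: False):
    if not sentences:
        return 0.0
    doc_langid = document_langid(langids)
    flagged = sum(is_questionable(s, l, doc_langid, is_cursed)
                  for s, l in zip(sentences, langids))
    return 100.0 * flagged / len(sentences)


def keep_in_clean(sentences, langids, is_cursed=lambda s: False):
    # Clean subset: at least 5 sentences and at most 20% questionable sentences
    return len(sentences) >= 5 and pct_questionable(sentences, langids, is_cursed) <= 20.0
```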

### Cursed Substrings

Based on the initial round of data audits, the authors created a heuristic list of substrings and regexes accounting for a large amount of questionable content. Keep in mind that these all feed into the `pct_questionable` score: a document is only excluded from the `clean` dataset if over 20% of its sentences are flagged as questionable.

Notes about cursed substrings:

* Low-quality sentences ending in the pipe character were very common. Before you ask, this was not Devanagari-script text using a Danda.

* The last few regexes are meant to match `A N T S P E A K`, `List Case`, and weirdly regular text (for instance, lists of shipping labels or country codes).

```python
import re

CURSED_SUBSTRINGS = [" №", "���", "\\|\\s*$", " nr\\.$", "aute irure dolor ", " sunt in culpa qui ", "orem ipsum ", " quis nostrud ", " adipisicing ", " dolore eu ", " cupidatat ", "autem vel eum", "wisi enim ad", " sex ", " porn ", "黄色电影", "mp3", "ownload", "Vol\\.", " Ep\\.", "Episode", " г\\.\\s*$", " кг\\.\\s*$", " шт\\.", "Develop", "Facebook", " crusher ", " xxx ", " ... ... ... ... ... ... ... ... ...", " .... .... .... .... .... .... .... .... ....", " [^ ] [^ ] [^ ] [^ ] [^ ] [^ ] [^ ] [^ ] [^ ]", ", ..,,? ..,,? ..,,? ..,,?"]

# This implementation is for demonstration and is pretty inefficient; to speed
# it up, use plain string inclusion (`in`) for the entries without regex syntax,
# and a single compiled regex for the rest.
def is_cursed(s):
    # A sentence is cursed if any pattern matches anywhere in it.
    return any(re.search(curse, s) for curse in CURSED_SUBSTRINGS)
```
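
One way to speed this up is to compile the whole list once into a single alternation, instead of running the regex engine separately for each pattern. This is a sketch, not the authors' implementation, and it assumes every entry is a valid Python regex. Several entries before the last four also use regex syntax (e.g. `\\|\\s*$`, ` nr\\.$`, `Vol\\.`), so they cannot be swapped for plain `in` checks without first unescaping them.

```python
import re
from typing import Callable, List


def make_cursed_matcher(patterns: List[str]) -> Callable[[str], bool]:
    # Wrap each pattern in a non-capturing group so anchors such as `$`
    # stay attached to their own alternative, then compile everything once.
    combined = re.compile("|".join(f"(?:{p})" for p in patterns))
    return lambda s: combined.search(s) is not None
```

For example, `make_cursed_matcher(CURSED_SUBSTRINGS)` returns a drop-in replacement for `is_cursed`.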

### Virama Correction

Many languages written in Brahmic abugidas (South and Southeast Asian scripts like Devanagari, Khmer, etc.) use some variant of the virama character. For whatever reason, this character was often found to be mangled in the Common Crawl snapshots used. Therefore, a special correction step was done for the languages `bn my pa gu or ta te kn ml si th tl mn lo bo km hi mr ne gom as jv dv bho dz hne ks_Deva mag mni shn yue zh ja kjg mnw ksw rki mtr mwr xnr`.

For these languages, the authors took the list of all virama characters and used a regex to remove the unnecessary spaces around each instance of a virama character:

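```python
import regex  # third-party `regex` package (the stdlib `re` works the same for this pattern)

x = regex.sub(r' ([%s]) ' % _VIRAMA_CHARS, r'\1', x)  # " <virama> " -> "<virama>"
```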
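
Here is a self-contained sketch for trying the correction in isolation. The authors' `_VIRAMA_CHARS` list is not reproduced in this card, so `VIRAMA_CHARS` below is a hypothetical five-character subset. Like the pattern above, it only collapses a virama that has a space on each side.

```python
import re

# Hypothetical subset of virama-like signs; not the authors' full list.
VIRAMA_CHARS = [
    "\u094D",  # DEVANAGARI SIGN VIRAMA
    "\u09CD",  # BENGALI SIGN VIRAMA
    "\u0BCD",  # TAMIL SIGN VIRAMA
    "\u1039",  # MYANMAR SIGN VIRAMA
    "\u17D2",  # KHMER SIGN COENG
]
_VIRAMA_RE = re.compile(" ([%s]) " % "".join(VIRAMA_CHARS))


def fix_virama_spacing(text: str) -> str:
    # Replace " <virama> " with the bare virama so it rejoins its neighbours.
    return _VIRAMA_RE.sub(r"\1", text)
```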

### Myanmar Font Compatibility

Prior to 2019, the most popular font for Burmese websites was the Zawgyi font, which is not Unicode-compliant. The authors used [Myanmar Tools](https://github.com/google/myanmar-tools) to convert Zawgyi-encoded text to standard Unicode.

Several scripts, like the Chinese script, Tibetan script, and Thai, do not use whitespace to separate words. The languages with this property in this dataset are `yue zh ja th lo kjg mnw my shn ksw rki km bo dz`.

Alas, the **Length** aspect of the `pct_questionable` score was calculated using simplistic whitespace tokenization, which rendered the whole `pct_questionable` score invalid for those languages. Therefore, for these languages, the "clean" data is identical to the "noisy" data (barring Chinese; see below).
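
A whitespace split shows the failure mode clearly. A Chinese sentence written without spaces comes back as a single "token", so any measurement built on whitespace tokens is meaningless for these languages. The sketch below also encodes the resulting rule that these languages skip the `pct_questionable` filter. Both helper names are hypothetical.

```python
SPACELESS_LANGS = {"yue", "zh", "ja", "th", "lo", "kjg", "mnw", "my",
                   "shn", "ksw", "rki", "km", "bo", "dz"}


def whitespace_token_count(sentence: str) -> int:
    # The simplistic tokenization referred to above.
    return len(sentence.split())


def uses_pct_questionable(lang: str) -> bool:
    # Spaceless languages skip the filter, so their clean data equals the noisy
    # data (Chinese additionally gets the special filter described below).
    return lang not in SPACELESS_LANGS
```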

### Special filters

Chinese had a particular issue with pornographic content. After manual inspection, a list of strings likely to be present in pornographic content was developed, and all pages containing at least one of these strings were removed. This resulted in a 17% reduction in the number of documents and a 56% reduction in file size.

```python
pornsignals = "caoporn caoprom caopron caoporen caoponrn caoponav caopom caoorn 99re dy888 caopro hezyo re99 4438x zooskool xfplay 7tav xxoo xoxo 52av freexx 91chinese anquye cao97 538porm 87fuli 91pron 91porn 26uuu 4438x 182tv kk4444 777me ae86 91av 720lu yy6080 6080yy qqchub paa97 aiai777 yy4480 videossexo 91free 一级特黄大片 偷拍久久国产视频 日本毛片免费视频观看 久久免费热在线精品 高清毛片在线看 日本毛片高清免费视频 一级黄色录像影片 亚洲男人天堂 久久精品视频在线看 自拍区偷拍亚洲视频 亚洲人成视频在线播放 色姑娘综合站 丁香五月啪啪 在线视频成人社区 亚洲人成视频在线播放 久久国产自偷拍 一本道 大香蕉无码 香港经典三级 亚洲成在人线免费视频 天天色综合网 大香蕉伊人久草 欧美一级高清片 天天鲁夜夜啪视频在线 免费黄片视频在线观看 加比勒久久综合 久草热久草在线视频 韩国三级片大全在线观看 青青草在线视频 美国一级毛片 久草在线福利资源 啪啪啪视频在线观看免费 成人福利视频在线观看 婷婷我去也 老司机在线国产 久久成人视频 手机看片福利永久国产 高清国产偷拍在线 大香蕉在线影院 日本高清免费一本视频 男人的天堂东京热 影音先锋男人资源 五月婷婷开心中文字幕 亚洲香蕉视频在线播放 天天啪久久爱视频精品 超碰久久人人摸人人搞".split()
```
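
The sketch below shows the page-level filter and one way to measure the reported reductions. It assumes plain substring matching and uses UTF-8 byte size as the measure of "file size". The function name is hypothetical, and the signals in the test are only a sample of `pornsignals`.

```python
from typing import Iterable, List, Tuple


def filter_by_signals(docs: List[str], signals: Iterable[str]) -> Tuple[List[str], float, float]:
    """Drop every document containing at least one signal string.

    Returns the kept documents, the fraction of documents removed,
    and the fraction of UTF-8 bytes removed.
    """
    signals = list(signals)
    kept = [d for d in docs if not any(sig in d for sig in signals)]
    doc_reduction = 1 - len(kept) / len(docs) if docs else 0.0
    size_before = sum(len(d.encode("utf-8")) for d in docs)
    size_after = sum(len(d.encode("utf-8")) for d in kept)
    size_reduction = 1 - size_after / size_before if size_before else 0.0
    return kept, doc_reduction, size_reduction
```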

A few more random notes, comparing against common alternative codes for these languages:

* `fil` for Filipino/Tagalog, not `tl`

* `ak` for Twi/Akan, rather than `tw`. This includes Fante.

* Unfortunately, the macro code `chm` is used for Meadow Mari (instead of the correct `mhr`), and `mrj` for Hill Mari

* `no` for Norwegian Bokmål, whereas some resources use `nb`

* `ps` for Pashto instead of `pbt` (Southern Pashto)

* `ms` for Standard Malay, not `zlm`

* `sq` for Albanian, without distinguishing dialects like Gheg (`aln`) and Tosk (`als`)

* `ber` as the code for Tamazight, after consultation with Tamazight speakers who opined that the dialect distinctions are not significant. Other resources use the individual codes like `tzm` and `kab`.

* Macro code `qu` for Quechua. In practice, this seems usually to be a mix of the Ayacucho and Cusco dialects. Other resources, like NLLB, may use the dialect code, e.g. `quy` for Ayacucho Chanka. The same is true for a few other macro codes, like `ff` (macro code for Fulfulde, whereas other sources may use e.g. `fuv`).

* Really, there are notes that could be made about almost any code. These range from well-accepted conventions like `zh` for Mandarin to many dialectal questions, such as which variant of Hmong the `hmn` data really is. The notes above were made specifically for codes where the authors are aware of other data sources that use different conventions.
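
When combining this dataset with resources that follow the other conventions, the notes above can be captured in a lookup table. This is a hypothetical helper, not something shipped with the dataset. It covers only the codes named above, and some mappings are lossy (for example, `quy` is only one of the varieties folded into `qu`).

```python
# Alternative code -> code used in this dataset, per the notes above.
ALT_TO_DATASET_CODE = {
    "tl": "fil",
    "tw": "ak",
    "mhr": "chm",
    "nb": "no",
    "pbt": "ps",
    "zlm": "ms",
    "aln": "sq",
    "als": "sq",
    "tzm": "ber",
    "kab": "ber",
    "quy": "qu",
    "fuv": "ff",
}


def to_dataset_code(code: str) -> str:
    # Codes not listed above are passed through unchanged.
    return ALT_TO_DATASET_CODE.get(code, code)
```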

## Audit

Following [Quality at a Glance](https://arxiv.org/abs/2103.12028), the authors performed an "audit" of every corpus in this dataset. Although the authors did not speak most of the languages, they were able to give high-level comments on the general quality. They looked at a sample of 20 documents for each language.

After an initial round of auditing, they devised a new set of filters and applied them. They then redid all the audits.

### Overall notes from the audit

The decision was to **include languages that looked noisy, but omit any language that was clearly majority noise, or only had 20 or fewer docs.** This is a low bar: twenty documents can be very little indeed, and some of the corpora released are quite noisy, but all of them should have at least the potential to be used in some useful way. The motivation for not releasing nonsense or tiny datasets is to avoid giving a false sense of how multilingual this dataset actually is ("representation washing"), as recommended by **Quality at a Glance**.

A few overarching points:

* Many low-resource languages only had Bible text, or in some cases jw.org data. These are marked in the rows below. Generally, `ok bible` means that 100% of the audited sentences were Biblical, whereas if `bible` is simply mentioned in the note, it was not the only source of data.

* Indian languages in the Latin script had a high concentration of pornographic content.

### Renames and Merges as a result of the Audit

In several cases, it was clear from the audit that the corpora were not in the languages the LangID model claimed. This led to the following renames and merges:

* `dty` renamed to `zxx-xx-dtynoise`, aka a "language" of noise. This is mainly mis-rendered PDFs and may have some practical applications for decoding such text.

* `fan` renamed to `bum`

* `ss-SZ` renamed to `ss`; this was just a result of inconsistent data labels.

* `cjk` merged into the `gil` dataset

* `bjj` merged into the `awa` dataset
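
If older labels show up in downstream tooling, the renames and merges above can be expressed as a relabeling step. This is a hypothetical sketch. It concatenates the documents of any labels that collapse to the same code.

```python
from collections import defaultdict
from typing import Dict, List

# Old label -> label after the audit, per the list above.
AUDIT_RENAMES = {
    "dty": "zxx-xx-dtynoise",
    "fan": "bum",
    "ss-SZ": "ss",
    "cjk": "gil",
    "bjj": "awa",
}


def relabel_corpora(corpora: Dict[str, List[str]]) -> Dict[str, List[str]]:
    merged: Dict[str, List[str]] = defaultdict(list)
    for label, docs in corpora.items():
        # Renamed labels move; merged labels append to the target corpus.
        merged[AUDIT_RENAMES.get(label, label)].extend(docs)
    return dict(merged)
```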

## Canaries

Canaries are provided in a separate `canaries` folder and are organized into three directories: `monolingual` hosts canaries designed for the MADLAD-400 monolingual data, `multiway` for the multiway data, and `generic` for the generic canaries generated only from the model's vocabulary.

* Monolingual: Canaries here are organized by the language the canary was generated from. This corresponds exactly to the `translate_copy` setting in the paper, where the source and target language match.

* Multiway: Canaries here are organized in one of two fashions. `to_XX` indicates canaries organized by the target language (where the source language could be any language). `XX-XX` indicates the canaries (`interleaved_both` and `interleaved_mislabeled_both`) designed for a specific pair of languages.

Within each subdirectory above, canaries are split into separate files named by canary type. There is always only a single file for each canary type. The `generic` folder contains the files for the four canary types.

Canaries can be mixed in with normal training data and then analyzed post hoc, after training.
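
The following sketch indexes the canary files. It assumes the layout `canaries/<direction>/<group>/<canary_type>.<ext>` for `monolingual` and `multiway`, and `canaries/generic/<canary_type>.<ext>` for the generic canaries. Exact file names and extensions are not given here, so the function (hypothetical) keys each file by its stem.

```python
from pathlib import Path
from typing import Dict, Tuple


def index_canaries(root: str) -> Dict[Tuple[str, str, str], Path]:
    """Map (direction, group, canary_type) to a file path; group is "" for generic."""
    root_path = Path(root)
    index: Dict[Tuple[str, str, str], Path] = {}
    for path in root_path.rglob("*"):
        if not path.is_file():
            continue
        parts = path.relative_to(root_path).parts
        if len(parts) == 2 and parts[0] == "generic":
            index[("generic", "", path.stem)] = path
        elif len(parts) == 3:
            # e.g. monolingual/<lang>/<type> or multiway/<to_XX or XX-XX>/<type>
            direction, group, _ = parts
            index[(direction, group, path.stem)] = path
    return index
```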

## References

Raffel, Colin, et al. "Exploring the limits of transfer learning with a unified text-to-text transformer." J. Mach. Learn. Res. 21.140 (2020): 1-67.

## Contact